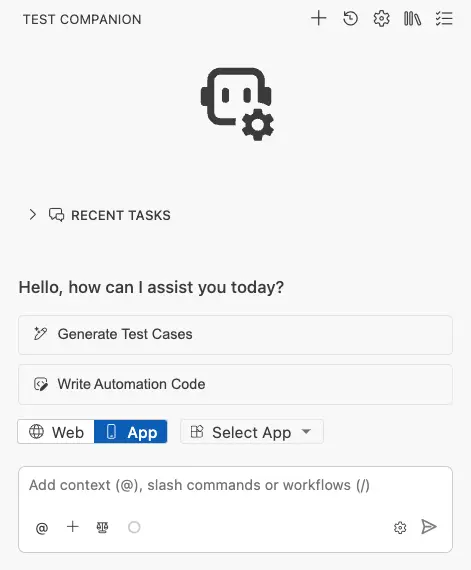

Generate test cases

Learn how to use Test Companion to automatically generate test cases for your mobile applications.

Test Companion generates structured, detailed test cases for your mobile app. You can provide specific requirements or let the AI explore the app on a real device. Either way, it produces test cases grouped by scenario that are ready to be reviewed, refined, synced to your test management system, and automated.

There are two ways to generate test cases:

- From requirements: Provide your requirements, user stories, UI snapshots, Jira links, Confluence links, or feature descriptions, and Test Companion will generate test cases based on that information.

- By exploring the app: Ask Test Companion to explore your app, and it will navigate through screens, capture UI data, and generate test cases based on the app’s actual behavior and structure.

Before generating test cases, Test Companion creates an action plan that appears in the chat. This plan shows the AI agents’ approach, how they will break down your request into categories, and what types of test cases they will generate.

Generate from requirements

If you have a requirements document, user story, product requirements document (PRD), or feature description, you can share it with Test Companion to generate test cases. The AI will analyze your requirements and produce test cases specifically for mobile apps, considering platform-specific interactions such as gestures, device permissions, and changes in orientation.

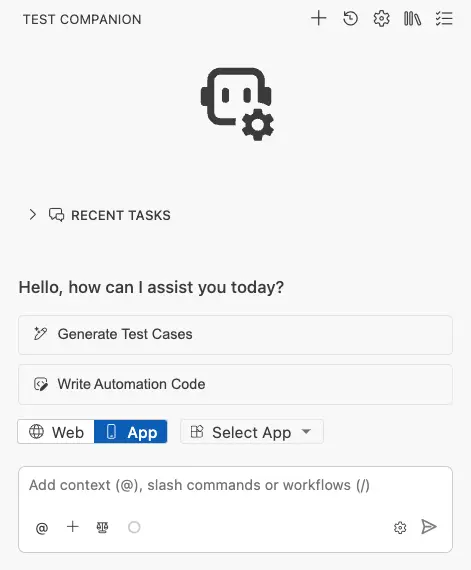

Follow these steps to generate test cases from requirements:

- Ensure you are in App mode with your app connected.

- In the chat box, provide the AI with context. You can combine multiple methods:

  - Attach a file: Click the + (add files & images) icon to attach your main PRD, spec, or image file.

  - Paste text: Provide a clear prompt or directly paste your requirements, Jira links, Confluence links, or user story into the chat.

- Click the Send message icon.

Test Companion generates test cases organized by scenario.

Example prompt:

Generate test cases for the checkout flow based on the following PRD:

The user should be able to:

- Add items to the cart from the product listing page.

- View the cart and modify quantities.

- Enter a shipping address.

- Choose a payment method (credit card or PayPal).

- Place the order and see a confirmation screen.

Cover happy paths, negative cases (invalid card, empty cart), and edge cases (network interruption mid-checkout, session timeout).

Generate by exploring the app

If you do not have written requirements or if you prefer test cases based on the app’s actual behavior rather than documentation, you can use Test Companion to explore your app on a real device and generate test cases from its observations.

When you initiate this process, Test Companion launches your app on a BrowserStack device, navigates through the screens, captures UI hierarchy data and screenshots, and creates test cases that accurately reflect how the app functions.

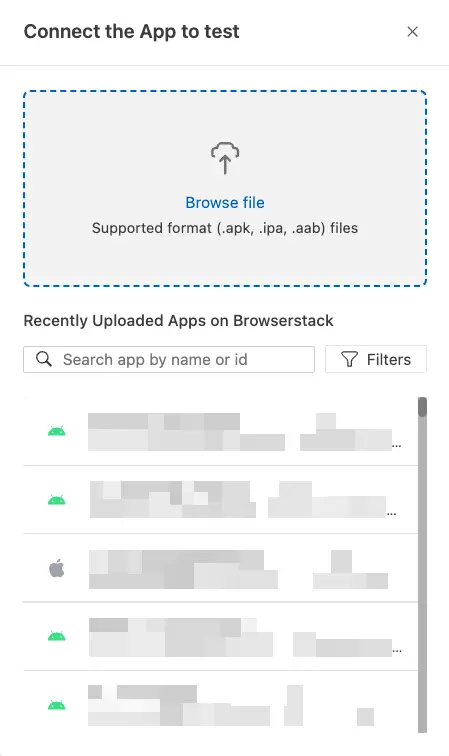

Follow these steps to generate test cases by exploring the app:

-

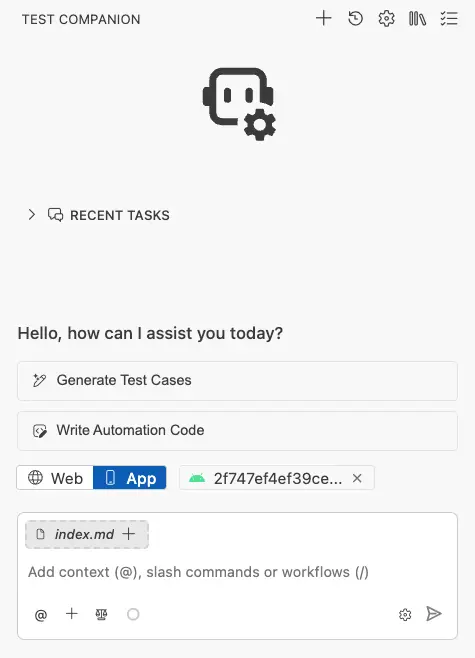

Ensure you are in App mode.

- Click Select App dropdown to choose your app, or confirm that your app is already connected.

-

In Connect the App to test panel, select the app you want to test from the list of uploaded apps or upload a new one.

- In the chat box, provide the AI with context. You can combine multiple methods:

- Attach a file: Click the + (add files & images) icon to attach your main PRD, spec, or image file.

- Paste text: Provide a clear prompt or directly paste your requirements, Jira links, Confluence links, or user story into the chat asking the AI agent to explore the app and generate test cases for a specific feature, flow, or set of screens.

- Test Companion starts a device session, opens your app, and navigates through the relevant screens.

The AI generates test cases based on the elements, interactions, and flows it discovers.

Example prompt:

Explore the app and generate test cases for the entire onboarding flow, from the first screen a new user sees to the point where they reach the home screen. Include tests for:

- Each step of the onboarding wizard.

- Skipping optional steps.

- Going back to a previous step.

- What happens if the user closes the app mid-onboarding and reopens it.

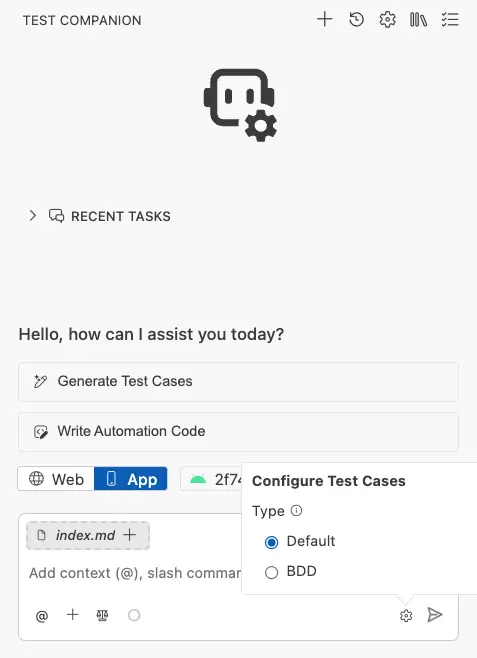

Configure test case format

Before the AI agent begins generating, you can select the format for the generated test cases in the Configure Test Cases panel at the bottom of the screen:

| Format | Description |
|---|---|
| Default | The standard test case format, with a title, numbered steps, and expected results. Suitable for most teams. |
| BDD | The Behavior-Driven Development format, using Given/When/Then syntax. Suitable for teams that use BDD frameworks such as Cucumber, SpecFlow, or Behave. |

Choose the format that best fits your team’s workflow. This setting applies to the current generation task.
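To make the difference between the two formats concrete, the following minimal Python sketch renders one hypothetical test case, the empty-cart negative case from the checkout prompt above, in both layouts. The `TestCase` and `Step` classes and the `render_default` and `render_bdd` helpers are illustrative assumptions; they are not Test Companion's internal schema or its export format.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    action: str    # what the tester does, e.g. a tap or swipe
    expected: str  # the result that should follow the action


@dataclass
class TestCase:
    # Hypothetical model for illustration; not Test Companion's internal schema.
    title: str
    preconditions: list[str] = field(default_factory=list)
    steps: list[Step] = field(default_factory=list)


def _sentence(text: str) -> str:
    """Capitalize only the first character, leaving the rest unchanged."""
    return text[:1].upper() + text[1:]


def render_default(tc: TestCase) -> str:
    """Default layout: title, preconditions, then numbered steps with expected results."""
    lines = [f"Title: {tc.title}"]
    lines += [f"Precondition: {_sentence(p)}" for p in tc.preconditions]
    for number, step in enumerate(tc.steps, start=1):
        lines.append(f"{number}. {_sentence(step.action)}")
        lines.append(f"   Expected: {_sentence(step.expected)}")
    return "\n".join(lines)


def render_bdd(tc: TestCase) -> str:
    """BDD layout: preconditions become Given/And, each step a When/Then pair."""
    lines = [f"Scenario: {tc.title}"]
    for index, pre in enumerate(tc.preconditions):
        keyword = "Given" if index == 0 else "And"
        lines.append(f"  {keyword} {pre}")
    for step in tc.steps:
        lines.append(f"  When {step.action}")
        lines.append(f"  Then {step.expected}")
    return "\n".join(lines)


# Hypothetical example: a negative case from the checkout prompt (empty cart).
empty_cart = TestCase(
    title="Checkout is blocked when the cart is empty",
    preconditions=["the user is signed in", "the cart is empty"],
    steps=[
        Step("the user opens the cart", "an empty-cart message is shown"),
        Step("the user taps Checkout", "the checkout screen does not open"),
    ],
)

print(render_default(empty_cart))
print()
print(render_bdd(empty_cart))
```

Mapping every action and expected result to a When/Then pair keeps the sketch short; hand-written BDD scenarios often group actions more freely.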

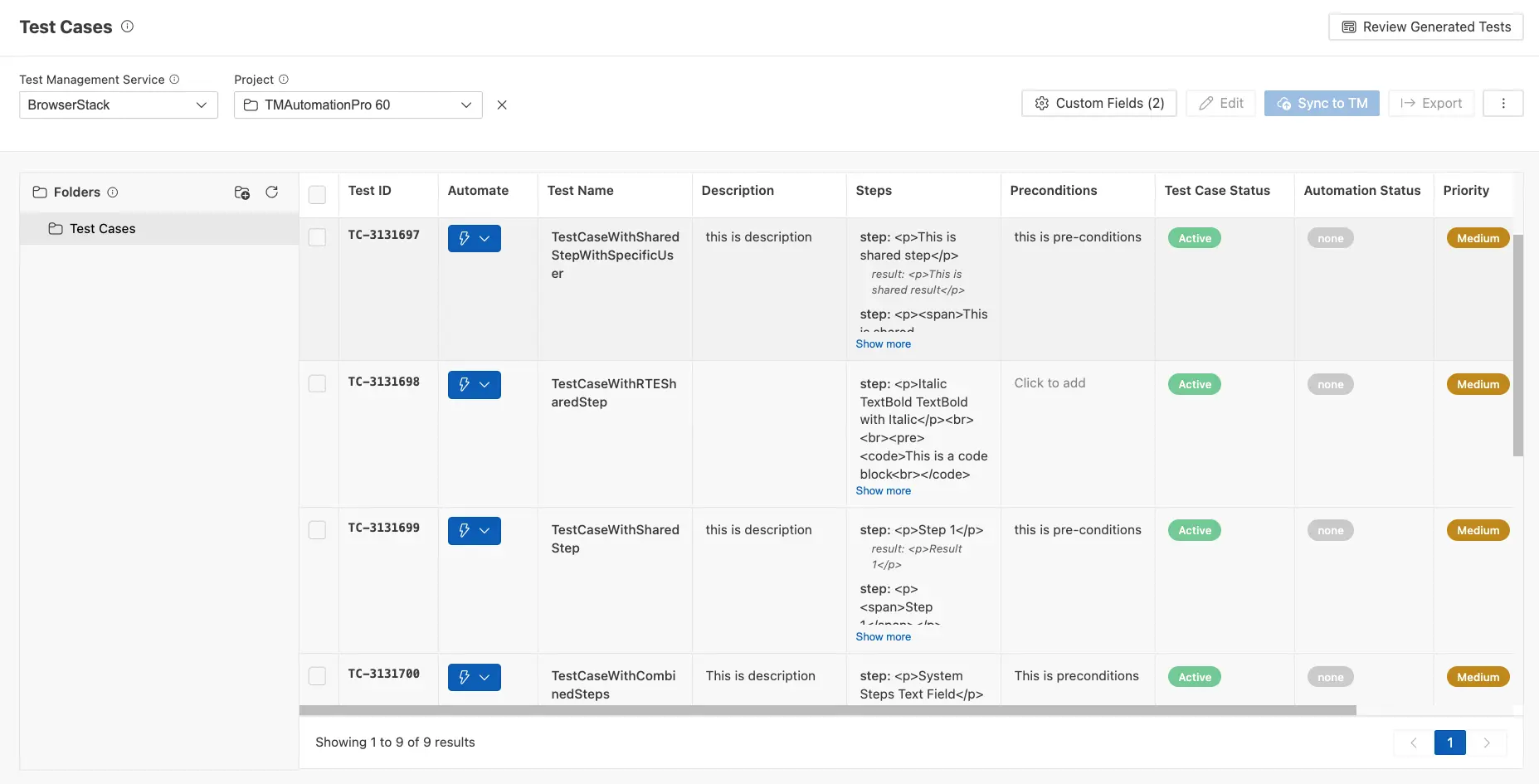

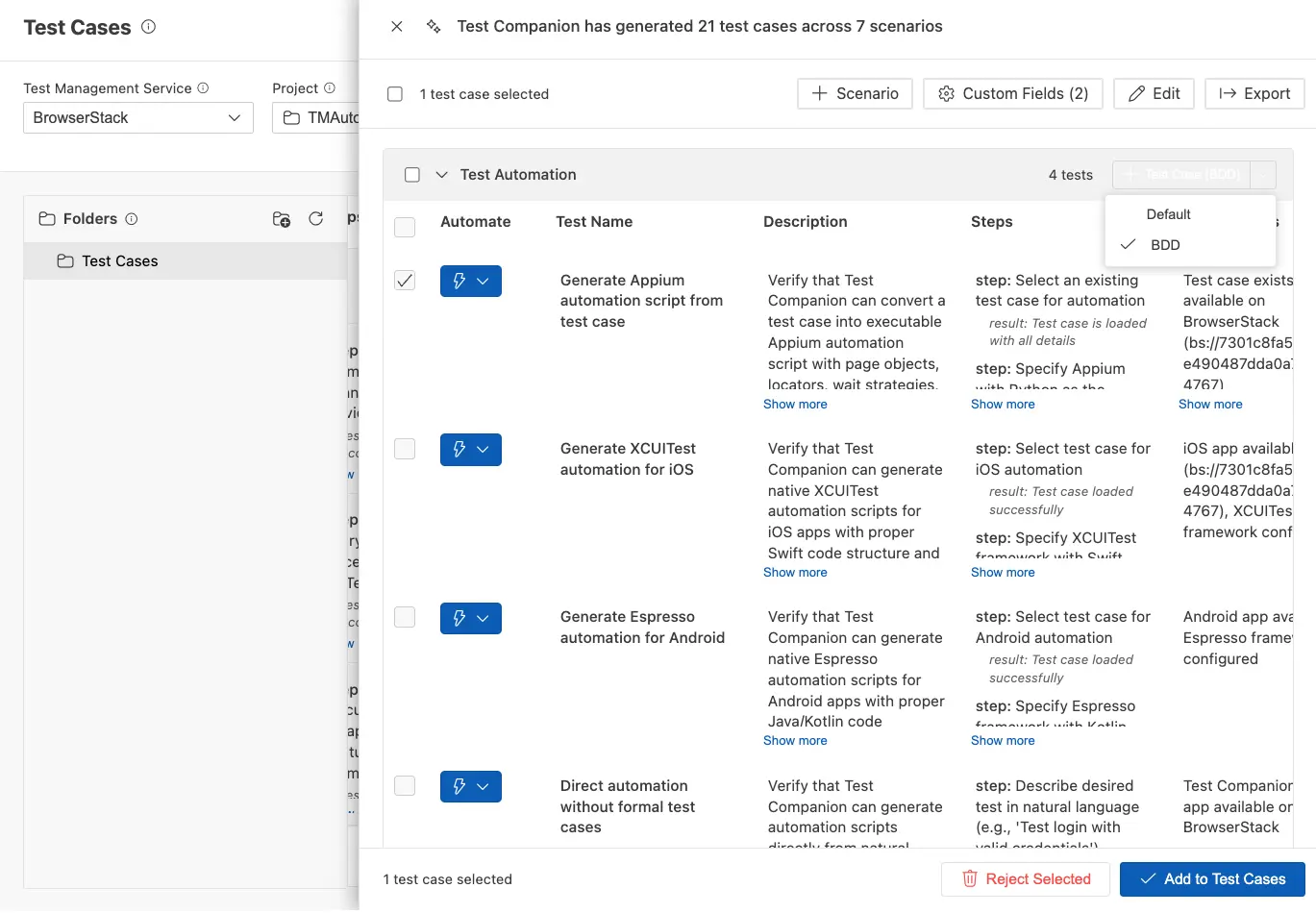

The Test Cases panel

After generation completes, the Test Cases panel opens on the right side of your IDE. Use this panel to review, manage, and execute your test cases. Every field in the panel is editable, so you can modify titles, steps, and expected results before committing them to your suite.

At the top of the panel, a summary banner shows how many test cases were generated and across how many scenarios (for example, “Test Companion has generated 24 test cases across 8 scenarios”).

How test cases are organized

Test cases are grouped by scenarios, which are logical categories the AI creates from your requirements or its exploration findings. For instance, generating test cases for an AI-powered testing tool might produce scenarios such as:

- Test Case Generation: covering the three generation methods themselves

- Test Automation: covering script generation across various frameworks

- Test Execution: covering the execution of tests in different environments

- Test Maintenance: covering locator fixes, timing issues, and platform differences

- Accessibility Testing: covering WCAG audits and platform-specific checks

- Workflow Integration: covering end-to-end QA workflows

- Error Handling: covering edge cases and failure scenarios

You can expand or collapse each scenario to focus on the test cases that are most relevant to you.
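You can think of the grouping as a dictionary keyed by scenario name. The Python sketch below assumes each generated test case is a plain dict with hypothetical `scenario` and `title` keys; it groups a few made-up checkout test cases and builds a line in the style of the summary banner described above. It illustrates the structure only and is not how Test Companion stores or counts test cases.

```python
from collections import defaultdict


def group_by_scenario(test_cases: list[dict]) -> dict[str, list[dict]]:
    """Group test cases under their scenario name, preserving first-seen order."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for tc in test_cases:
        groups[tc["scenario"]].append(tc)
    return dict(groups)


def summary_banner(groups: dict[str, list[dict]]) -> str:
    """Build a summary line in the style of the panel's banner."""
    total = sum(len(cases) for cases in groups.values())
    return f"Test Companion has generated {total} test cases across {len(groups)} scenarios"


# Hypothetical generated output; scenario names and titles are illustrative.
generated = [
    {"scenario": "Cart Management", "title": "Change an item's quantity in the cart"},
    {"scenario": "Payment", "title": "Pay with a credit card"},
    {"scenario": "Payment", "title": "Reject an invalid card number"},
]

groups = group_by_scenario(generated)
for scenario, cases in groups.items():
    print(f"{scenario} ({len(cases)})")
    for case in cases:
        print(f"  - {case['title']}")
print(summary_banner(groups))
```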

Components of each test case

Each test case displayed in the panel includes the following details:

| Column | Description |
|---|---|
| Automate | A button that sends the test case directly to the automation workflow, where Test Companion generates an executable script for it. |
| Test Name | A brief title that clearly describes what the test verifies, such as “Generate test cases from PRD requirements” or “Fix timing issues on slower devices.” |
| Description | A full explanation of what the test covers and validates. Click Show more to expand the full description. |
| Steps | A numbered sequence of actions, which may include mobile-specific interactions (for example, tap, swipe, type, or grant permission). Each action is followed by its expected result in smaller text underneath. Click Show more to view all steps. |
| Preconditions / Background | Conditions that must be met before the test starts. In Default format, this column is labeled “Preconditions” (for example, “Test Companion active with access to AI test generation capabilities”). In BDD format, it is labeled “Background” and contains the scenario’s shared setup context, typically written as Given steps. |

When test cases are expanded, you can also see:

- Test Case Status: Indicates whether the test case has been reviewed, shown with green or red indicators.

- Automation Status: Displays whether automation code has been generated for this test case (e.g., Not Automated).

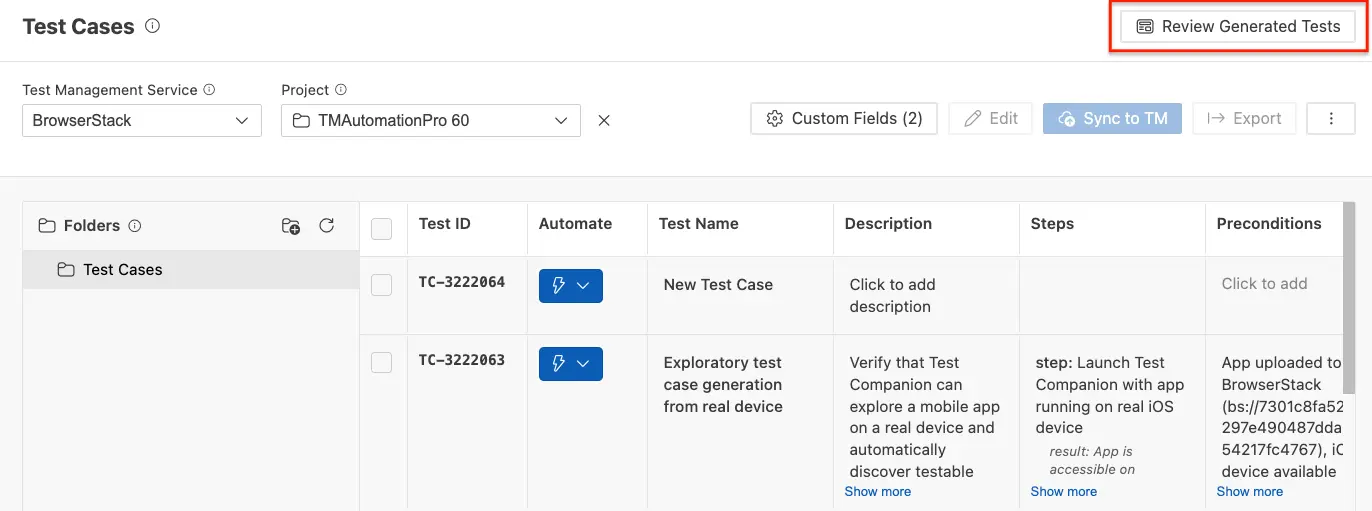

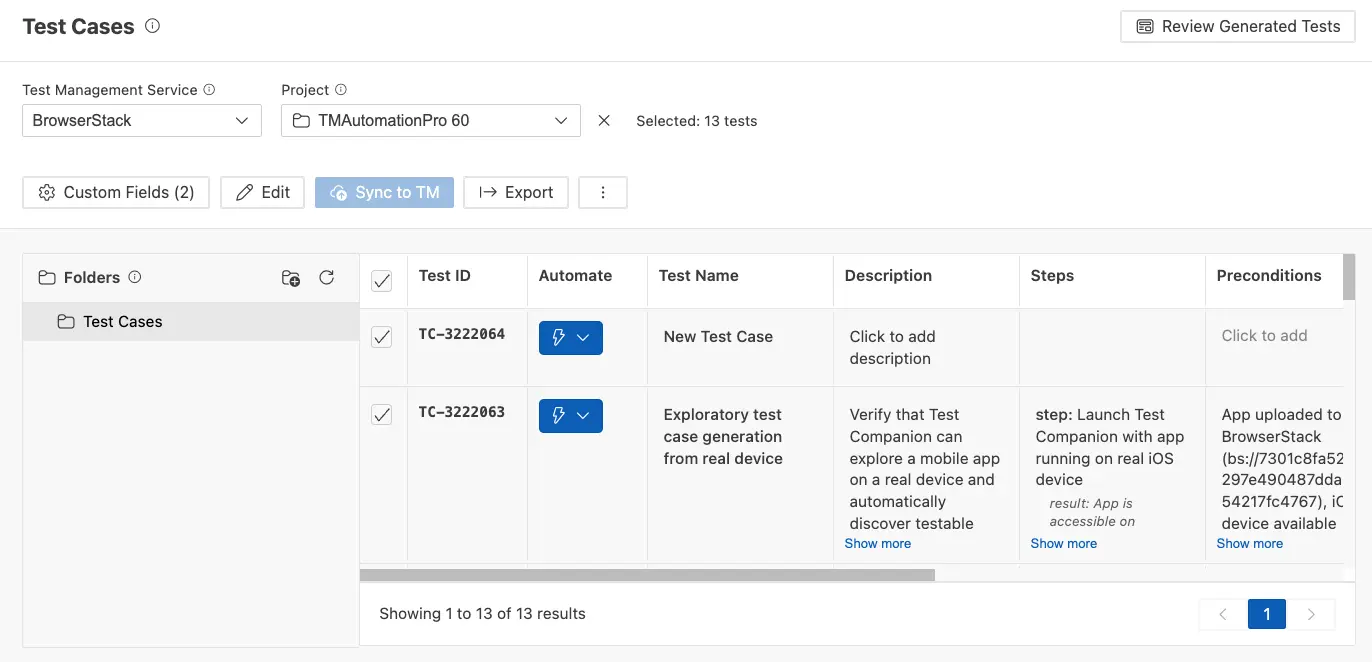

Review and manage test cases

Select test cases

You can select individual test cases by clicking the checkbox next to them, or you can use the scenario-level checkbox to select all test cases within a scenario. The selection counter at the top updates to show how many test cases are selected.

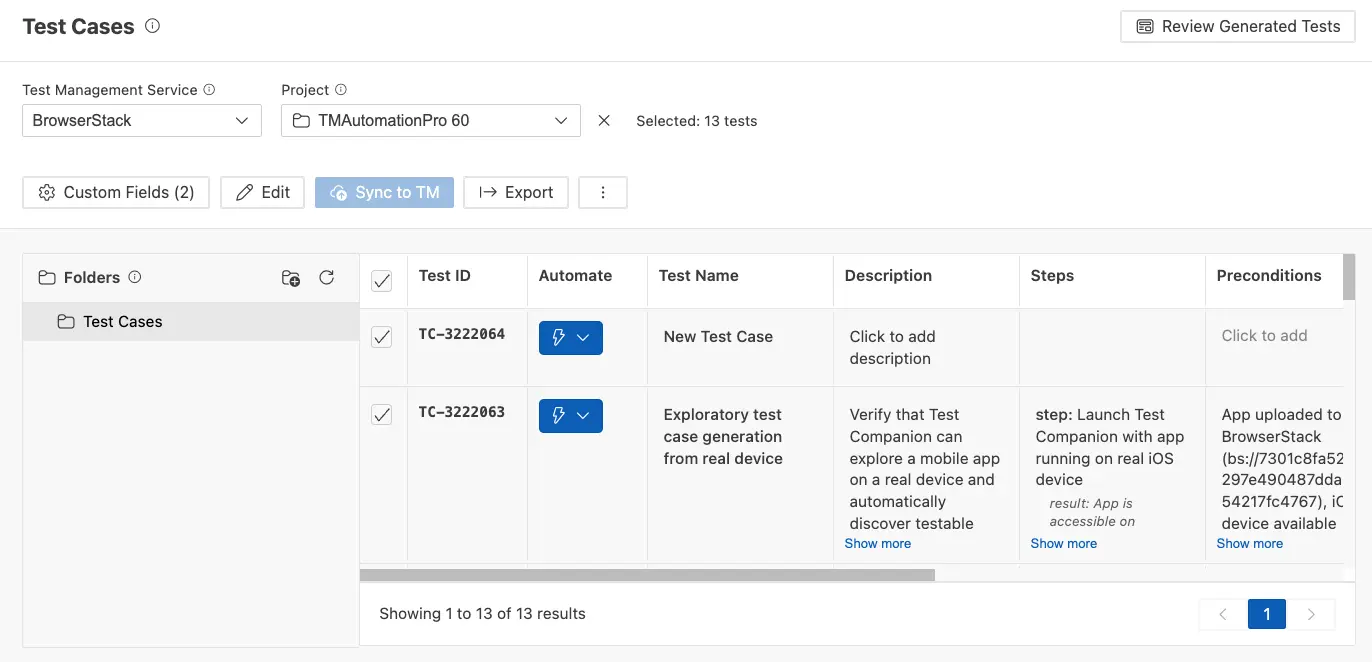

The Review Generated Tests button in the top-right corner allows you to switch between the review view (where you see the generated test cases grouped by scenario) and the synced view (where you see the test cases as they appear in Test Management with their IDs and folder structure).

Accept or reject test cases

After reviewing, you have two options for the selected test cases:

- Add to Test Cases: Click the Add to Test Cases button to accept the selected test cases and add them to your test case collection. If you have folders set up, the test cases are added to the active folder.

- Reject Selected: Click Reject Selected to remove any test cases you do not wish to include. This feature is helpful for filtering out low-value or duplicate test cases.

Edit test cases

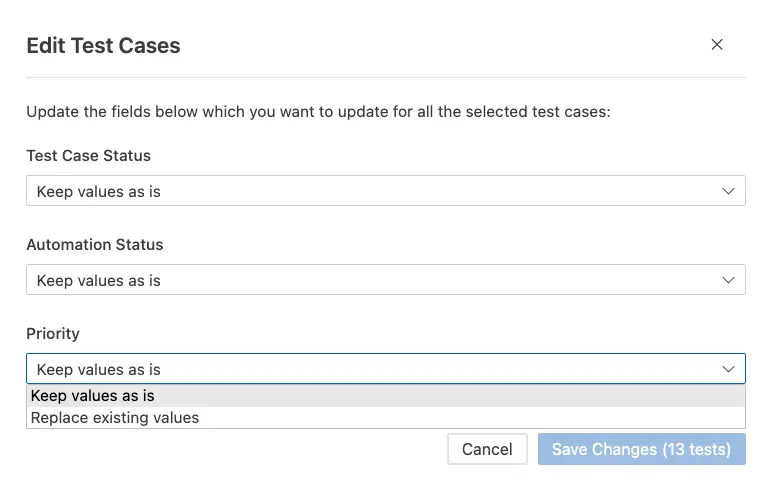

To edit test cases in bulk, follow these steps:

- Select the test cases you want to modify and click the Edit button in the toolbar. This opens the Edit Test Cases dialog.

- Update properties across all selected test cases at once:

  - Test Case Status: Set or change the review status.

  - Automation Status: Mark test cases as automated or not automated.

  - Priority: Assign a priority level.

- Click Save Changes (N tests) to apply your changes to all selected test cases.

You can also edit individual test cases inline by clicking directly on the test case name, description, steps, or preconditions fields in the panel.

Add a new scenario

Click the + Scenario button in the toolbar at the top of the Test Cases panel to create a new scenario grouping. You can then add test cases to it manually or move existing test cases into it.

New scenarios are created as empty sections (New Scenario 1, New Scenario 2, and so on) at the bottom of the panel. Each new scenario includes its own New Test Case rows and a + Test Case (BDD) button for adding BDD-format test cases.

Add new test cases manually

You can switch the format of individual scenarios between Default and BDD using the format badge, a dropdown at the top-right corner of each scenario section. Click the badge to toggle between formats.

At the bottom of each scenario, you will see a New Test Case row with placeholder text (Click to add description and Click to add BDD scenario). Click on these fields to manually create a test case that the AI may have missed.

Custom fields

Click the Custom Fields button in the toolbar to open the Custom Fields dialog. Here, you can add metadata to your test cases as name/value pairs.

Example:

- version:1.0.1

- Configuration:Windows, Firefox

You can add as many custom fields as needed. These fields will be included when you sync test cases to your test management system and are useful for tracking build versions, environment configurations, priority levels, and any other metadata relevant to your team.
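Custom fields are simple name/value pairs. If your team keeps them outside the panel in the `name:value` notation used in the example above (say, in a release checklist you copy from), a small helper such as the hypothetical `parse_custom_fields` below can check and normalize them before you enter them. It is a sketch only and is not part of Test Companion or any BrowserStack API.

```python
def parse_custom_fields(entries: list[str]) -> dict[str, str]:
    """Turn 'name:value' strings into a dict, splitting on the first colon only."""
    fields: dict[str, str] = {}
    for entry in entries:
        # partition() splits at the first ':' so values such as URLs stay intact.
        name, sep, value = entry.partition(":")
        if not sep or not name.strip():
            raise ValueError(f"expected 'name:value', got {entry!r}")
        fields[name.strip()] = value.strip()
    return fields


print(parse_custom_fields(["version:1.0.1", "Configuration:Windows, Firefox"]))
# → {'version': '1.0.1', 'Configuration': 'Windows, Firefox'}
```

Splitting on the first colon only means a value like `Windows, Firefox` (or one that itself contains a colon) is kept whole.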

Export test cases

Click the Export button in the toolbar to export the test cases. This is useful for sharing test cases with stakeholders who do not have access to Test Companion or your BrowserStack Test Management.

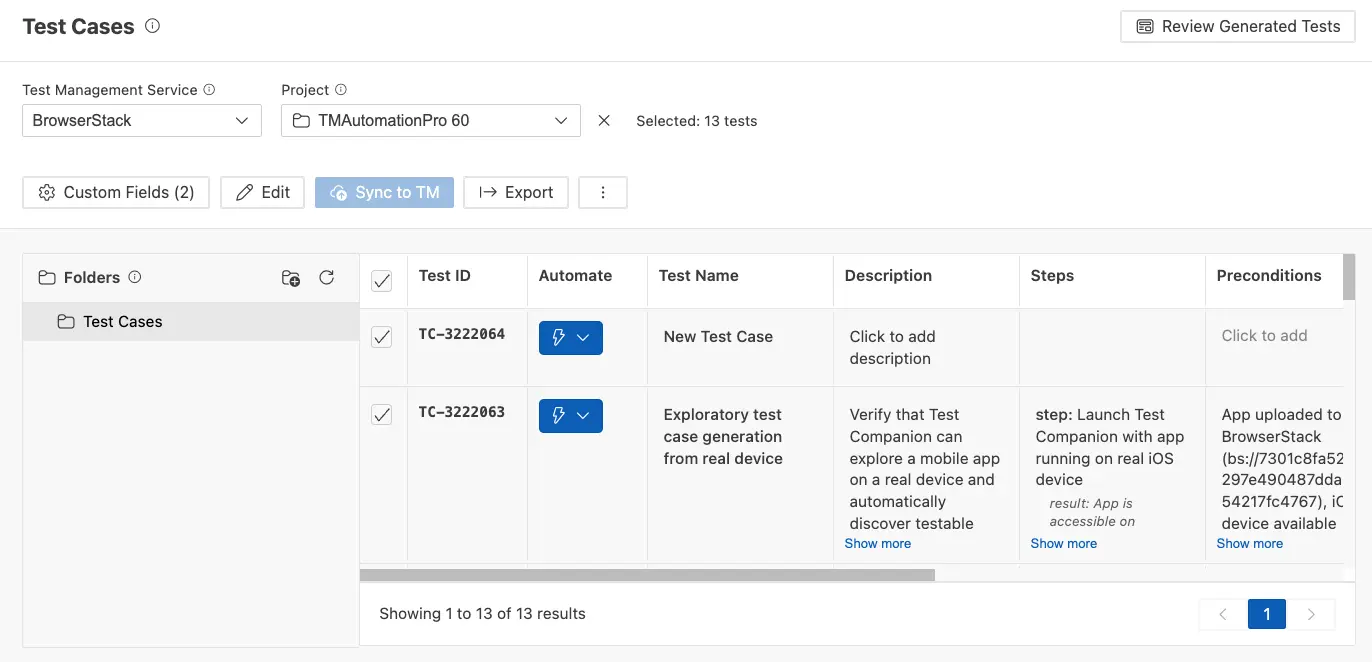

Sync to Test Management

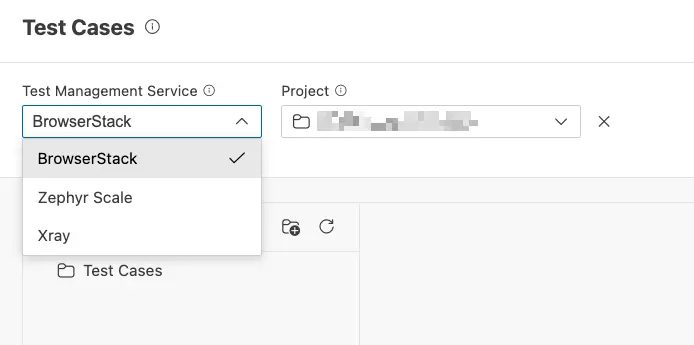

If you use BrowserStack Test Management, you can sync your generated test cases directly from the Test Cases panel.

- In the Test Cases panel toolbar, select BrowserStack from the Test Management Service dropdown.

- In the second dropdown, choose a project or create a new one.

- Organize your test cases into folders using the folder panel on the left side.

- Click the blue Sync to TM button to push the test cases to BrowserStack Test Management.

After syncing, each test case receives a Test ID that links it to the corresponding entry in your test management system. The synced view lists all your test cases with their Test IDs in a paginated table.

Best practices for enhanced test case generation

- Be specific about the scope. Instead of “Generate test cases for my app,” narrow the request to something like “Generate test cases for the payment screen” or “Generate test cases for the push notification opt-in flow.” Focused requests yield more detailed and relevant results.

- Provide context about your users. Describe who uses your app and how. For example: “Our primary users are delivery drivers who use the app one-handed while standing. Tests should consider larger tap targets and minimal typing.” This helps the generated scenarios mirror real-world usage.

- Combine context methods for the best results. Attach a PRD file, add your project folder with @ for code context, and describe what you need in plain language, all in the same prompt. The more context you provide, the more accurate and relevant the test cases will be.

- Use BDD format when your team works with BDD frameworks. If your automation framework expects Given/When/Then scenarios (as Cucumber, Behave, and SpecFlow do), select BDD in the Configure Test Cases panel so the generated test cases are in a format your framework can consume directly.

- Specify the platform when relevant. If you only need coverage for iOS, say so. If you need tests for both platforms, ask the AI to highlight any platform differences.

- Mention specific edge cases you are concerned about. The AI generates edge cases automatically, but if your team knows of specific pain points (for example, “the app crashes when switching between Hebrew and English”), mention them explicitly so they are covered.

- Review and reject before syncing. The AI may generate more test cases than you need. Use Reject Selected to remove low-value or duplicate test cases before syncing to your test management system, which keeps your test suite focused and manageable.

- Use custom fields for traceability. Add custom fields such as build version, sprint number, or feature flag name before syncing. This metadata carries over to your test management system, making it easy to filter and report on test cases later.
