Selenium with Lettuce

Your guide to run Selenium Webdriver tests with Lettuce on BrowserStack.

BrowserStack gives you instant access to our Selenium Grid of 3000+ real devices and desktop browsers. Running your Selenium tests with Lettuce on BrowserStack is simple. This guide will help you with:

- Running your first test

- Integrating your tests with BrowserStack

- Marking tests as passed or failed

- Debugging your app

Prerequisites

- You need your BrowserStack username and access key, which you can find in your account settings. If you have not created an account yet, you can sign up for a Free Trial or purchase a plan.

- Before you can start running your Selenium tests with Lettuce, ensure you have the Lettuce libraries installed:

```shell
pip install lettuce
```

Running your first test

- Edit or add capabilities in the W3C format using our W3C capability generator.

- Add the seleniumVersion capability in your test script and set its value to 4.0.0.
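For comparison with the legacy capabilities used later in parallel.json, here is a rough sketch of W3C-format capabilities for this sample with seleniumVersion set to 4.0.0. The vendor key bstack:options and the option names inside it (buildName, sessionName, debug, seleniumVersion) are assumptions modelled on typical output of the W3C capability generator, so confirm them there before use. The helper w3c_capabilities is hypothetical.

```python
# Hypothetical helper that builds W3C-style capabilities for one browser.
# ASSUMPTION: the "bstack:options" key and the option names inside it follow
# the W3C capability generator's output; verify them before relying on this.


def w3c_capabilities(browser_name, build_name, session_name, debug=True):
    """Return a W3C-format capabilities dict for one browser."""
    return {
        # Standard W3C capability: which browser to launch.
        "browserName": browser_name,
        # BrowserStack-specific settings grouped under one vendor-prefixed key.
        "bstack:options": {
            "buildName": build_name,
            "sessionName": session_name,
            "debug": "true" if debug else "false",
            # Selenium version requested on the remote grid.
            "seleniumVersion": "4.0.0",
        },
    }


caps = w3c_capabilities("chrome", "lettuce-browserstack", "parallel_test")
print(caps["bstack:options"]["seleniumVersion"])  # 4.0.0
```

Grouping the vendor settings under a single prefixed key is what keeps the dictionary valid W3C: only standard capability names may appear at the top level.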

To run Selenium tests with Lettuce on BrowserStack Automate, follow these steps:

- Clone the lettuce-browserstack repo on GitHub with BrowserStack's sample test, using the following command:

```shell
git clone https://github.com/browserstack/lettuce-browserstack.git
cd lettuce-browserstack
```

- Install the dependencies using the following command:

```shell
pip install -r requirements.txt
```

- Update the parallel.json file within the lettuce-browserstack/config/ directory with your BrowserStack credentials as shown below:

parallel.json

```json
{
  "server": "hub.browserstack.com",
  "user": "YOUR_USERNAME",
  "key": "YOUR_ACCESS_KEY",

  "capabilities": {
    "build": "lettuce-browserstack",
    "name": "parallel_test",
    "browserstack.debug": true
  },

  "environments": [
    { "browser": "chrome" },
    { "browser": "firefox" },
    { "browser": "safari" },
    { "browser": "internet explorer" }
  ]
}
```

- Now, execute your first test on BrowserStack using the following command:

```shell
paver run parallel
```

- View your test results on the BrowserStack Automate dashboard.
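Before running the command, it can help to confirm that parallel.json parses and that the placeholder credentials were replaced (unless you supply BROWSERSTACK_USERNAME and BROWSERSTACK_ACCESS_KEY as environment variables, which the integration module prefers). The following is a minimal standard-library sketch; check_config and the list of required keys are hypothetical, derived from the fields the sample file provides and the integration module reads.

```python
import json

# Top-level keys present in the sample parallel.json and read by the integration module.
REQUIRED_KEYS = ("server", "user", "key", "capabilities", "environments")
# Placeholder values from the sample that must be replaced with real credentials.
PLACEHOLDERS = ("YOUR_USERNAME", "YOUR_ACCESS_KEY")


def check_config(text):
    """Return a list of problems found in a parallel.json-style document."""
    config = json.loads(text)  # raises ValueError if the JSON is malformed
    problems = ["missing key: %s" % k for k in REQUIRED_KEYS if k not in config]
    for field in ("user", "key"):
        if config.get(field) in PLACEHOLDERS:
            problems.append("%s still holds a placeholder" % field)
    if not config.get("environments"):
        problems.append("no environments listed")
    return problems
```

From a Python shell in the repository root, check_config(open("config/parallel.json").read()) returns an empty list when the file looks ready.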

Details of your first test

When you run the paver run parallel command, the test is executed on the browsers specified in the parallel.json file.
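How paver run parallel distributes the work is defined in the repository's pavement file, which this guide does not show. Judging by the integration module, which reads CONFIG_FILE and TASK_ID from the environment, a run appears to start one Lettuce process per entry in environments. The sketch below illustrates that pattern only; task_environments and run_parallel are hypothetical names, and invoking lettuce with no arguments from the repository root is an assumption.

```python
import json
import os
import subprocess


def task_environments(config_file, config, base_env=None):
    """Build one environment mapping per entry in config["environments"]."""
    base = dict(os.environ if base_env is None else base_env)
    envs = []
    for task_id in range(len(config["environments"])):
        env = dict(base)
        env["CONFIG_FILE"] = config_file  # which JSON file the hooks load
        env["TASK_ID"] = str(task_id)     # which environment entry this process uses
        envs.append(env)
    return envs


def run_parallel(config_file="config/parallel.json"):
    """Start one lettuce process per environment; return 0 only if all pass."""
    with open(config_file) as f:
        config = json.load(f)
    # ASSUMPTION: running "lettuce" in the repo root picks up the features directory.
    procs = [subprocess.Popen(["lettuce"], env=env)
             for env in task_environments(config_file, config)]
    codes = [p.wait() for p in procs]  # wait for every process, not just the first failure
    return 0 if all(code == 0 for code in codes) else 1
```

Because each process reads its own TASK_ID, the four browsers in the sample run side by side as four independent BrowserStack sessions.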

The test searches for “BrowserStack” on Google and checks whether the title of the resulting page is “BrowserStack - Google Search”.

The steps.py file defines the actions to be performed for the steps in the sample test's feature file.

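```python
# Google Steps
import time

from lettuce import step, world
from lettuce_webdriver.util import AssertContextManager
from selenium.webdriver.common.by import By


@step('field with name "(.*?)" is given "(.*?)"')
def fill_in_textfield_by_class(step, field_name, value):
    # lettuce_webdriver helper that wraps errors raised inside this block for the step.
    with AssertContextManager(step):
        # find_element(By.NAME, ...) works on Selenium 3 and 4; the older
        # find_element_by_name helper was removed in later Selenium 4 releases.
        elem = world.browser.find_element(By.NAME, field_name)
        elem.send_keys(value)
        elem.submit()
        # Give the results page time to load.
        time.sleep(5)


@step(u'Then title becomes "([^"]*)"')
def then_title_becomes(step, result):
    title = world.browser.title
    assert title == result, "Got title %r, expected %r" % (title, result)
```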
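The first step pauses for a fixed five seconds after submitting the search. A polling wait usually finishes sooner and fails with a clearer message. Selenium provides this as WebDriverWait combined with expected_conditions.title_is; the sketch below is a dependency-free illustration of the same idea. wait_for_title is a hypothetical helper that relies only on the driver exposing a title attribute.

```python
import time


def wait_for_title(driver, expected, timeout=10.0, poll=0.5):
    """Poll driver.title until it equals expected; raise AssertionError on timeout."""
    deadline = time.time() + timeout
    while True:
        title = driver.title
        if title == expected:
            return title
        if time.time() >= deadline:
            raise AssertionError(
                "title was %r, expected %r after %.1fs" % (title, expected, timeout))
        time.sleep(poll)
```

In then_title_becomes, calling wait_for_title(world.browser, result) in place of reading world.browser.title once would tolerate a slow page load without the fixed sleep.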

The following single.feature file is the sample test file, where the test scenario is defined in BDD style.

```gherkin
# Google Feature
Feature: Google Search Functionality

  Scenario: can find search results
    When visit url "https://www.google.com/ncr"
    When field with name "q" is given "BrowserStack"
    Then title becomes "BrowserStack - Google Search"
```
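Lettuce links each sentence in the feature file to a step definition through the regular expression passed to @step, and passes the captured groups to the function after the step argument. The sketch below reproduces that matching with Python's re module so the patterns can be checked without a browser. Using re.search is a simplification for illustration, not a description of Lettuce's internals. Note that the visit url sentence matches neither pattern shown in steps.py above; its definition is not part of this excerpt.

```python
import re

# Patterns copied from the @step decorators in steps.py.
STEP_PATTERNS = {
    "fill_in_textfield_by_class": r'field with name "(.*?)" is given "(.*?)"',
    "then_title_becomes": r'Then title becomes "([^"]*)"',
}


def match_step(sentence):
    """Return (function name, captured groups) for the first matching pattern."""
    for name, pattern in STEP_PATTERNS.items():
        found = re.search(pattern, sentence)
        if found:
            return name, found.groups()
    return None, ()


print(match_step('When field with name "q" is given "BrowserStack"'))
# ('fill_in_textfield_by_class', ('q', 'BrowserStack'))
```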

Integrating your tests with BrowserStack

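Lettuce is integrated with BrowserStack through the following module, which uses Lettuce hooks to open a remote WebDriver session on BrowserStack before each feature and close it afterwards: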

```python
import json
import os

from lettuce import before, after, world
from selenium import webdriver
from browserstack.local import Local
# Importing this module registers lettuce_webdriver's built-in steps.
import lettuce_webdriver.webdriver

# Which config file to load and which "environments" entry this run uses.
CONFIG_FILE = os.environ.get('CONFIG_FILE', 'config/single.json')
TASK_ID = int(os.environ.get('TASK_ID', 0))

with open(CONFIG_FILE) as data_file:
    CONFIG = json.load(data_file)

bs_local = None

# Environment variables take precedence over the credentials in the config file.
BROWSERSTACK_USERNAME = os.environ.get('BROWSERSTACK_USERNAME', CONFIG['user'])
BROWSERSTACK_ACCESS_KEY = os.environ.get('BROWSERSTACK_ACCESS_KEY', CONFIG['key'])


def start_local():
    """Start BrowserStack Local before the tests run."""
    global bs_local
    bs_local = Local()
    bs_local_args = {"key": BROWSERSTACK_ACCESS_KEY, "forcelocal": "true"}
    bs_local.start(**bs_local_args)


def stop_local():
    """Stop BrowserStack Local after the tests finish."""
    global bs_local
    if bs_local is not None:
        bs_local.stop()


@before.each_feature
def setup_browser(feature):
    # Start from this task's environment and fill in the shared capabilities.
    desired_capabilities = dict(CONFIG['environments'][TASK_ID])
    for key in CONFIG["capabilities"]:
        if key not in desired_capabilities:
            desired_capabilities[key] = CONFIG["capabilities"][key]

    if 'BROWSERSTACK_APP_ID' in os.environ:
        desired_capabilities['app'] = os.environ['BROWSERSTACK_APP_ID']

    # Open a BrowserStack Local tunnel only when the capabilities request one.
    if desired_capabilities.get("browserstack.local"):
        start_local()

    # desired_capabilities is the Selenium 3 client keyword; Selenium 4.10+
    # clients take an options object instead.
    world.browser = webdriver.Remote(
        desired_capabilities=desired_capabilities,
        command_executor="https://%s:%s@%s/wd/hub" % (
            BROWSERSTACK_USERNAME, BROWSERSTACK_ACCESS_KEY, CONFIG['server'])
    )


@after.each_feature
def cleanup_browser(feature):
    world.browser.quit()
    stop_local()
```
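The core of setup_browser is merging the shared capabilities into the selected environment and building the hub URL. Pulled out as pure functions, that logic can be unit-tested without starting a browser. This is a sketch: merge_capabilities and hub_url are hypothetical names. The merge copies the environment entry rather than modifying CONFIG, and URL-quoting the credentials is an added precaution the sample itself does not take.

```python
try:
    from urllib.parse import quote  # Python 3
except ImportError:
    from urllib import quote  # Python 2


def merge_capabilities(config, task_id):
    """Per-environment values win; shared "capabilities" fill in the rest."""
    merged = dict(config["capabilities"])
    merged.update(config["environments"][task_id])
    return merged


def hub_url(user, key, server):
    """Build the Remote WebDriver endpoint with the credentials URL-quoted."""
    return "https://%s:%s@%s/wd/hub" % (quote(user, safe=""), quote(key, safe=""), server)
```

Starting from the shared capabilities and then applying the environment entry gives the same precedence as the loop in setup_browser, which only copies a shared key when the environment does not already define it.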

Mark tests as passed or failed

BrowserStack provides a comprehensive REST API to access and update information about your tests. The following sample snippet marks a test as passed or failed; set the status based on the assertions in your Lettuce test cases.

```python
import requests

# Replace <session-id> with the ID of the session to update.
requests.put(
    'https://api.browserstack.com/automate/sessions/<session-id>.json',
    auth=('YOUR_USERNAME', 'YOUR_ACCESS_KEY'),
    json={"status": "passed", "reason": ""},
)
```

The full reference is available in our REST API documentation.
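The session ID for the current test is available from the Remote WebDriver as world.browser.session_id. The following standard-library sketch builds a PUT request to the same sessions endpoint, sending the status as JSON with HTTP basic authentication. status_request and mark_session are hypothetical helper names. One place to call mark_session would be the cleanup_browser hook, before world.browser.quit().

```python
import base64
import json
from urllib.request import Request, urlopen

API = "https://api.browserstack.com/automate/sessions/%s.json"


def status_request(user, key, session_id, status, reason=""):
    """Build a PUT request that marks one session as passed or failed."""
    if status not in ("passed", "failed"):
        raise ValueError("status must be 'passed' or 'failed'")
    body = json.dumps({"status": status, "reason": reason}).encode("utf-8")
    token = base64.b64encode(("%s:%s" % (user, key)).encode("utf-8")).decode("ascii")
    return Request(API % session_id, data=body, method="PUT", headers={
        "Content-Type": "application/json",
        "Authorization": "Basic " + token,  # HTTP basic auth with username:access key
    })


def mark_session(user, key, session_id, status, reason=""):
    """Send the request and return the HTTP status code."""
    with urlopen(status_request(user, key, session_id, status, reason)) as resp:
        return resp.getcode()
```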

Debugging your app

BrowserStack provides a range of debugging tools to help you quickly identify and fix bugs you discover through your automated tests.

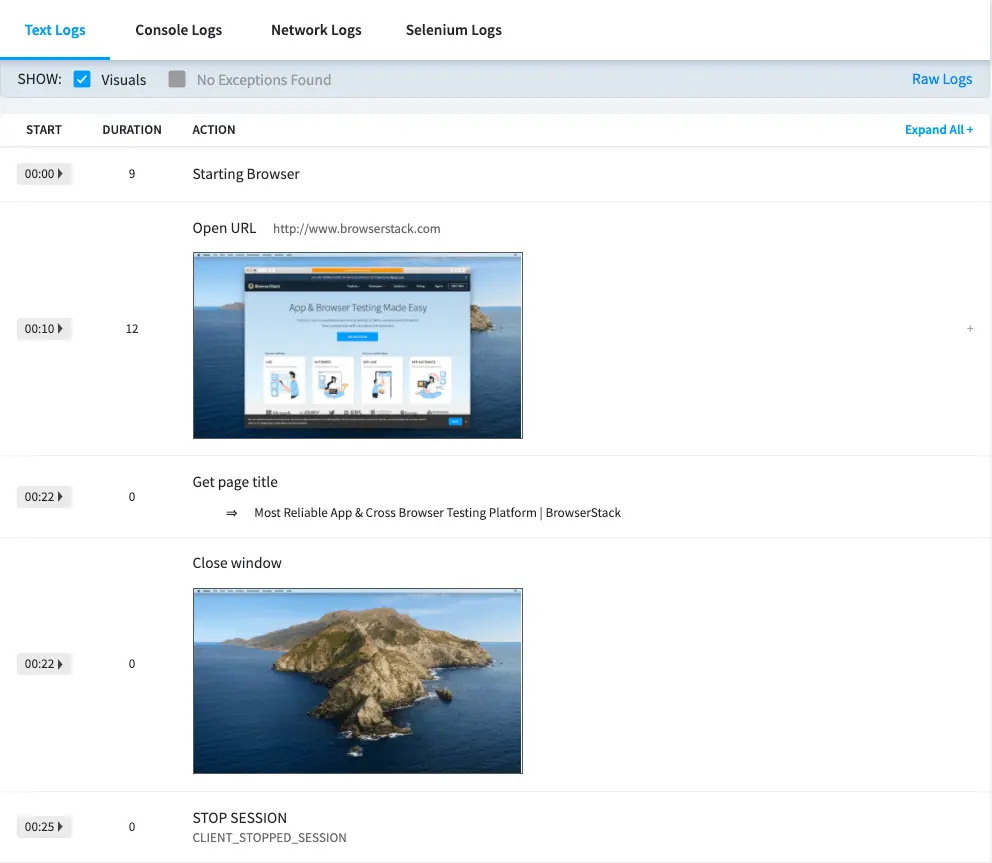

Text logs

Text Logs are a comprehensive record of your test. They are used to identify all the steps executed in the test and troubleshoot errors for the failed step. Text Logs are accessible from the Automate dashboard or via our REST API.

Visual logs

Visual Logs automatically capture the screenshots generated at every Selenium command run through your Lettuce tests. Visual Logs help you debug the exact step and page where a failure occurred. They also help identify layout or design issues with your web pages on different browsers.

Visual Logs are disabled by default. To enable them, set the browserstack.debug capability to true.

```python
capabilities = {
    "browserstack.debug": "true"
}
```


Video recording

Every test run on the BrowserStack Selenium grid is recorded exactly as it is executed on our remote machine. This feature is particularly helpful whenever a browser test fails. You can access videos from Automate Dashboard for each session. You can also download the videos from the Dashboard or retrieve a link to download the video using our REST API.

Video recording is enabled by default. To disable it, set the browserstack.video capability to false.

```python
capabilities = {
    "browserstack.video": "false"
}
```
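Video and text log links can also be retrieved through the REST API by fetching a session's details with a GET request to the same sessions/<session-id>.json endpoint used for marking status. The sketch below builds that request and parses the response. The automation_session wrapper and the video_url and logs field names are assumptions about the response shape, so check them against the REST API reference. session_details_request and debug_links are hypothetical helpers.

```python
import base64
import json
from urllib.request import Request

API = "https://api.browserstack.com/automate/sessions/%s.json"


def session_details_request(user, key, session_id):
    """Build an authenticated GET request for one session's details."""
    token = base64.b64encode(("%s:%s" % (user, key)).encode("utf-8")).decode("ascii")
    return Request(API % session_id, headers={"Authorization": "Basic " + token})


def debug_links(response_text):
    """Pull the video and text-log URLs out of a session-details response."""
    # ASSUMED shape: {"automation_session": {"video_url": ..., "logs": ...}}
    session = json.loads(response_text).get("automation_session", {})
    return {"video": session.get("video_url"), "logs": session.get("logs")}
```

For example, with urllib.request.urlopen(session_details_request(user, key, session_id)) as resp, debug_links(resp.read().decode("utf-8")) returns both links, or None for any field the response does not contain.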

In addition to these logs, BrowserStack also provides Raw Logs, Network Logs, Console Logs, Selenium Logs, Appium Logs, and Interactive sessions. Complete details on enabling each of these debugging options are available in our documentation.

Next steps

Once you have successfully run your first test on BrowserStack, you might want to do one of the following:

- Run multiple tests in parallel to speed up the build execution

- Migrate existing tests to BrowserStack

- Test on private websites that are hosted on your internal networks

- Select browsers and devices where you want to test

- Set up your CI/CD: Jenkins, Bamboo, TeamCity, Azure, CircleCI, Bitbucket, Travis CI, GitHub Actions
