Test Deduplication Agent

Automatically find and resolve duplicate test cases in your project using AI-powered exact and semantic matching.

Maintaining a lean test suite is often a manual, thankless task. BrowserStack Test Management simplifies this with a proprietary AI-powered de-duplication engine. Instead of just looking for exact text matches, the engine performs a deep semantic analysis of every test case in your project to identify redundancies that human eyes might miss.

Limited early access:

The AI-powered duplicate test-case identification agent is in Beta and is rolling out in stages. Currently, only a small set of user groups can access it. It will be enabled for your group ID as the rollout continues.

What problem does the de-duplication agent solve?

As projects grow through imports, team changes, and organic test creation, duplicate test cases accumulate. One tester writes "Verify login flow". Another creates "User sign-in check". Both test the same scenario, but neither knows the other exists.

These duplicates cause:

- Inflated test counts that misrepresent coverage

- Distorted reporting accuracy

- Multiple testers maintaining the same scenarios

- Longer review cycles due to noise

The Test Deduplication Agent scans your project using AI. It analyzes titles, descriptions, steps, and expected results to find overlapping test cases, then groups them for your review.

Availability

The Test Deduplication Agent is available on the Team Ultimate plan for projects with up to 22,000 test cases.

| Capability | Ultimate Plan | EFT-Ultimate |
|---|---|---|
| Max test cases per project | 22,000 | 5,000 |
| Deduplication runs | Unlimited | Up to 3 per group |
| Demo project access | Yes | Yes |

- Team and Team Pro plans can explore the feature through a demo project pre-loaded with sample duplicates. Click Review Duplicates on the Projects listing page to try it. All actions in the demo (merge, archive, discard) work like a live project.

- EFT-Ultimate users get up to 3 deduplication runs per group. Runs are shared across Quick Import and CSV/Feature file imports. If the plan expires and is later re-enabled, the run count resets to 3.

Projects exceeding 22,000 test cases are not eligible for automatic scanning. To use this feature, reach out to the support team or reduce the number of test cases in your project.
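The limits above can be read as a simple eligibility rule. The following is a minimal sketch only, not BrowserStack code: the plan keys, the `PlanLimits` class, and the `can_scan` function are hypothetical names chosen for illustration, and the numbers are copied from the availability table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PlanLimits:
    max_test_cases: int       # maximum test cases per project
    max_runs: Optional[int]   # None means unlimited runs


# Values copied from the availability table; the keys are illustrative labels.
PLAN_LIMITS = {
    "ultimate": PlanLimits(max_test_cases=22_000, max_runs=None),
    "eft-ultimate": PlanLimits(max_test_cases=5_000, max_runs=3),
}


def can_scan(plan: str, test_case_count: int, runs_used: int = 0) -> bool:
    """Return True if a project fits within the table's limits for its plan."""
    limits = PLAN_LIMITS.get(plan)
    if limits is None:
        # Plans outside the table (for example Team) only get the demo project.
        return False
    if test_case_count > limits.max_test_cases:
        return False
    return limits.max_runs is None or runs_used < limits.max_runs
```

For example, `can_scan("eft-ultimate", 4_000, runs_used=3)` returns `False` under these assumptions because the group has used all three runs.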

How the test deduplication agent works

The agent runs automatically. No configuration required. It triggers in three scenarios:

- Monthly maintenance: For Ultimate plan users, the agent runs a full scan once a month and delivers results incrementally.

- Migration: When you import test cases from another platform, the agent scans them to ensure the project starts clean.

- CSV imports: When you upload a CSV file to an existing project, the agent scans the new data against existing test cases.

Scans run on projects with up to 22,000 test cases.

The de-duplication agent does not just flag look-alikes. It understands the intent and logic of your test steps. The Agent follows a simple three-step process to clean up your project. You do not have to wait for the entire scan to finish to start seeing results.

- Intelligent scanning: The Agent performs a deep dive into your project, comparing every test case against all others. It looks for Exact Matches (identical text) and Semantic Matches (tests that use different words but describe the same action).

- Real-time results: As the Agent works through your test cases, findings appear on the Duplicates page immediately. You see a Scan in progress status while it works, but you can start reviewing results as soon as they appear.

- Take action: For every potential duplicate the AI finds, you decide the next step:

  - Merge: Combine them into one master test case.

  - Archive: Hide the redundant version while keeping its history.

  - Discard: Tell the AI that these tests are actually different, which helps it learn for next time.


Identify duplicate test cases

BrowserStack AI automatically scans your test cases for duplicates. You can review the suggestions at any time. Follow these steps to identify duplicate test cases.

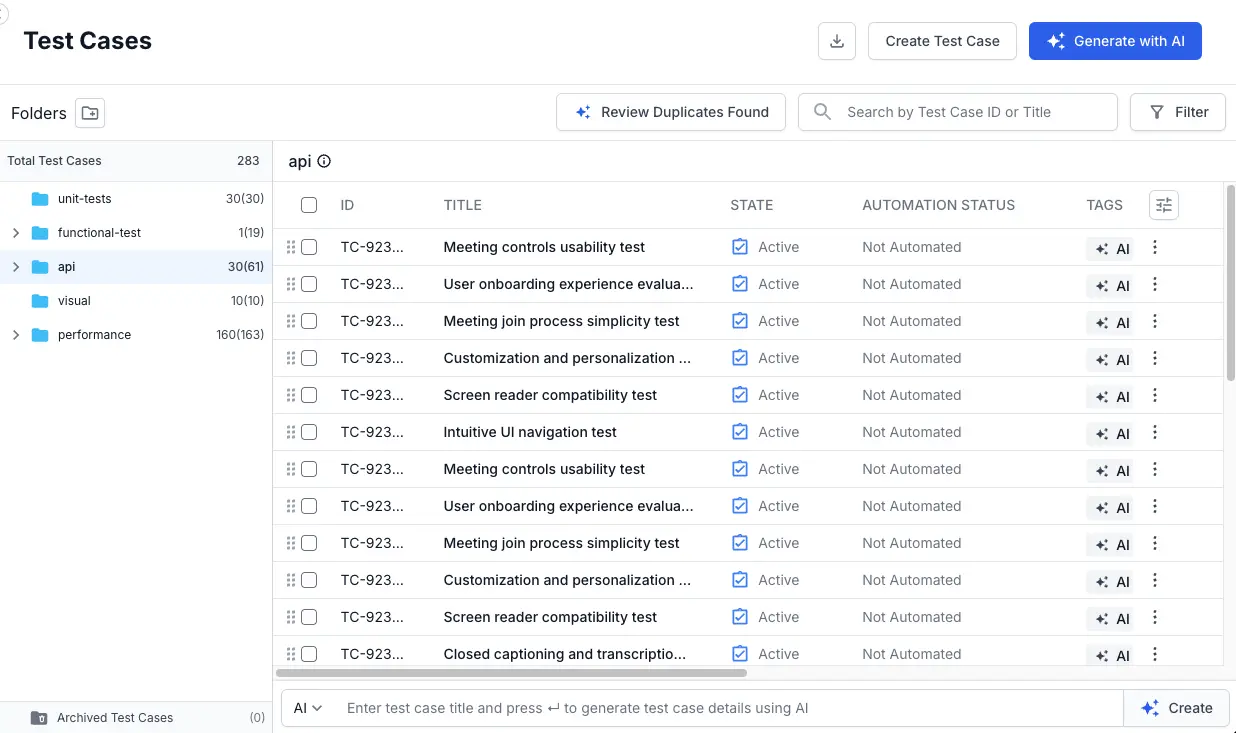

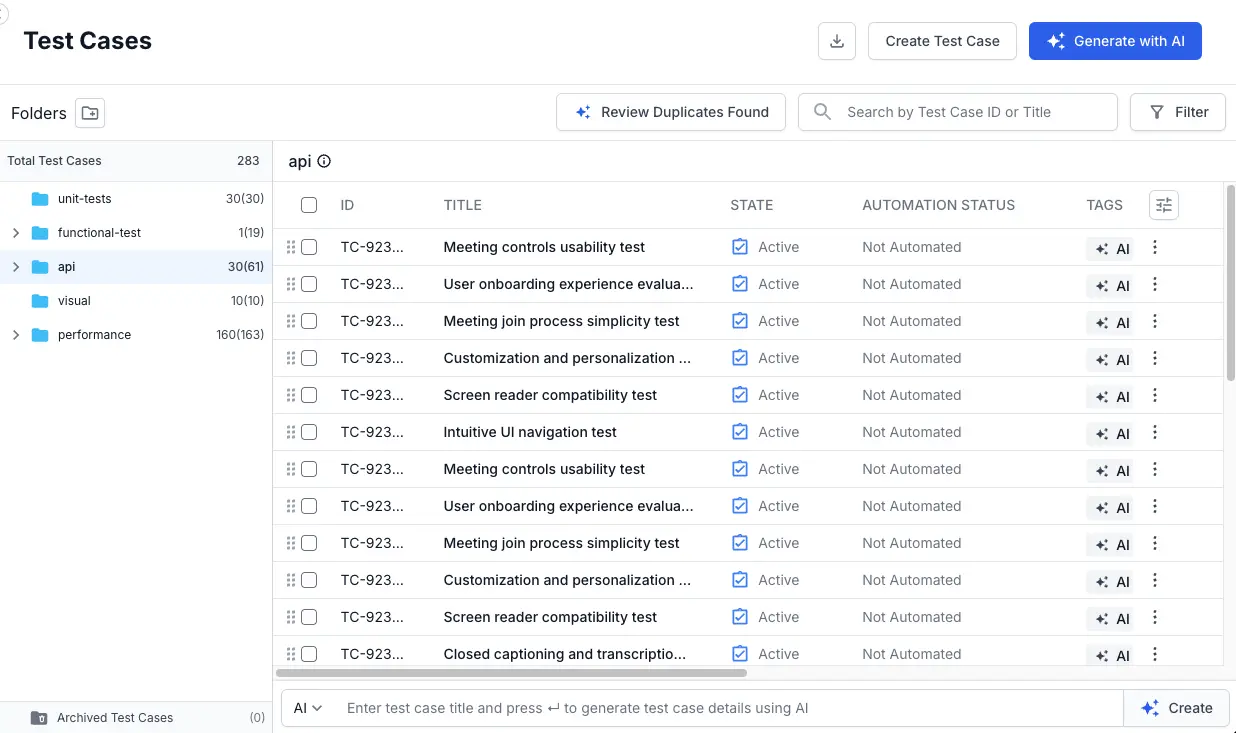

- Navigate to the test cases listing view from the left navigation bar.

- Select the desired folder and click the Review Duplicates Found button located above the test case list.

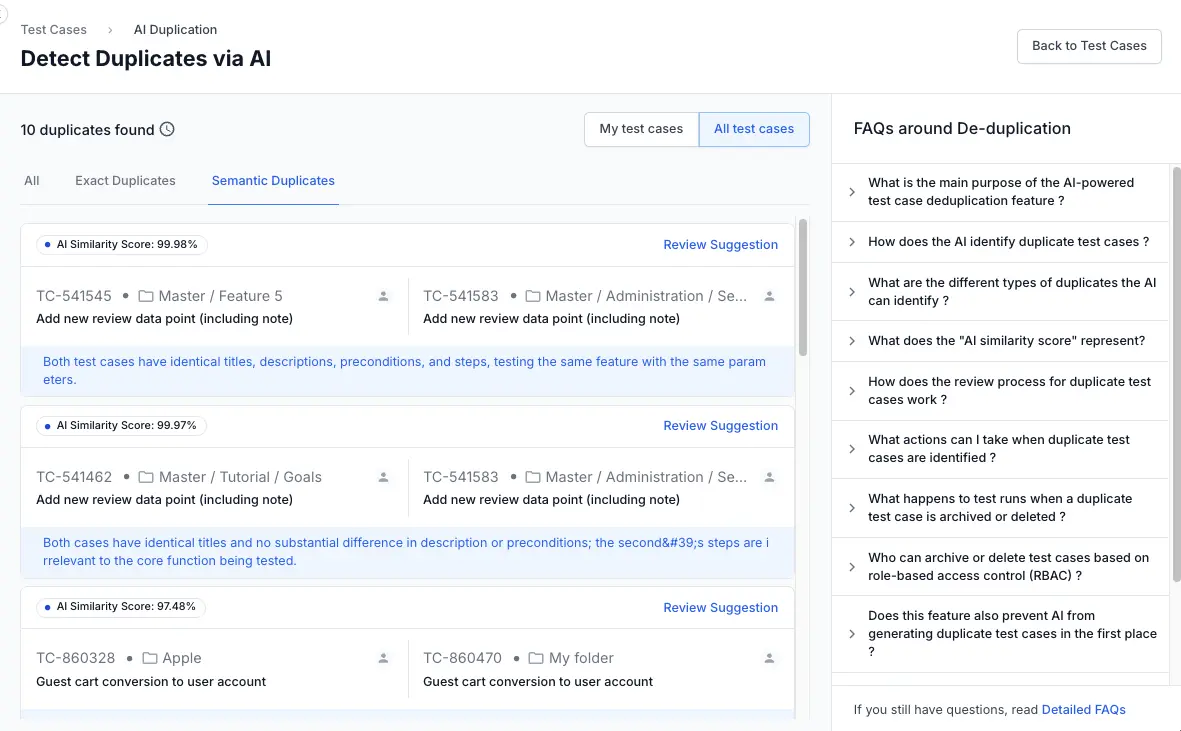

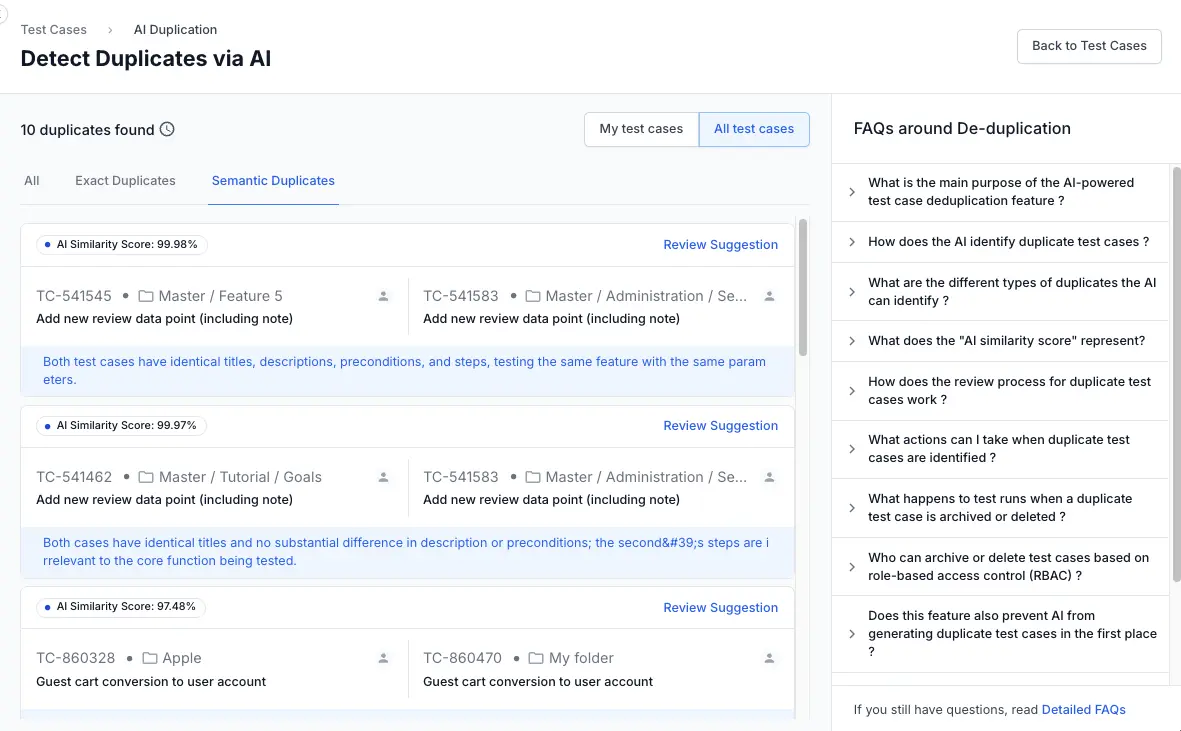

The system will display a list of potential duplicates, categorized as Exact Duplicates and Semantic Duplicates. Each pair includes an AI similarity score.

- Select one of the tabs to view test cases categorized as:

- All: View all the test cases.

- Exact Duplicates: Test cases that are completely identical.

- Semantic Duplicates: Test cases that use different wording but test the same functionality.

- My test cases: Shows test cases for which you are the owner.

- All test cases: Shows all test cases in the folder.

- Click Review Suggestion next to a pair to see a detailed comparison.

You have now successfully identified the duplicate test cases suggested by the AI. The next step is to decide what action to take.

Exact vs semantic duplicates

The agent categorizes each flagged pair into one of two types based on how the test cases overlap.

- Exact duplicates share identical titles, steps, and expected results. These are created when team members write the same test independently, or when imports bring in cases that already exist. Exact duplicates are safe to merge or archive with confidence.

| Field | Test Case 1 | Test Case 2 (Exact) |
|---|---|---|
| Title | User login | User login |
| Step | Enter username and password | Enter username and password |
| Expected result | User logs in successfully | User logs in successfully |

- Semantic duplicates use different wording but test the same functionality. The agent uses natural language understanding to catch these. Simple text matching would miss them. Semantic duplicates require more judgment. Review the AI explanation and side-by-side comparison carefully before deciding.

| Field | Test Case 1 | Test Case 2 (Semantic) |
|---|---|---|
| Title | User can reset password | Password reset functionality works |
| Step | Click Forgot Password, enter email, and submit | Select Forgot Password option, provide email, and click send |
| Expected result | User receives password reset email | User gets an email to reset password |
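To make the distinction concrete, here is a toy sketch that sorts a pair into exact, semantic, or distinct. It is not how the agent works: the engine is proprietary, and this sketch swaps in a plain token-overlap (Jaccard) score as a crude stand-in for natural language understanding. The `Case` class, the `classify_pair` function, and the 0.3 threshold are all assumptions made for illustration.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Case:
    title: str
    step: str
    expected: str


def _normalize(text: str) -> str:
    # Ignore case and collapse runs of whitespace before comparing.
    return " ".join(text.casefold().split())


def _tokens(case: Case) -> set[str]:
    text = " ".join((case.title, case.step, case.expected))
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def classify_pair(a: Case, b: Case, threshold: float = 0.3) -> str:
    """Return 'exact', 'semantic', or 'distinct' for a pair of test cases."""
    fields_a = tuple(_normalize(f) for f in (a.title, a.step, a.expected))
    fields_b = tuple(_normalize(f) for f in (b.title, b.step, b.expected))
    if fields_a == fields_b:
        return "exact"
    # Jaccard similarity: shared tokens divided by all tokens in either case.
    tokens_a, tokens_b = _tokens(a), _tokens(b)
    union = tokens_a | tokens_b
    score = len(tokens_a & tokens_b) / len(union) if union else 0.0
    return "semantic" if score >= threshold else "distinct"


# The two example pairs from the tables above, plus an unrelated case.
login_1 = Case("User login", "Enter username and password",
               "User logs in successfully")
login_2 = Case("User login", "Enter username and password",
               "User logs in successfully")
reset_1 = Case("User can reset password",
               "Click Forgot Password, enter email, and submit",
               "User receives password reset email")
reset_2 = Case("Password reset functionality works",
               "Select Forgot Password option, provide email, and click send",
               "User gets an email to reset password")
export_1 = Case("Export report as PDF", "Open Reports and click Export",
                "PDF file downloads")
```

Token overlap happens to catch the password reset pair, but it would miss paraphrases that share few words, which is why the semantic category calls for real language understanding rather than text matching.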

Find duplicates

After the de-duplication agent completes its scan, you can review the identified duplicates and decide how to handle each one. The agent groups test cases into Exact Duplicates and Semantic Duplicates, giving you full control over which ones to merge, archive, or discard.

The agent surfaces duplicates in three places across the Test Management interface. Each serves a different moment in your workflow.

Review Duplicates button

On the Test Cases page, a button in the toolbar reads Review {X} duplicates, where X is the total count of flagged pairs in the project. Click it to open the Duplicates Listing page.

This is your primary entry point for a focused cleanup session.

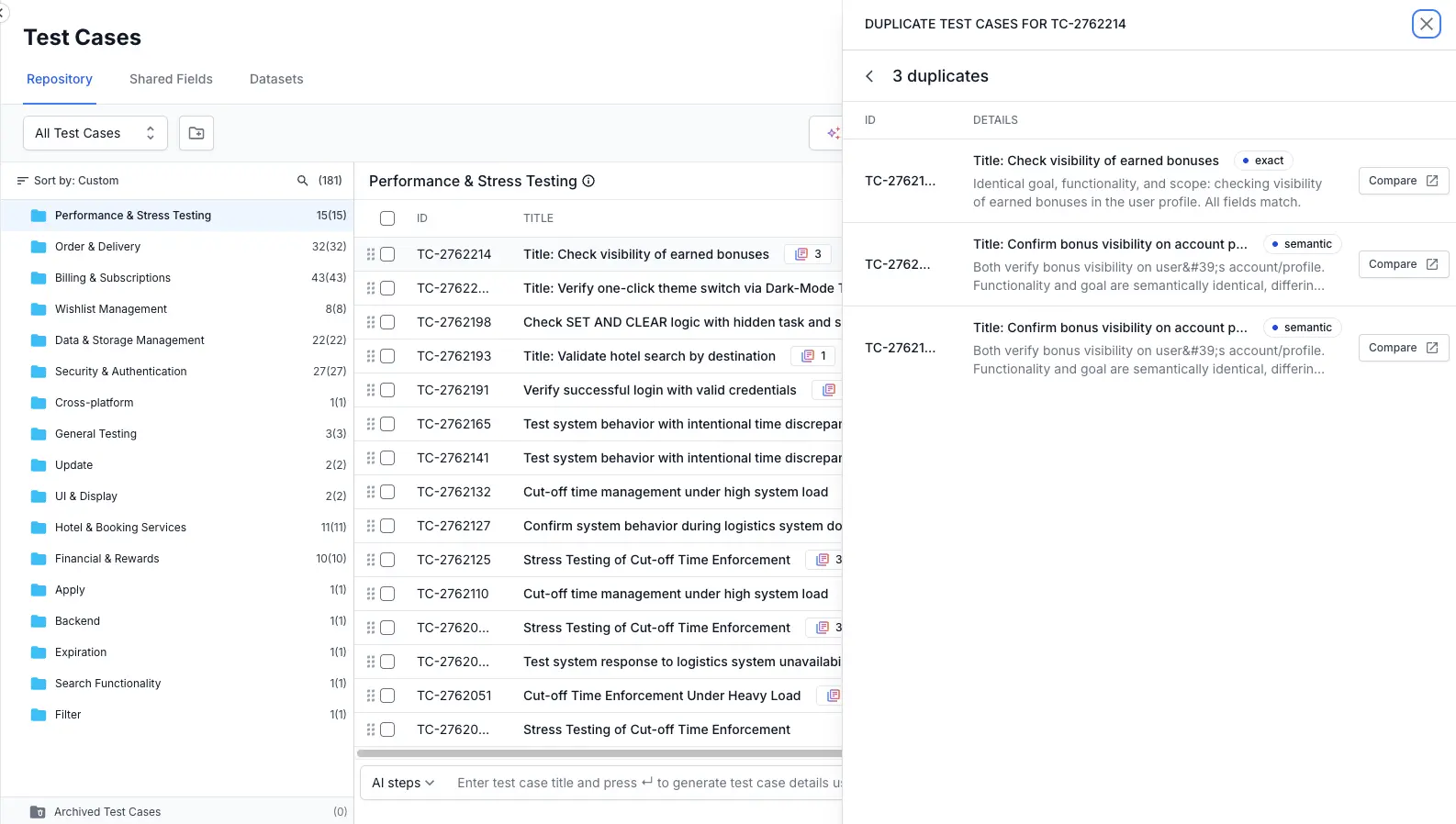

Duplicate count badge

In the test case list, each test case with duplicates shows a badge with the number of matches (for example, 3). Click it to open a side panel listing each match with its type, an AI explanation, and a Compare button.

Use this when you are already working on a specific test case and want to check duplicates without leaving context.

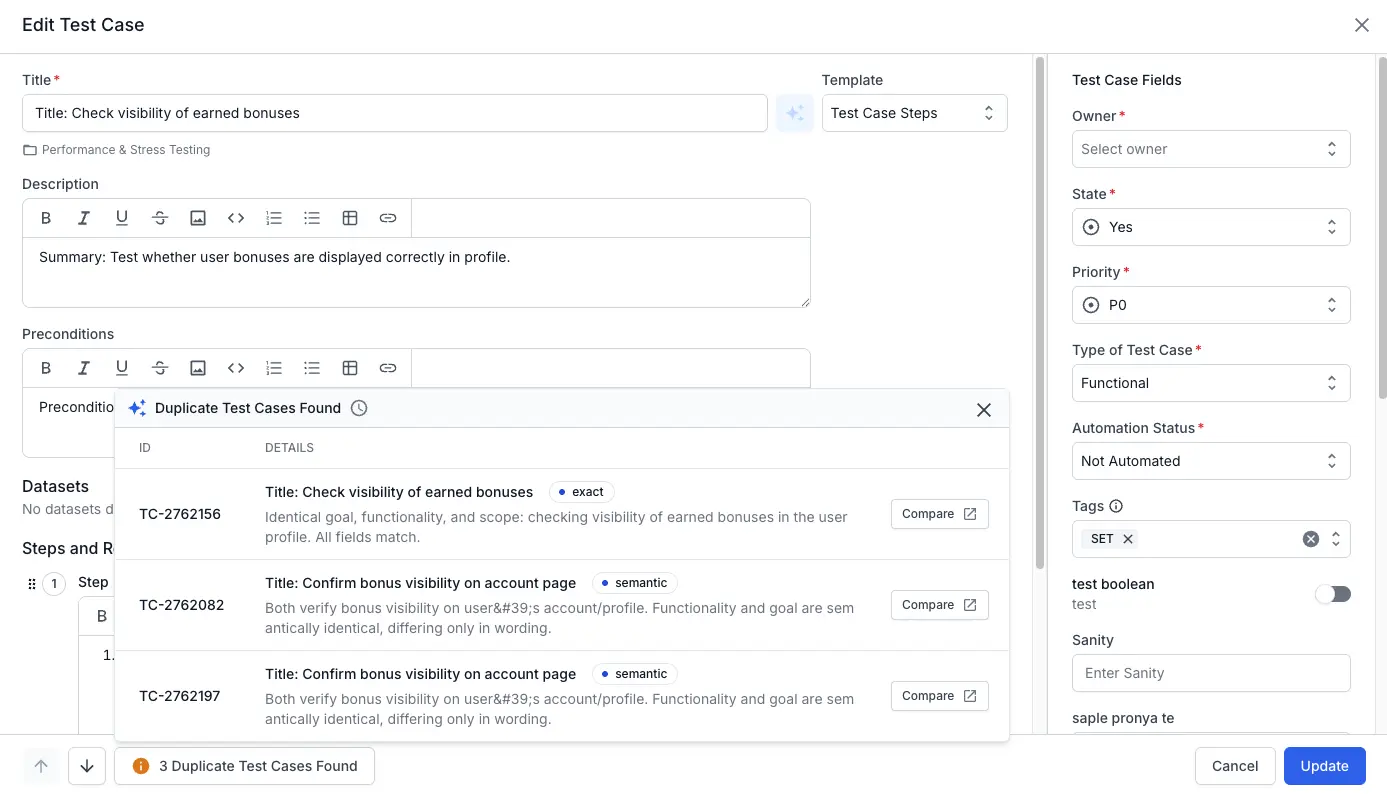

Duplicates indicator in the edit test case window

When editing a test case, a bar at the bottom reads {X} Duplicate Test Cases Found. Click it to expand an inline list with each duplicate, its type, AI explanation, and a Compare link.

This catches your attention during routine editing, so duplicates do not go unnoticed.

Resolve duplicates

After the agent flags duplicates, you review each pair and choose one of three actions. The Duplicates listing page shows all flagged pairs. Click View & Merge on any pair to open the AI Summary page, where you compare both test cases side by side and take action.

| Action | When to use | What happens |
|---|---|---|
| Merge | Both test cases are valid but redundant. You want one canonical version. | The source is archived. The target survives with a reference comment preserving the source’s attributes. Linked test executions on the source are deleted. |
| Archive | One test case is correct and the other adds nothing worth preserving. | The selected test case is archived and permanently removed after 90 days. Linked test executions are deleted. |
| Discard | The agent flagged a pair that is not a real duplicate. | The suggestion is removed. No test cases are affected. |

For step-by-step instructions on comparing pairs, merging, archiving, and discarding, see Review and resolve duplicate test cases.
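The effects in the table above can be modeled in a few lines. This is a sketch of the described outcomes only, under stated assumptions: the `Case` class, its fields, and the `merge`, `archive`, and `discard` functions are hypothetical and do not correspond to any BrowserStack API.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import List, Optional, Tuple

ARCHIVE_RETENTION_DAYS = 90  # retention period stated for the Archive action


@dataclass
class Case:
    case_id: str
    title: str
    archived: bool = False
    purge_on: Optional[date] = None
    executions: List[str] = field(default_factory=list)
    comments: List[str] = field(default_factory=list)


def archive(case: Case, today: date) -> None:
    """Archive a case, drop its linked executions, and schedule removal."""
    case.archived = True
    case.executions.clear()
    case.purge_on = today + timedelta(days=ARCHIVE_RETENTION_DAYS)


def merge(source: Case, target: Case) -> None:
    """Keep the target, leave a reference comment, and archive the source."""
    target.comments.append(f"Merged from {source.case_id}: {source.title}")
    source.archived = True
    source.executions.clear()
    # The table does not say whether the 90-day removal also applies to
    # merged sources, so purge_on is left unset here.


def discard(suggestions: List[Tuple[str, str]], pair: Tuple[str, str]) -> None:
    """Remove the suggestion only; no test case is touched."""
    suggestions.remove(pair)
```

Note that both merge and archive delete linked test executions on the affected test case, so it is worth checking execution history before acting on a pair.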

Deduplication vs. Duplicate ID checks

It is important to know that the Deduplication Agent is different from the standard checks that happen when you upload a file.

- Quick Import Check (Technical): This happens during your upload. It simply looks for matching test case IDs to prevent technical errors. It ensures your file doesn’t “break” the import process.

- Deduplication Agent (Intelligent): This happens after your tests are imported into the system. It uses AI to read the actual content (such as steps and descriptions) to find tests that do the same thing, even if they use completely different words or titles.

The quick import check only looks at Test Case IDs, whereas the Agent looks at intent.
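A small sketch shows why an ID check alone cannot find content duplicates. The `find_id_collisions` function is hypothetical, and comparing incoming IDs against the IDs already in the project is an assumption about what the check compares.

```python
from typing import Iterable, List


def find_id_collisions(incoming_ids: Iterable[str],
                       existing_ids: Iterable[str]) -> List[str]:
    """Return the incoming test case IDs that already exist, sorted."""
    return sorted(set(incoming_ids) & set(existing_ids))


# Two test cases that cover the same scenario under different IDs and titles.
existing = {"TC-1": "Verify login flow"}
incoming = {"TC-2": "User sign-in check"}

# The ID check reports nothing, because it never reads the titles or steps.
collisions = find_id_collisions(incoming, existing)
```

Here `collisions` is empty even though both test cases describe the same login scenario, which is the gap the content-based scan is meant to cover.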

Next steps

- Review and resolve duplicates: Compare flagged pairs side by side, merge them into one, archive redundant copies, or dismiss suggestions.

- Merge duplicate test cases: Learn how to consolidate two test cases into one, preserving necessary information while removing redundancy.

- Archive/discard duplicate test cases: If you prefer to keep one test case and remove the redundant one without combining their content, you can archive or discard it. Learn how to do that in this guide.
