Rules

Configure persistent instructions to automate preferences across global and workspace scopes, eliminating repetitive prompts and ensuring consistent testing workflows.

Rules let you provide Test Companion with persistent, system-level guidance that shapes how the AI behaves across every conversation. After a rule is created, it runs silently in the background. You do not need to repeat instructions or remind the AI about your preferences each time you start a new task.

Think of rules as a set of standing instructions. If you find yourself typing the same guidance into the chat repeatedly, that guidance belongs in a rule.

Example rules:

- Always use explicit waits.

- Follow the Page Object pattern.

- Never modify files in the config directory.

How rules work

A rule is a plain-text file (.md, .txt, or no extension) that contains instructions written in natural language. When Test Companion starts a conversation, it reads all active rule files and applies their contents as background context. The AI follows the instructions in your rules just as it would follow instructions you type into the chat — except rules are always active, so you never have to repeat them.
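As a rough illustration of the loading step described above, the following Python sketch gathers active rule files into one block of background context. It is not Test Companion's implementation. The function name `load_rules`, the `disabled` parameter, and the bracketed file-name header are hypothetical and exist only for this example.

```python
import tempfile
from pathlib import Path

# File types the documentation lists for rules: .md, .txt, or no extension.
RULE_SUFFIXES = {".md", ".txt", ""}


def load_rules(rules_dir, disabled=frozenset()):
    """Concatenate the text of every active rule file in rules_dir.

    Hypothetical helper: `disabled` holds file names to skip, standing in
    for rules that have been switched off.
    """
    sections = []
    for path in sorted(rules_dir.iterdir()):
        if not path.is_file() or path.suffix not in RULE_SUFFIXES:
            continue  # ignore folders and files that are not rule files
        if path.name in disabled:
            continue  # a disabled rule stays on disk but is not applied
        text = path.read_text(encoding="utf-8").strip()
        sections.append(f"[{path.name}]\n{text}")
    return "\n\n".join(sections)


# Usage: two rule files, one of them disabled.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = Path(tmp)
    (demo_dir / "waits.md").write_text("Always use explicit waits.\n", encoding="utf-8")
    (demo_dir / "page-objects").write_text("Follow the Page Object pattern.\n", encoding="utf-8")
    print(load_rules(demo_dir, disabled=frozenset({"page-objects"})))
```

The point of the sketch is that rule text is plain natural language that is read and passed along as-is. Nothing is parsed or compiled.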

Rules do not use a special syntax or markup language. Write them the way you would explain something to a colleague joining your team for the first time.

How to invoke a rule

There are two ways to invoke a custom rule:

- From the chat: Type / followed by the rule's file name in the Test Companion chat (for example, /regression-run.md).

- From the UI: Open the Manage Test Companion Rules & Workflows panel, switch to the Rules tab, and click the name of the rule you want to run.

Test Companion reads the file and follows the instructions step by step. Depending on your interaction mode, the AI either works through the steps autonomously (Default mode) or pauses at each step for your confirmation (Guided mode).

Global Rules vs. Workspace Rules

Test Companion provides two types of rules based on their scope:

- Global Rules

- Workspace Rules

The scope defines the projects to which each rule applies.

Global Rules

Global Rules apply to every project you open with Test Companion, regardless of which workspace is active. They are stored in a user-level location on your machine.

Use Global Rules for preferences that you want everywhere:

- Your personal coding style preferences (indentation, naming conventions, comment style).

- General quality standards (always include assertions, never use hard-coded waits).

- Language preferences (always write code comments in English).

- Framework defaults you use across all projects.

Workspace Rules

Workspace Rules apply only to the specific project (workspace) that is currently open. They are stored inside the project directory and can be shared with your team through version control.

Use Workspace Rules for project-specific guidance:

- The testing framework and language for this particular project.

- Project-specific folder structure and naming conventions.

- Integration details (staging URLs, API endpoints, BrowserStack capabilities).

- Files or directories the AI should never modify in this project.

- Team agreements on test design patterns for this codebase.

How these rules interact

When both Global and Workspace rules exist, Test Companion reads and applies both. If they contain conflicting instructions, Workspace rules take precedence over Global rules. This means you can maintain personal global preferences everywhere, while workspace-level rules add project-specific guidance and override a global preference wherever the two disagree.
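The precedence described above can be pictured with a deliberately simplified model. Real rules are free-form text and the AI resolves conflicts itself, so the keyed dictionaries and the `effective_rules` function below are assumptions made purely for illustration.

```python
def effective_rules(global_rules: dict[str, str], workspace_rules: dict[str, str]) -> dict[str, str]:
    """Merge two rule sets; on a conflicting key the workspace value wins."""
    merged = dict(global_rules)     # start from the personal, global preferences
    merged.update(workspace_rules)  # workspace entries override and extend them
    return merged


# Usage: the global preference says 4 spaces, the project says 2.
personal = {"indentation": "4 spaces", "comments": "English"}
project = {"indentation": "2 spaces", "framework": "Pytest"}
print(effective_rules(personal, project))
```

In this model the global `comments` preference survives because the workspace says nothing about it, while `indentation` takes the workspace value.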

Create a rule

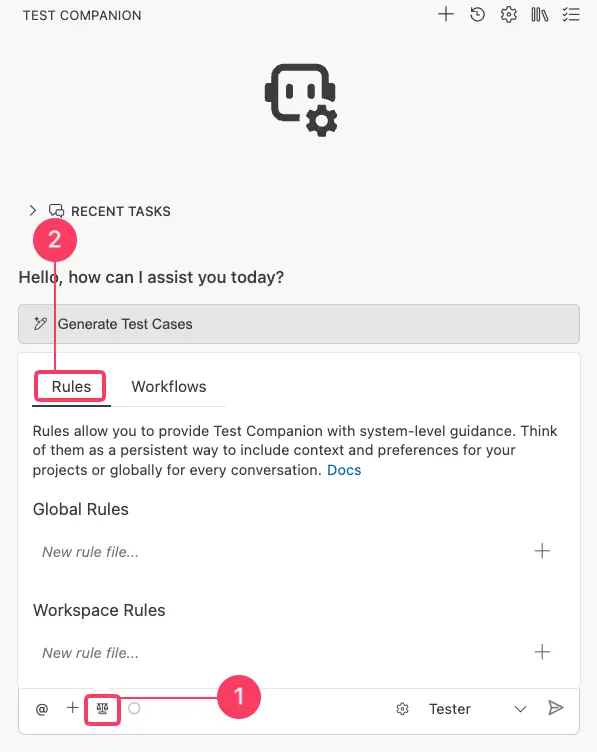

From the extension panel, follow these steps to create a new rule:

- Click the Manage Test Companion Rules & Workflows button (see annotation 1).

- Click the Rules tab (see annotation 2).

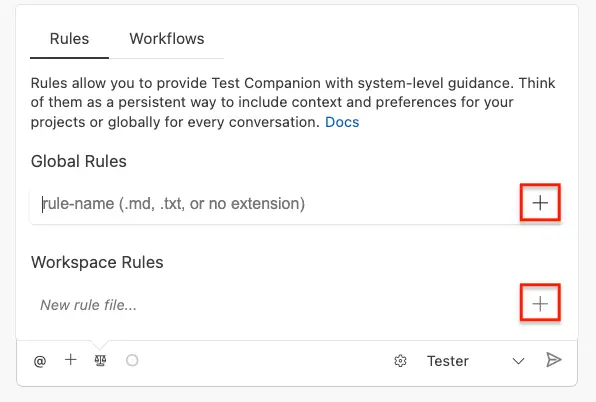

- Under either Global Rules or Workspace Rules, type a file name in the input field.

- Click the + button next to the input field.

- A new file opens in your editor. Write your rule instructions, then save the file.

File format

Rules are plain-text files. You can use Markdown formatting for readability, but it is not required. The AI reads the content regardless of formatting.

Write effective rules

The most useful rules are specific, actionable, and concise. Below are guidelines for writing rules that produce consistent results, followed by ready-to-use examples across common testing scenarios.

Be specific, not vague

The AI interprets rules literally. Vague rules lead to inconsistent behaviour.

| Weak rule | Strong rule |
|---|---|
| Write good tests | Every test method must contain at least one assertion. Use descriptive assertion messages that explain what the expected outcome is. |
| Use proper waits | Use WebDriverWait with ExpectedConditions for all element interactions. Never use Thread.sleep() or implicit waits. Set the default wait timeout to 10 seconds. |
| Follow our structure | Place test files in src/test/java/com/myapp/tests/. Place Page Object classes in src/test/java/com/myapp/pages/. Name test classes with the Test suffix (e.g., LoginPageTest). |
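To make the "proper waits" row concrete, here is a minimal sketch of what an explicit wait does: it polls a condition until it holds or a timeout expires, instead of pausing for a fixed time. The helper `wait_until` is hypothetical and uses only the standard library. In a real Selenium project you would use the library's own WebDriverWait rather than this function.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def wait_until(condition: Callable[[], T], timeout: float = 10.0, poll_interval: float = 0.5) -> T:
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass.

    The default timeout of 10 seconds mirrors the value in the strong rule.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result  # stop as soon as the condition holds
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Condition not met within {timeout} seconds")
        time.sleep(poll_interval)  # short polling pause, not a fixed wait


# Usage: a stand-in condition that becomes true on the third check.
checks = {"count": 0}

def element_is_ready():
    checks["count"] += 1
    return checks["count"] >= 3

wait_until(element_is_ready, timeout=2.0, poll_interval=0.01)
print(checks["count"])
```

The difference from a hard-coded sleep is that the wait ends the moment the condition is met, and fails loudly when it never is.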

Tell the AI what to do and what not to do

Including explicit prohibitions prevents common mistakes.

Always:

- Use @FindBy annotations for element locators in Page Objects

- Return the Page Object class from action methods (for method chaining)

- Add JavaDoc comments to public methods in Page Objects

Never:

- Use Thread.sleep() for waiting. Use explicit waits instead

- Hard-code test data inside test methods. Use data providers or external files

- Modify files in the /config or /infrastructure directories
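One item in the Always list, returning the Page Object from action methods so that calls can be chained, can be sketched as follows. The example is in Python rather than Java. `FakeDriver`, `LoginPage`, and the locator strings are hypothetical stand-ins, so the snippet runs without a browser.

```python
class FakeDriver:
    """Hypothetical stand-in for a browser driver; it only records actions."""

    def __init__(self):
        self.actions = []

    def type_into(self, locator, text):
        self.actions.append(("type", locator, text))

    def click(self, locator):
        self.actions.append(("click", locator))


class LoginPage:
    """Page Object whose action methods return the page to allow chaining."""

    USERNAME = "[data-testid='username']"
    PASSWORD = "[data-testid='password']"
    SUBMIT = "[data-testid='submit']"

    def __init__(self, driver):
        self._driver = driver

    def enter_username(self, username):
        self._driver.type_into(self.USERNAME, username)
        return self  # returning the page makes the next call chainable

    def enter_password(self, password):
        self._driver.type_into(self.PASSWORD, password)
        return self

    def submit(self):
        self._driver.click(self.SUBMIT)
        return self


# Usage: three actions expressed as one chained statement.
driver = FakeDriver()
LoginPage(driver).enter_username("demo-user").enter_password("demo-pass").submit()
print(driver.actions)
```

A rule that states this convention explicitly makes generated Page Objects consistent with ones your team writes by hand.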

Keep rules focused

A rule file should cover a single topic. If your instructions span multiple unrelated areas (coding standards, folder structure, CI pipeline, and API guidelines), split them into separate rule files. This makes rules easier to maintain, share, and debug when the AI behaves unexpectedly.

Example rules for common scenarios

Example 1: Selenium + Java coding standards

File name: selenium-java-standards

Selenium Java Test Standards

Framework

- Use Selenium WebDriver 4.x with Java 17

- Use TestNG as the test runner

- Use the Page Object Model (POM) pattern for all UI interactions

Assertions

- Use TestNG Assert for all assertions

- Every test method must contain at least one assertion

- Provide descriptive messages: Assert.assertEquals(actual, expected, "Login page title should match")

Waits

- Use WebDriverWait with ExpectedConditions for element interactions

- Default timeout: 10 seconds

- Never use Thread.sleep() under any circumstances

- Never use implicit waits

Element locators

- Prefer data-testid attributes over CSS classes or XPaths

- If data-testid is not available, use CSS selectors

- Use XPath only as a last resort and add a comment explaining why

Test independence

- Each test must be fully independent — no test may depend on the execution or outcome of another test

- Use @BeforeMethod for setup and @AfterMethod for teardown

- Do not share mutable state between test methods

Example 2: Pytest + Python project conventions

File name: pytest-conventions

Pytest Project Conventions

Language and framework

- Python 3.11 with Pytest

- Use pytest-html for reporting

- Use pytest-xdist for parallel execution

File and naming

- Test files: start the name with test_ and end it with .py (example: test_checkout.py)

- Test functions: start the name with test_ (example: test_login_with_valid_credentials_redirects_to_dashboard)

- Page Object classes: end the name with Page (example: CheckoutPage)

- Fixtures: define shared fixtures in conftest.py at the tests/ root

Folder structure

- tests/ — all test files

- tests/pages/ — Page Object classes

- tests/utils/ — helper functions and custom waits

- tests/data/ — test data files (JSON, CSV)

- tests/conftest.py — shared fixtures

Patterns

- Use @pytest.fixture for setup/teardown, not setup_method/teardown_method

- Use @pytest.mark.parametrize for data-driven tests

- Group related tests into classes when they share fixtures

- Use explicit waits with Selenium’s WebDriverWait, never time.sleep()

Example 3: Enforce accessibility testing standards

File name: accessibility-rules

Accessibility Testing Standards

When generating or reviewing test cases for any web page, always include the following accessibility checks:

- Verify that all images have meaningful alt text (empty alt="" is acceptable only for decorative images)

- Verify that all form inputs have associated labels

- Verify that the page can be navigated using only the keyboard (Tab, Shift+Tab, Enter, Space, Escape)

- Verify that focus indicators are visible on interactive elements

- Verify that colour contrast ratios meet WCAG 2.1 AA standards (minimum 4.5:1 for normal text, 3:1 for large text)

When writing automation code that checks accessibility:

- Use axe-core as the accessibility scanning engine

- Run accessibility scans after the page has fully loaded and all dynamic content has rendered

- Exclude known third-party widget violations (tag them as “third-party” for tracking, but do not fail the test)
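The contrast thresholds named in this example rule (4.5:1 for normal text, 3:1 for large text) come from the WCAG 2.1 contrast-ratio formula. The sketch below computes that ratio for two sRGB colours. The function names are chosen for this example. In practice a scanner such as axe-core performs this check for you.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.1 definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    """Relative luminance of an (r, g, b) colour with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background) -> float:
    """Contrast ratio (lighter + 0.05) / (darker + 0.05); ranges from 1 to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_wcag_aa(foreground, background, large_text: bool = False) -> bool:
    """Apply the AA thresholds: 4.5:1 for normal text, 3:1 for large text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


# Usage: mid-grey text on a white background.
white = (255, 255, 255)
print(meets_wcag_aa((118, 118, 118), white))  # grey #767676
print(meets_wcag_aa((119, 119, 119), white))  # grey #777777, slightly lighter
```

The two greys differ by a single step per channel yet fall on opposite sides of the 4.5:1 threshold for normal text. That is why the rule states the exact ratios instead of asking for "good contrast".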

Example 4: Protect sensitive areas of the codebase

File name: off-limits

Protected Files and Directories

Do not read, modify, create, or delete files in any of these locations:

- /infrastructure/ — managed by the DevOps team

- /config/production/ — production configuration (staging config is OK)

- /.github/workflows/ — CI/CD pipelines

- /database/migrations/ — database migrations require DBA review

If a task requires changes in any of these areas, stop and explain what changes would be needed so I can make them manually.
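If you want a second line of defence alongside this rule (for example, in a pre-commit check), the same list of locations can be expressed in code. The sketch below is one possible approach and is not a Test Companion feature. `PROTECTED_DIRS` and `is_protected` are hypothetical names, and paths are assumed to be relative to the project root.

```python
from pathlib import PurePosixPath

# The four locations listed in the example rule, relative to the project root.
PROTECTED_DIRS = (
    "infrastructure",
    "config/production",
    ".github/workflows",
    "database/migrations",
)


def is_protected(relative_path: str) -> bool:
    """Return True if the path is one of the protected directories or lies inside one.

    Assumes a normalised path without ".." segments.
    """
    parts = PurePosixPath(relative_path.lstrip("/")).parts
    for protected in PROTECTED_DIRS:
        protected_parts = PurePosixPath(protected).parts
        # Compare whole path segments so "infrastructure-docs" does not match.
        if parts[: len(protected_parts)] == protected_parts:
            return True
    return False


# Usage: production config is off limits, staging config is not.
print(is_protected("config/production/app.yaml"))
print(is_protected("config/staging/app.yaml"))
```

Comparing whole path segments rather than string prefixes avoids false matches on similarly named folders.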

Manage rules

View and edit rules

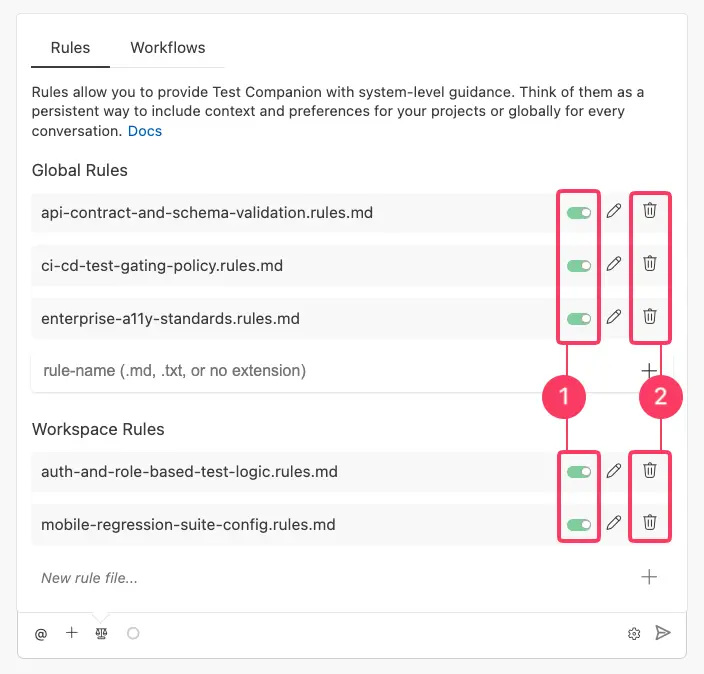

Click the Manage Test Companion Rules & Workflows button to see all your active rules. Click any rule file name to open it in the editor. Changes are applied the next time you start a new task.

Disable or delete a rule

To disable a rule, click the toggle switch (see annotation 1) next to the rule name in the Rules panel. A disabled rule remains in your list but is not applied to conversations. You can re-enable it at any time by clicking the toggle again.

To permanently delete a rule, click the delete icon (see annotation 2) next to the rule name. After you delete it, the rule file is removed and cannot be recovered.

Rules vs. System Instructions

Both Rules and System Instructions provide background context to the AI, but they serve different purposes:

| | Rules | System Instructions |
|---|---|---|
| Where defined | Separate files managed from the Rules panel | A single text field in Settings → Chat Settings |
| Scope | Global (all projects) or Workspace (one project) | Applies to all conversations in the extension |
| Best for | Specific, actionable instructions (coding standards, naming conventions, prohibitions) | High-level environment description (framework, language, folder structure) |

For best results, use System Instructions to describe your environment (what you are working with) and Rules to describe your standards (how you want things done). The AI combines both when generating output.
