Fix failed tests

Analyze test failures and get suggested code fixes without leaving the IDE.

Test Companion connects to BrowserStack Test Reporting & Analytics (TRA) and pulls failure data directly into your IDE. It analyzes the error, identifies the likely cause, and suggests a code fix. You can start the analysis from the Test Reporting panel or directly from the Test Companion chat box. The chat box accepts a Test Reporting session URL or ID, an error message or stack trace, a plain-language description of the failure, or any combination of these.

Fix from the Test Reporting panel

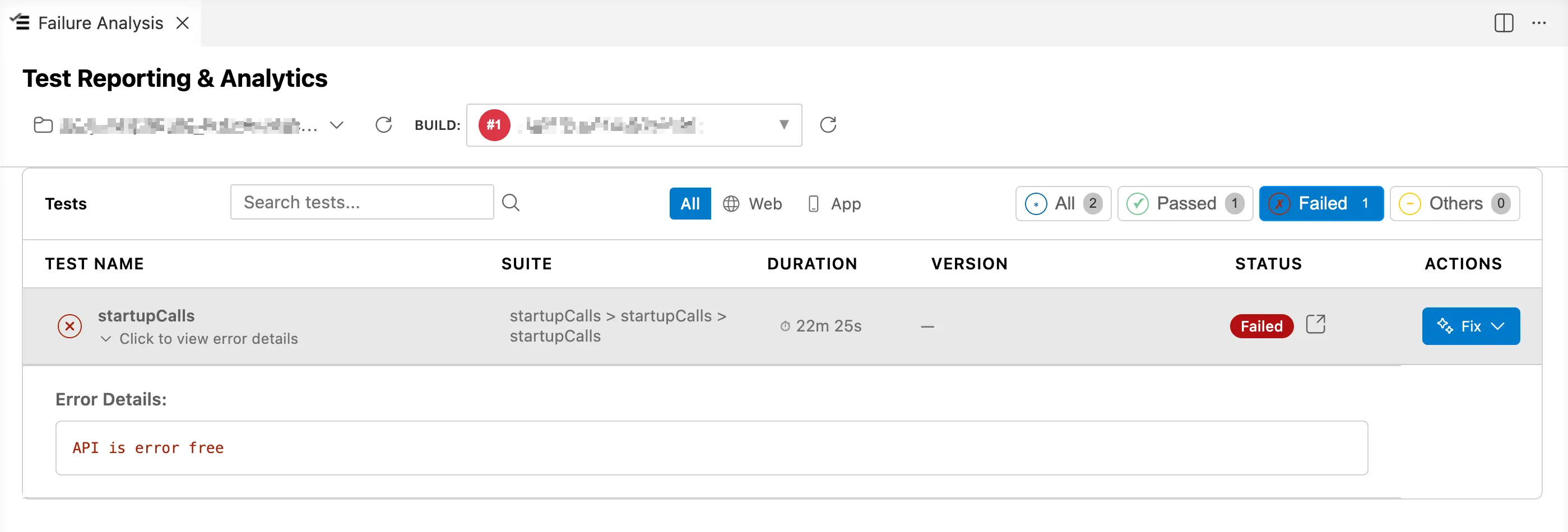

Use this method if you have a BrowserStack Test Reporting and Analytics subscription. The process starts in the Failure Analysis panel in the IDE.

-

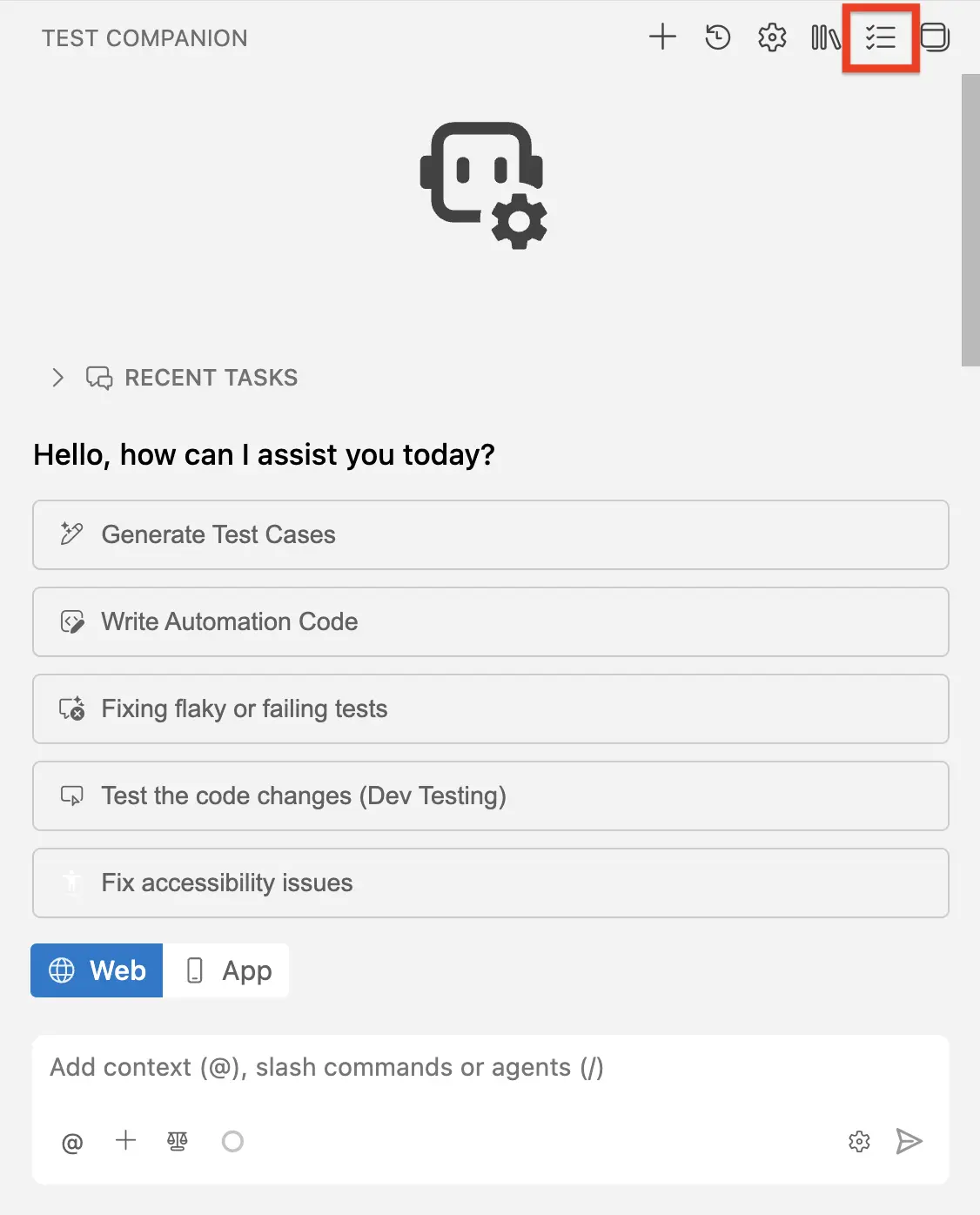

Click the Failure Analysis icon in the Test Companion panel.

- From the project dropdown, select the project that contains your build.

- From the Build dropdown, select the build run that contains your failures.

- Filter the test list by clicking Failed to show only failed tests.

- Find the test you want to fix, then select the Click to view error details option to expand the error message.

- In the Actions column, click Fix, then select Test Companion. This option sends the failure context to the Test Companion chat.

The system fetches the Root Cause Analysis (RCA) for the selected test. A loading indicator shows Fetching RCA while the analysis is retrieved.

When the RCA is ready, Test Companion switches to the chat panel and pre-populates the chat input with a structured prompt that includes the test name, suite path, error logs, failure type classification, root cause description, log evidence, evidence strength rating, impact assessment, and a suggested fix. Review the pre-populated prompt and press Enter to start the analysis.
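One way to picture the pre-populated prompt is as a record with one field per item listed above. The following is a minimal sketch only: the `RcaPrompt` type, its field names, and the `renderPrompt` helper are hypothetical and are not part of Test Companion. The actual prompt format may differ.

```typescript
// Hypothetical record mirroring the items listed above.
// Field names are illustrative assumptions, not Test Companion's schema.
interface RcaPrompt {
  testName: string;
  suitePath: string;
  errorLogs: string;
  failureType: string;
  rootCause: string;
  logEvidence: string;
  evidenceStrength: string;
  impact: string;
  suggestedFix: string;
}

// Renders the record as labeled lines, one field per line.
function renderPrompt(rca: RcaPrompt): string {
  const lines: [string, string][] = [
    ["Test name", rca.testName],
    ["Suite path", rca.suitePath],
    ["Error logs", rca.errorLogs],
    ["Failure type", rca.failureType],
    ["Root cause", rca.rootCause],
    ["Log evidence", rca.logEvidence],
    ["Evidence strength", rca.evidenceStrength],
    ["Impact", rca.impact],
    ["Suggested fix", rca.suggestedFix],
  ];
  return lines.map(([label, value]) => `${label}: ${value}`).join("\n");
}
```

Reading the prompt field by field before you press Enter makes it easier to spot a misclassified failure type or a weak evidence rating.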

Test Companion reads the RCA, identifies the likely cause, and suggests a code fix in the chat. Review the suggestion, apply it, and run the build again.

Fix from the Automate dashboard

Use this method if you are reviewing build results in the BrowserStack Automate dashboard and want to fix a failure without switching context manually. This method also requires a BrowserStack Test Reporting and Analytics subscription.

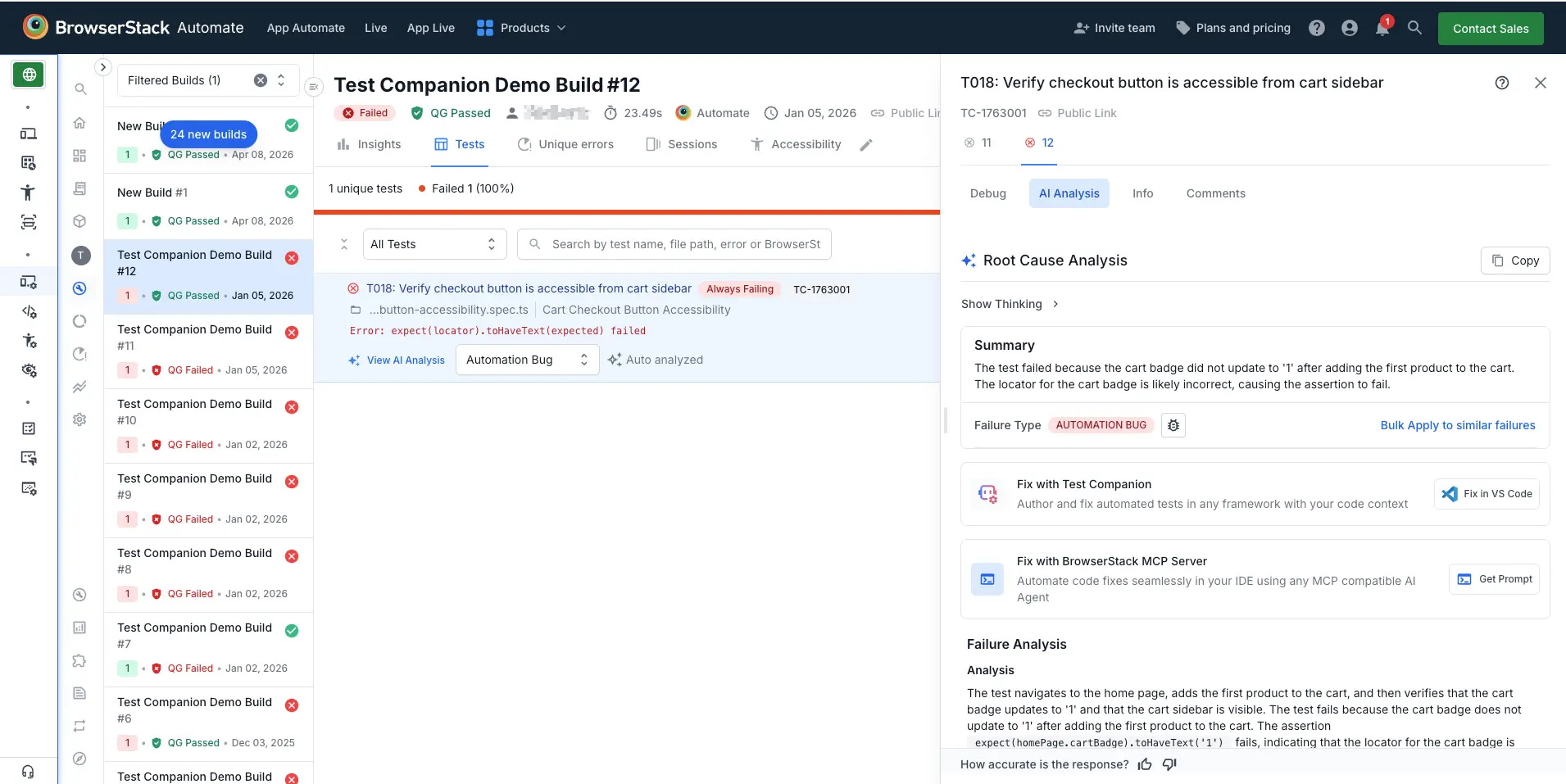

- Open the BrowserStack Automate dashboard in your browser.

- Select the build that contains the failed test.

- Click the Tests tab to view the test list.

- Select the failed test. The test detail panel opens on the right side of the screen.

- Click the AI Analysis tab.

The AI Analysis tab displays a Root Cause Analysis that includes a summary of why the test failed, a failure type classification (for example, Automation Bug or Product Bug), and a detailed failure analysis.

To fix the failure with Test Companion, click Fix in VS Code in the AI Analysis panel. Test Companion opens in your IDE with the failure context pre-loaded. It reads the root cause analysis, locates the relevant test file in your project, and suggests a code fix.

If the same root cause affects multiple tests in the build, click Bulk Apply to similar failures to apply the failure type classification across all matching tests. Then fix the shared root cause once in your test code.

Fix from the chat

Use this method to start failure analysis from the Test Companion chat box. The chat accepts several input types, alone or in combination. The method works for failures captured in Test Reporting and for failures you encountered locally.

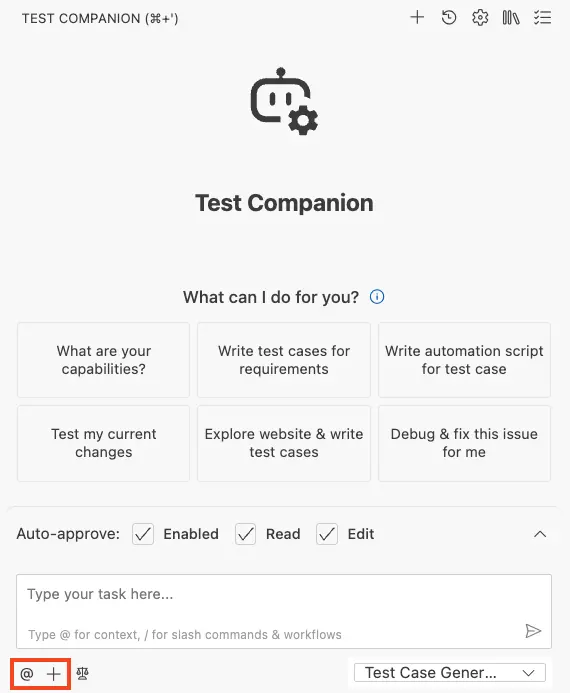

- Open the Test Companion panel in the IDE.

- In the chat box, paste any of the following:

  - A Test Reporting session URL or ID, to pull the full failure context (test name, error, stack trace, and metadata).

  - An error message or stack trace pasted from your test output.

  - A description of the failure in plain language.

- For more accuracy, type @ and attach the relevant test file, source file, or terminal output.

- Press Enter to send.

Test Companion analyzes the failure context, identifies the likely cause, and suggests a code fix in the chat. When you provide a Test Reporting session URL or ID, Test Companion fetches the same structured failure data the panel flow uses.

Paste one session link or ID per message. To analyze multiple failures, send them in separate messages so Test Companion can keep each diagnosis distinct.
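If you have several session links in one block of text (for example, copied from a build report), a small helper can split them so that each goes into its own message. This is a sketch under stated assumptions: `oneSessionPerMessage` is a hypothetical helper, not a Test Companion feature, and the pattern only recognizes URLs shaped like the example in this guide. It does not handle bare session IDs.

```typescript
// Matches URLs shaped like the example in this guide:
// https://observability.browserstack.com/projects/<PROJECT>/builds/<BUILD>/sessions/<SESSION_ID>
const SESSION_URL =
  /https:\/\/observability\.browserstack\.com\/projects\/[^\/\s]+\/builds\/[^\/\s]+\/sessions\/[^\/\s?#]+/g;

// Returns each distinct session URL found in the pasted text,
// in order of first appearance, so each can be sent as its own message.
function oneSessionPerMessage(pasted: string): string[] {
  const matches: string[] = pasted.match(SESSION_URL) ?? [];
  return matches.filter((url, index) => matches.indexOf(url) === index);
}
```

Send each returned URL as a separate chat message so that each diagnosis stays distinct.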

Review and apply fixes

Regardless of which method you use, Test Companion delivers the fix as a code suggestion in the chat. The suggestion includes:

- Root cause analysis: A brief explanation of why the test is failing.

- Suggested code change: The specific lines to modify, with before-and-after context.

- Confidence notes: If the fix involves assumptions (for example, a changed selector or a timing issue), Test Companion flags them.

After you apply the fix, run your test suite again to confirm the issue is resolved.

Example prompts

- From a Test Reporting session URL or ID:

https://observability.browserstack.com/projects/<PROJECT>/builds/<BUILD>/sessions/<SESSION_ID>

Or paste the ID alone on its own line:

<SESSION_ID>

- Combining a session with extra context:

https://observability.browserstack.com/projects/<PROJECT>/builds/<BUILD>/sessions/<SESSION_ID>

- With pasted error context:

Fix this test failure:

Error: expect(received).toBe(expected) // Object.is equality

Expected: 200

Received: 401

The test file is @tests/api/auth.spec.ts

- Describing the failure:

My login test is failing because the redirect URL changed from /home to /dashboard after our last release. Update the test assertion.

- Broad failure analysis:

I have 5 failing tests from my last build. The common error is a timeout on element selectors. What is likely wrong and how do I fix it?

Common failure types

Test Companion can help fix these common failure types:

- Stale selectors: An element ID or class changed after a UI redesign. Test Companion updates the selector to match the new element.

- Timing issues: A test runs before an element is visible or clickable. Test Companion adds an explicit wait or adjusts the wait strategy.

- Flaky behavior: A test passes intermittently due to a race condition. Test Companion identifies the race and adds stabilization logic.

- Genuine application bugs: The application behavior is broken. Test Companion confirms the bug and explains what is wrong, rather than patching around it.
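To illustrate the timing-issue fix, an explicit wait polls for a condition instead of sleeping for a fixed time. The sketch below is a generic helper, not the code Test Companion generates: `waitFor` and its parameters are hypothetical names, and most test frameworks ship their own equivalent that you should prefer when available.

```typescript
// Polls `condition` until it returns true, or throws once `timeoutMs` has elapsed.
// The condition is always checked at least once.
async function waitFor(
  condition: () => boolean | Promise<boolean>,
  timeoutMs = 5000,
  intervalMs = 100,
): Promise<void> {
  const deadline = Date.now() + timeoutMs;
  while (true) {
    if (await condition()) {
      return;
    }
    if (Date.now() >= deadline) {
      throw new Error(`Condition not met within ${timeoutMs} ms`);
    }
    // Pause before the next poll instead of busy-waiting.
    await new Promise<void>((resolve) => setTimeout(resolve, intervalMs));
  }
}
```

Unlike a hard-coded sleep, this returns as soon as the condition holds and fails with a clear message when it never does.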

Example scenarios

These scenarios show how Test Companion handles real-world failure patterns.

UI redesign breaks selectors

Your navigation header was redesigned. 23 Selenium tests now fail with “element not found” errors because button IDs have changed. Paste the error output and reference the test files with @. Test Companion maps each old selector to its new equivalent and generates a patch file with all updated selectors.
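The selector update amounts to applying an old-to-new mapping across the test sources. The following sketch shows the idea with plain string replacement. `updateSelectors` and the sample selectors in the usage note are hypothetical, and the patch Test Companion generates may be produced differently.

```typescript
// Replaces every occurrence of each old selector with its new equivalent.
// Longer selectors are replaced first so that a selector which is a prefix
// of another (for example "#nav-login" and "#nav-login-help") does not
// clobber the longer one.
function updateSelectors(
  source: string,
  selectorMap: Record<string, string>,
): string {
  const oldSelectors = Object.keys(selectorMap).sort(
    (a, b) => b.length - a.length,
  );
  let updated = source;
  for (const oldSelector of oldSelectors) {
    updated = updated.split(oldSelector).join(selectorMap[oldSelector]);
  }
  return updated;
}
```

For example, calling `updateSelectors(fileText, { "#nav-login": "#header-sign-in" })` rewrites each use of the old ID in `fileText`. Plain text replacement can also touch comments or unrelated strings, so review the resulting diff before you commit it.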

Intermittent (flaky) test failure

A test passes 70% of the time and fails the remaining 30% with “Expected element to be visible.” Test Companion identifies a race condition where the test clicks a button before an API call completes. It replaces the hard-coded wait with an explicit wait for network idle and adds a retry strategy for the assertion.
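A retry strategy for an assertion can be as simple as re-running it a bounded number of times with a short pause in between. This is a minimal sketch, assuming a hypothetical `retryAssertion` helper; it is not the stabilization code Test Companion produces, and your test framework may already provide retrying assertions.

```typescript
// Runs `assertion` up to `attempts` times, pausing `delayMs` between tries.
// Resolves on the first success; rethrows the last failure otherwise.
async function retryAssertion(
  assertion: () => void | Promise<void>,
  attempts = 3,
  delayMs = 200,
): Promise<void> {
  let lastError: unknown = new Error("retryAssertion needs at least one attempt");
  for (let attempt = 1; attempt <= attempts; attempt++) {
    try {
      await assertion();
      return;
    } catch (error) {
      lastError = error;
      if (attempt < attempts) {
        await new Promise<void>((resolve) => setTimeout(resolve, delayMs));
      }
    }
  }
  throw lastError;
}
```

Keep the attempt count small. Retries hide a race condition rather than remove it, so pair them with a fix for the underlying wait.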

API contract change breaks assertions

The backend team split the full_name field into first_name and last_name. Three test files that assert the presence of full_name now fail. Reference the test files with @ and describe the API change. Test Companion updates every affected assertion across all three files and adjusts the test data fixtures.
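The assertion change in this scenario can be sketched as follows. The `User` type and `assertUserName` helper are hypothetical and written without a test framework so that the example stays self-contained; only the field names `full_name`, `first_name`, and `last_name` come from the scenario.

```typescript
// Response shape after the split. Before the change, the payload
// carried a single `full_name` string instead.
interface User {
  first_name: string;
  last_name: string;
}

// Checks the new fields and fails if the removed field is still present.
function assertUserName(user: User, firstName: string, lastName: string): void {
  if ("full_name" in user) {
    throw new Error("Response still contains the removed full_name field");
  }
  if (user.first_name !== firstName) {
    throw new Error(`Expected first_name ${firstName}, received ${user.first_name}`);
  }
  if (user.last_name !== lastName) {
    throw new Error(`Expected last_name ${lastName}, received ${user.last_name}`);
  }
}
```

Fixtures need the same change: any test data that still carries `full_name` should be split into the two new fields.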

Test fails only in CI, passes locally

Your checkout flow test passes locally but times out in CI. Test Companion identifies that the test relies on a local environment variable for the API base URL that is not set in CI. It suggests adding the variable to the CI configuration and updating the test to fail fast with a clear error when the variable is missing.
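Failing fast on a missing variable might look like the sketch below. `requireEnv` is a hypothetical helper and `API_BASE_URL` in the usage note is an assumed variable name; substitute whatever your project uses. The environment is passed in as a parameter so that the helper is easy to test.

```typescript
// Returns the value of a required environment variable, or throws
// immediately with a message that names the missing variable.
function requireEnv(
  env: Record<string, string | undefined>,
  name: string,
): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(
      `Missing required environment variable ${name}. Set it in your CI configuration.`,
    );
  }
  return value;
}
```

In a Node.js test setup you could call `requireEnv(process.env, "API_BASE_URL")` once before the suite runs, so that a misconfigured CI job fails in seconds with a clear message instead of timing out.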

Best practices

Follow these best practices to get the most accurate and effective fixes from Test Companion:

- Provide full error output: The more context you give (stack trace, error message, logs), the more accurate the diagnosis.

- Prefer a Test Reporting session URL or ID over typed error text when you have one: A session link or ID gives Test Companion the full structured failure context (test name, error, stack trace, metadata) in one paste. Typing or summarizing the error often drops detail that affects the diagnosis.

- Reference the test file with @: Including the failing test file gives Test Companion access to the actual code, not only the error message.

- Fix one issue at a time: If multiple tests are failing for different reasons, address them individually for cleaner, more targeted fixes.

Next steps

- Automate tests: If the failed test needs to be rewritten, regenerate the automation script.

- AI settings: Configure auto-approve to let Test Companion apply fixes automatically.
