Identifying bugs is a crucial part of the testing process. Once a bug is found, it must be reported clearly so that it can be fixed properly. Writing a bug report is therefore a key stage of the bug lifecycle, coming right after the bug is identified.

Overview

Components of a Bug Report:

- Title/Bug ID

- Environment

- Steps to Reproduce a Bug

- Expected Result

- Actual Result

- Visual Proof (screenshots, videos, text) of Bug

- Severity/Priority

Do’s for Creating a Bug Report

- Be Clear and Specific

- Provide Detailed Steps to Reproduce

- Include Relevant Environment Details

- Use Screenshots and Attachments

- Assign Correct Severity and Priority

- Be Objective

Don’ts for Creating a Bug Report

- Write Vague Descriptions

- Skip Essential Details

- Use Complex Language or Jargon

- Include Irrelevant Information

- Assign Incorrect Severity/Priority

- Make Assumptions

This stage lays the foundation for debugging and helps deliver a bug-free user experience. This guide explains what a bug report is and how to write one effectively.

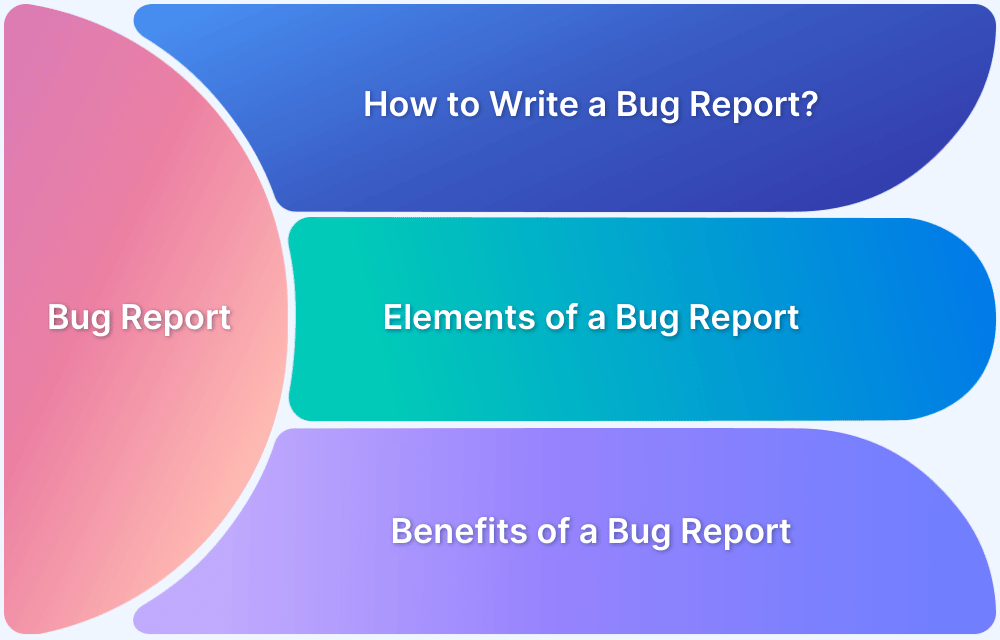

What is a Bug Report?

A Bug Report is a document that details an issue, defect, or flaw found in a software application. Its primary purpose is to inform developers and other stakeholders about the problem so that it can be investigated and resolved.

A bug report typically includes information such as a description of the issue, steps to reproduce it, the expected and actual outcomes, and details about the environment in which the bug was found. A well-crafted bug report is essential for efficient debugging and helps improve the overall quality of the software.

Benefits of a good Bug Report

A good bug report covers all the crucial information about the bug, which can be used in the debugging process:

- Helps with detailed bug analysis.

- Gives better visibility into the bug and points toward the right debugging approach.

- Saves cost and time by helping debug at an earlier stage.

- Prevents bugs from going into production and disrupting end-user experience.

- Acts as a guide to help avoid the same bug in future releases.

- Keeps all the stakeholders informed about the bug, helping them take corrective measures.

Elements of an Effective Bug Report

When studying how to create a bug report, start with the question: What does a bug report need to tell the developer?

A bug report should be able to answer the following questions:

- What is the problem?

- How can the developer reproduce the problem (to see it for themselves)?

- Where in the software (which webpage or feature) has the problem appeared?

- What is the environment (browser, device, OS) in which the problem has occurred?

Components of a Bug Report

An effective bug report should contain the following essential parts:

- Title/Bug ID

- Environment

- Steps to reproduce a Bug

- Expected Result

- Actual Result

- Visual Proof (screenshots, videos, text) of Bug

- Severity/Priority
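For teams that file reports through scripts or internal tooling, the components above map naturally onto a small record type. The following is a minimal Python sketch rather than a prescribed schema: the class name `BugReport`, its field names, and the ID `BUG-101` are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BugReport:
    """Illustrative record holding the components of a bug report."""
    bug_id: str
    title: str
    environment: Dict[str, str]
    steps_to_reproduce: List[str]
    expected_result: str
    actual_result: str
    severity: str
    priority: str
    attachments: List[str] = field(default_factory=list)  # visual proof: file paths

    def missing_components(self) -> List[str]:
        """Return the names of components that were left empty."""
        return [name for name, value in vars(self).items() if not value]


report = BugReport(
    bug_id="BUG-101",  # hypothetical ID
    title="Selected product is not added to the cart",
    environment={"OS": "Windows 10", "Browser": "Firefox 115"},
    steps_to_reproduce=[
        "Click the 'Add to Cart' button on the Homepage.",
        "Check whether the same product is in the cart.",
    ],
    expected_result="The selected product should be visible in the cart.",
    actual_result="The cart remains empty.",
    severity="Major",
    priority="High",
)
# No screenshot or video was attached, so one component is reported as missing.
print(report.missing_components())
```

A check like `missing_components()` can run before a report is submitted, so incomplete reports are caught by the tester rather than by the developer.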

1. Title/Bug ID

The title should provide a quick description of the bug. For example, “Distorted Text in FAQ section on <name> homepage”.

Assigning an ID to the bug also helps to make identification easier.

2. Environment

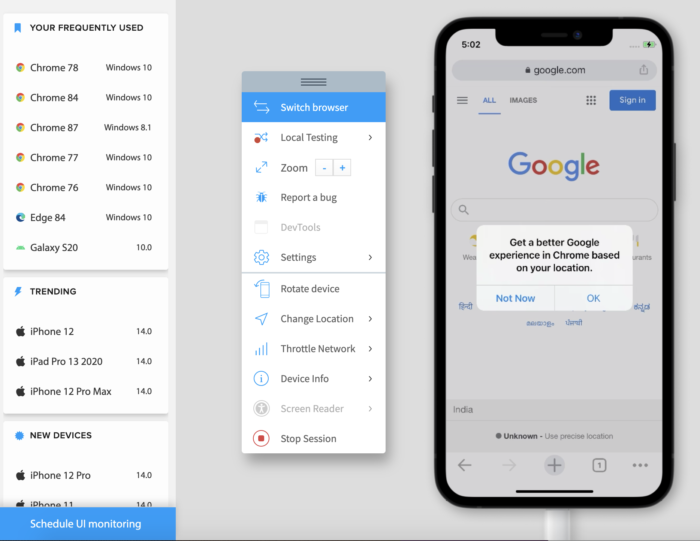

A bug can appear in one environment and not in others. For example, a bug may appear only when the website runs on Firefox, or an app may malfunction only on an iPhone X. Such bugs can only be identified through cross-browser or cross-device testing.

When reporting the bug, QAs must specify if the bug is observed in one or more specific environments. Use the template below for specificity:

- Device Type: Hardware and specific device model

- OS: OS name and version

- Tester: Name of the tester who identified the bug

- Software version: The version of the software which is being tested, and in which the bug has appeared.

- Connection Strength: If the bug is dependent on the internet connection (4G, 3G, WiFi, Ethernet), mention its strength at the time of testing.

- Rate of Reproduction: The number of times the bug has been reproduced, with the exact steps involved in each reproduction.
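Part of this template can be filled in automatically when the test runs on the tester's own machine. The sketch below uses Python's standard `platform` module; the function name `collect_environment` and the dictionary keys are assumptions chosen to mirror the template. It describes only the machine the script runs on, so details of a remote device, the connection strength, and the rate of reproduction still have to be recorded by hand.

```python
import platform


def collect_environment(tester: str, software_version: str) -> dict:
    """Gather environment fields for the machine this script runs on."""
    return {
        # Hardware architecture, e.g. "x86_64" or "arm64"
        "Device Type": platform.machine() or "unknown",
        # OS name and version, e.g. "Windows 10" or "Linux 6.5.0"
        "OS": f"{platform.system()} {platform.release()}".strip(),
        "Tester": tester,
        "Software version": software_version,
    }


env = collect_environment(tester="A. Tester", software_version="2.1.0")
for name, value in env.items():
    print(f"- {name}: {value}")
```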

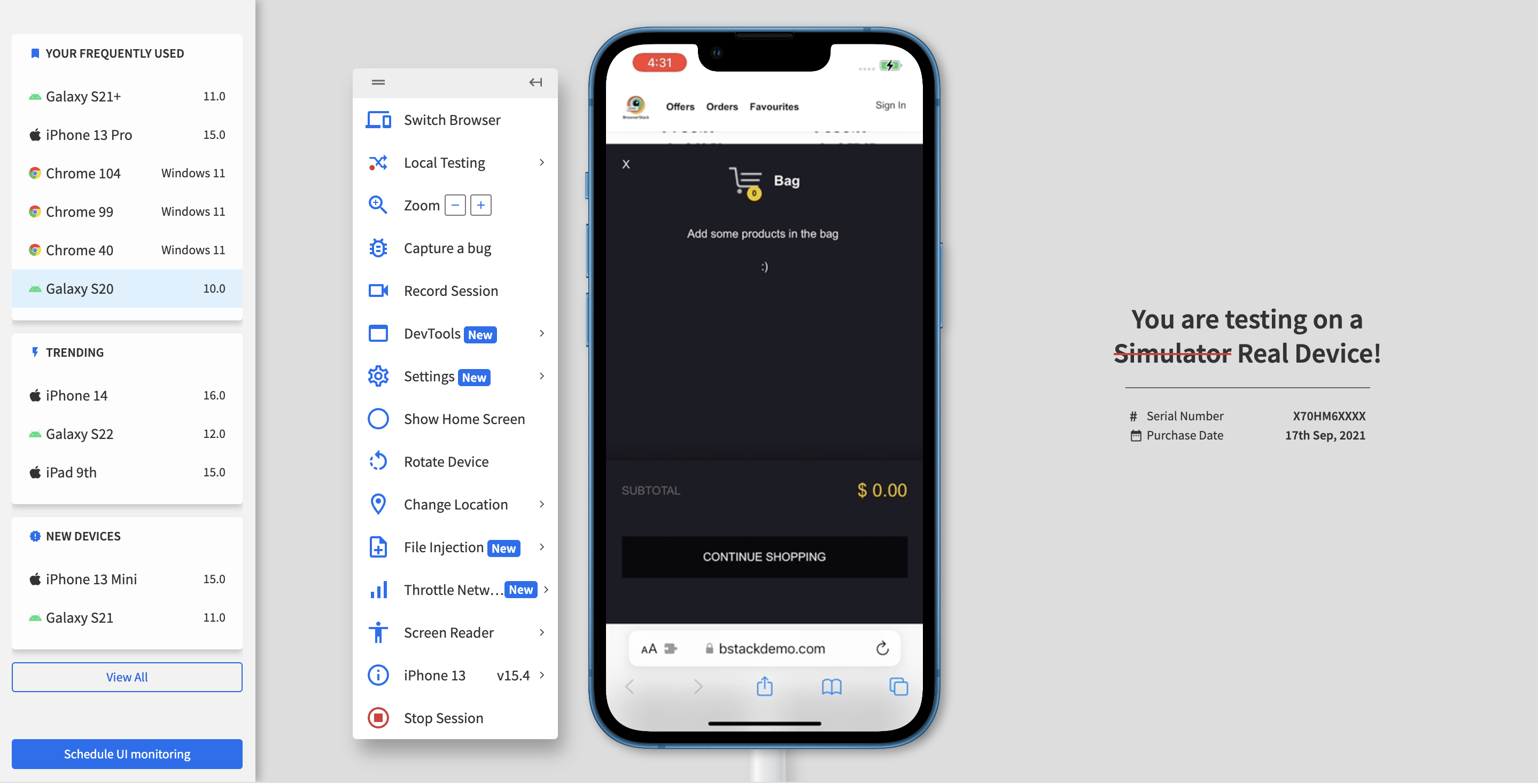

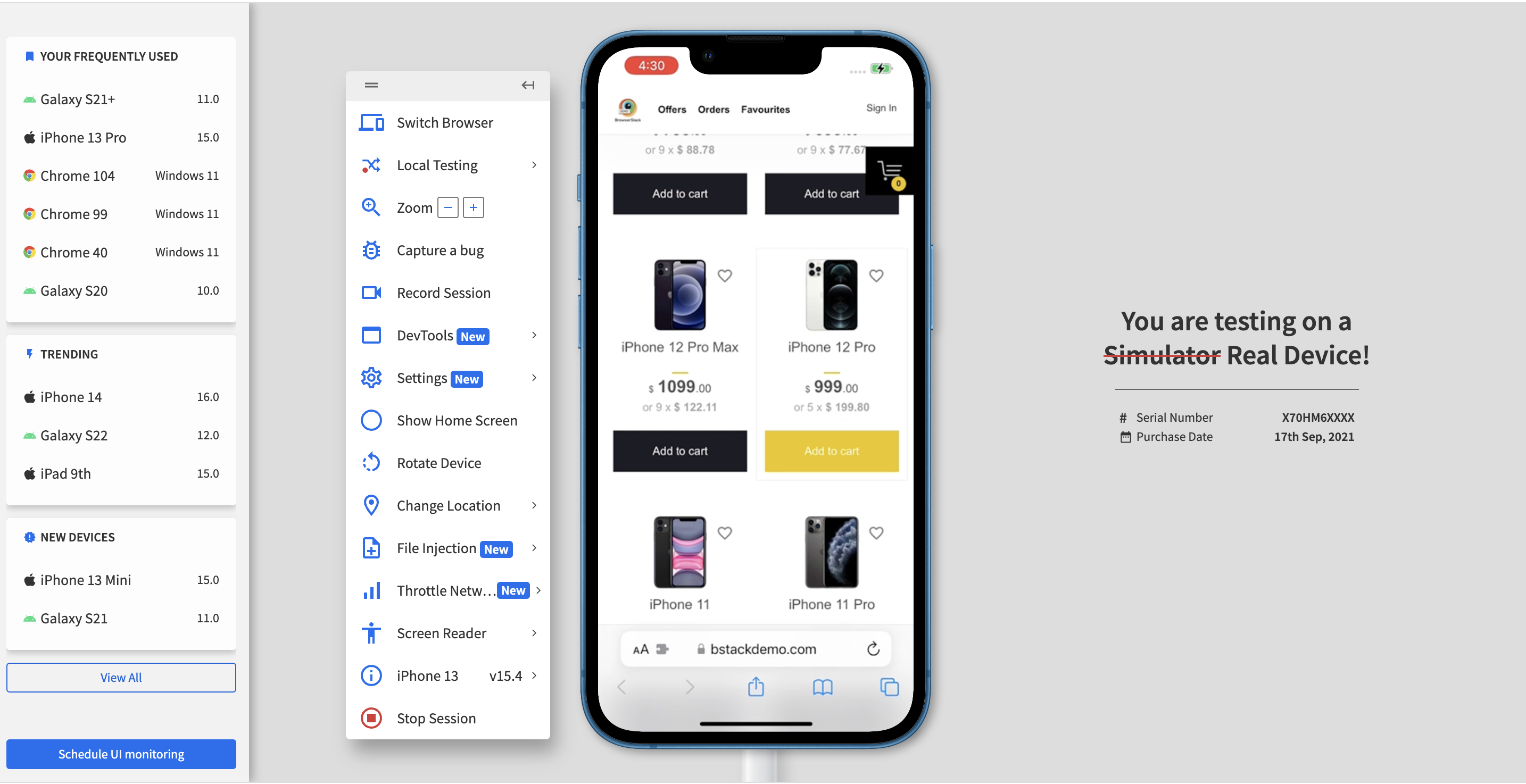

Reporting bugs is an effortless task on BrowserStack’s real device cloud.

3. Steps to Reproduce a Bug

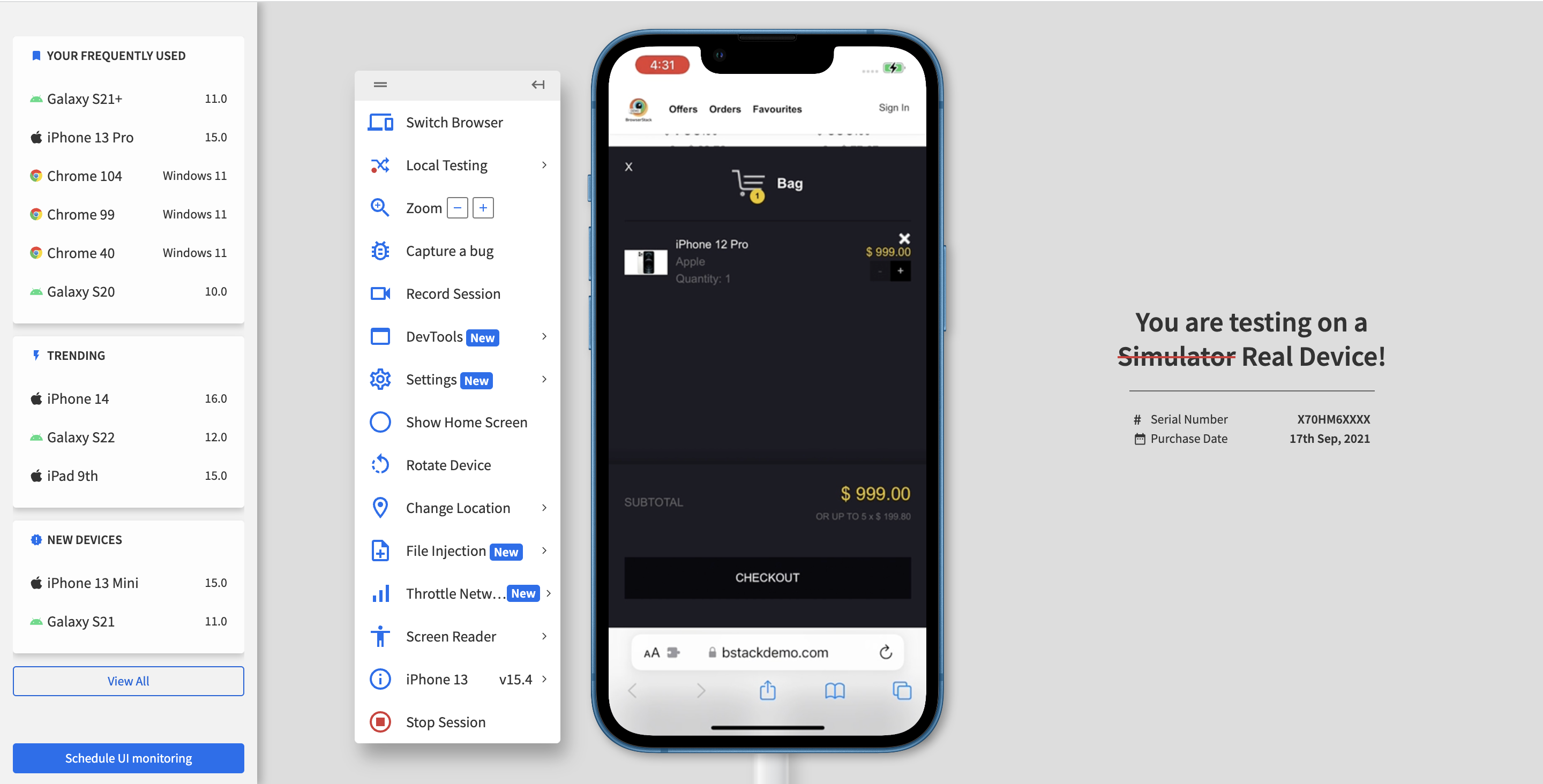

Number the steps clearly from first to last so that the developers can quickly and exactly follow them to see the bug for themselves. Here is an example of how one can reproduce a bug in steps:

- Click on the “Add to Cart” button on the Homepage (this takes the user to the Cart).

- Check if the same product is added to the cart.

4. Expected Result

This component of the bug report describes how the software is supposed to function in the given scenario. From the expected result, the developer learns what the requirement is, which helps them gauge the extent to which the bug disrupts the user experience.

Describe the ideal end-user scenario, and try to offer as much detail as possible. For the above example, the expected result should be:

“The selected product should be visible in the cart.”

5. Actual Result

Detail what the bug is actually doing and how it is a distortion of the expected result.

- Elaborate on the issue

- Is the software crashing?

- Is it simply pausing in action?

- Does an error appear?

- Or is it simply unresponsive?

Specificity in this section is most helpful to developers. State distinctly what is going wrong, and provide additional details so that they can start investigating the issue with all variables in mind, such as:

- “Link does not lead to the expected page. It shows a 404 error.”

- “When clicked, the button does not do anything at all.”

- “The main image on the homepage is distorted on the particular device-browser combination.”

Continuing the above example, the actual result in case of a bug will be:

“The cart remains empty, and the selected product is not added to it.”

6. Visual Proof of Bug

Screenshots, videos, or log files must be attached to clearly depict the occurrence of the bug. Depending on the nature of the bug, the developer may need video, text, and images.

Testing using BrowserStack can leverage multiple debugging options such as text logs, visual logs (screenshots), video logs, console logs, and network logs. These make it easy for QAs and devs to detect exactly where the error has occurred, study the corresponding code and implement fixes.

BrowserStack’s debugging toolkit makes it possible to easily verify, debug and fix different aspects of software quality, from UI functionality and usability to performance and network consumption.

The range of debugging tools offered by BrowserStack’s mobile app and web testing products is as follows:

- Live: Pre-installed developer tools on all remote desktop browsers and Chrome developer tools on real mobile devices (exclusive on BrowserStack)

- Automate: Screenshots, Video Recording, Video-Log Sync, Text Logs, Network Logs, Selenium Logs, Console Logs

- App Live: Real-time Device Logs from Logcat or Console

- App Automate: Screenshots, Video Recording, Video-Log Sync, Text Logs, Network Logs, Appium Logs, Device Logs, App Profiling

7. Bug Severity and Priority

Every bug must be assigned a level of severity and corresponding priority. This reveals the extent to which the bug affects the system, and in turn, how quickly it needs to be fixed.

Levels of Bug Severity:

- Low: Bug won’t result in any noticeable breakdown of the system

- Minor: Results in some unexpected or undesired behavior, but not enough to disrupt system function

- Major: Bug capable of collapsing large parts of the system

- Critical: Bug capable of triggering complete system shutdown

Levels of Bug Priority:

- Low: Bug can be fixed at a later date. Other, more serious bugs take priority

- Medium: Bug can be fixed in the normal course of development and testing.

- High: Bug must be resolved at the earliest as it affects the system adversely and renders it unusable until it is resolved.

Ensure that QA teams have sufficient knowledge of bug severity and bug priority before they start assigning levels in a bug report.
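When reports are handled by tooling, fixed enumerations keep the levels consistent across testers. The sketch below encodes the severity and priority levels listed above as Python enums and sorts a few sample bugs for triage. The names `Severity`, `Priority`, and `triage_order` are illustrative, and ordering by priority first and severity second is an assumption; a team may prefer a different rule.

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MINOR = 2
    MAJOR = 3
    CRITICAL = 4


class Priority(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def triage_order(bugs):
    """Sort (title, severity, priority) tuples: highest priority first, then highest severity."""
    return sorted(bugs, key=lambda bug: (bug[2], bug[1]), reverse=True)


bugs = [
    ("Typo in footer", Severity.LOW, Priority.LOW),
    ("Login returns 403", Severity.CRITICAL, Priority.HIGH),
    ("Cart stays empty after Add to Cart", Severity.MAJOR, Priority.HIGH),
]
for title, severity, priority in triage_order(bugs):
    print(f"{priority.name:<6} {severity.name:<8} {title}")
```

Because the levels are integers under the hood, comparisons such as `Severity.MAJOR < Severity.CRITICAL` work directly, and a misspelled level raises an error instead of slipping into a report.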

How to write a Bug Report?

Writing an effective bug report is a crucial part of the software development lifecycle. Here’s a step-by-step guide to crafting a bug report that gets results:

Step 1: Craft a Clear and Informative Title

- Be Specific and Descriptive: The title should provide a concise summary of the issue. Avoid vague terms and focus on the core problem.

- For example: “Login Page Error 403 When Accessing via Firefox 115.”

Step 2: Provide a Detailed Summary

- Offer a Brief Overview: Write a summary that encapsulates the bug’s nature and impact. This section should give a quick understanding of the problem’s significance and context.

- For example: “When attempting to log in to the application using Firefox 115, users encounter a 403 Forbidden error, preventing access to their accounts.”

Step 3: List Reproduction Steps

- Detail Each Step Precisely: Enumerate the steps required to reproduce the bug. Be clear and detailed to ensure that others can follow the same process.

- For instance:

- Open Firefox 115.

- Navigate to the login page of the application.

- Enter valid user credentials.

- Click the “Login” button.

Step 4: State Expected vs. Actual Results

- Highlight the Discrepancy: Clearly define what should happen and what actually occurs. This helps in identifying the nature of the bug.

- For example:

- Expected Result: User should be successfully logged in and redirected to the dashboard.

- Actual Result: An error 403 message is displayed, and the login attempt fails.

Step 5: Describe the Environment

- Specify the Context: Include details about the environment where the bug was encountered, such as:

- Operating System: Windows 10

- Browser: Firefox 115

- Application Version: 2.1.0

Step 6: Attach Error Messages and Logs

- Provide Relevant Information: Include any error messages, codes, or logs that appear when the bug occurs. This information is essential for troubleshooting.

- For example:

- Error Message: “403 Forbidden”

- Logs: [Attach relevant log files or screenshots]
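Full log files are often long, so it can help to attach a short excerpt alongside them. The following sketch pulls out lines that mention `ERROR` or a 4xx/5xx status code, together with the line just before each. The function name `extract_error_lines` and the sample log format are hypothetical, and the pattern is a crude heuristic that would need adjusting to the real log format.

```python
import re


def extract_error_lines(log_text: str, context: int = 1) -> list:
    """Return lines mentioning ERROR or a 4xx/5xx status, plus `context` lines before each."""
    pattern = re.compile(r"\b(ERROR|[45]\d{2})\b")
    lines = log_text.splitlines()
    keep = set()
    for index, line in enumerate(lines):
        if pattern.search(line):
            # Keep the matching line and the preceding context lines
            keep.update(range(max(0, index - context), index + 1))
    return [lines[index] for index in sorted(keep)]


# Hypothetical application log, for illustration only
sample_log = """\
10:02:11 INFO  GET /login 200
10:02:15 INFO  POST /login user=demo
10:02:15 ERROR POST /login 403 Forbidden
10:02:16 INFO  GET /favicon.ico 200
"""

for line in extract_error_lines(sample_log):
    print(line)
```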

Step 7: Include Visual Evidence

- Add Screenshots or Videos: Attach screenshots or a video that demonstrates the bug. Visual evidence can provide additional context and help in understanding the issue more clearly.

Step 8: Assess Severity and Priority

- Determine Impact and Urgency: Indicate the severity and priority of the bug to help with triaging and resolution.

- Severity Levels:

- Low: The bug has minimal impact and does not noticeably affect system performance.

- Minor: Causes some unexpected behavior but does not disrupt overall system functionality.

- Major: Affects significant parts of the system, leading to substantial issues.

- Critical: Can cause a complete system shutdown or major breakdown.

- Priority Levels:

- Low: Fixing the bug can be deferred; focus is on more severe issues first.

- Medium: Bug can be addressed during regular development and testing cycles.

- High: Requires immediate resolution due to its severe impact on system usability.

- For example:

- Severity: Critical (Critical functionality is blocked)

- Priority: High (Needs immediate attention)

Step 9: Provide Additional Context

- Add Any Relevant Details: Include any extra information that might be useful, such as the frequency of the issue or recent changes that could be related.

- For example:

- Frequency: Occurs every time a login attempt is made in Firefox 115.

- Recent Changes: “Recent updates to authentication logic.”

Step 10: Confirm Reproducibility

- Verify Consistency: Indicate whether the issue can be consistently reproduced or if it happens intermittently. This helps in understanding the bug’s stability and potential causes.
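One way to state reproducibility precisely is to repeat the steps several times and report how often the bug appeared. The helper below is a small sketch of that idea; the name `reproduction_rate` and the wording of its labels are assumptions, not a standard.

```python
def reproduction_rate(attempts: list) -> str:
    """Summarize a list of booleans (True = bug reproduced) as 'x/y (z%), label'."""
    if not attempts:
        return "0/0 (not attempted)"
    reproduced = sum(attempts)
    total = len(attempts)
    percent = round(100 * reproduced / total)
    if reproduced == total:
        label = "consistent"
    elif reproduced:
        label = "intermittent"
    else:
        label = "not reproduced"
    return f"{reproduced}/{total} ({percent}%), {label}"


# Five attempts, one of which did not trigger the bug
print(reproduction_rate([True, True, False, True, True]))  # 4/5 (80%), intermittent
```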

Step 11: Assign and Follow Up

- Route to the Appropriate Person: If using a bug tracking system, assign the report to the relevant developer or team. Follow up to ensure that the bug is being addressed and resolved effectively.

Bug Report Template

Here is a bug report template that can be used as a reference to create bug reports.

| Field | Details |
|---|---|
| Title/Summary | [Clear and concise description of the bug] |
| Description | [Detailed explanation of the issue and its impact] |
| Steps to Reproduce | 1. [Step 1] 2. [Step 2] 3. [Step 3] 4. [Step 4] |
| Expected Result | [Describe what should happen] |
| Actual Result | [Describe what actually happens] |
| Environment | Operating System: [e.g., Windows 10, macOS 14]; Browser/App Version: [e.g., Chrome 93, App v3.2.1]; Device: [e.g., Desktop, iPhone X] |
| Severity | [Low / Minor / Major / Critical] |
| Priority | [Low / Medium / High] |
| Attachments | [Screenshots, logs, videos, or console output] |
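If reports are generated by a script, the same two-column layout can be produced programmatically. The sketch below renders a dictionary of fields as a Markdown table like the template above; the function name `render_bug_report` is an assumption, and the sample values are taken from the Firefox 115 login example used earlier in this guide.

```python
def render_bug_report(fields: dict) -> str:
    """Render field/value pairs as a two-column Markdown table."""
    rows = ["| Field | Details |", "|---|---|"]
    for name, value in fields.items():
        if isinstance(value, (list, tuple)):
            # Number list items inline, as in the "Steps to Reproduce" row
            value = " ".join(f"{i}. {item}" for i, item in enumerate(value, start=1))
        # Escape pipes so a value cannot break the table layout
        cell = str(value).replace("|", "\\|")
        rows.append(f"| {name} | {cell} |")
    return "\n".join(rows)


table = render_bug_report({
    "Title/Summary": "Login Page Error 403 When Accessing via Firefox 115",
    "Steps to Reproduce": [
        "Open Firefox 115.",
        "Navigate to the login page of the application.",
        "Enter valid user credentials.",
        "Click the Login button.",
    ],
    "Expected Result": "User is logged in and redirected to the dashboard.",
    "Actual Result": "An error 403 message is displayed, and the login attempt fails.",
    "Severity": "Critical",
    "Priority": "High",
})
print(table)
```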

Example of a Bug Report

Here is an example of a bug report:

Title/Summary: “Login button unresponsive after entering valid credentials in Chrome browser.”

Description: The login button on the web application becomes unresponsive when clicked after entering valid username and password credentials. The issue is observed only in the Chrome browser and prevents users from logging into their accounts. No error messages are displayed, and the page remains static.

Steps to Reproduce:

- Open the Chrome browser and navigate to the web application’s login page.

- Enter a valid username in the “Username” field.

- Enter a valid password in the “Password” field.

- Click on the “Login” button.

Expected Result: The user should be successfully logged into the application and redirected to the dashboard.

Actual Result: The login button does not respond when clicked, and the user remains on the login page without being logged in. No error message or indication of failure is provided.

Environment:

- Operating System: Windows 10

- Browser: Google Chrome (Version 93.0.4577.63)

- Device: Desktop

- App Version: 3.2.1

Severity: Critical (critical functionality blocked)

Priority: High (needs to be fixed urgently as it affects user login)

Attachments:

- Screenshot of the login page with the unresponsive button

- Chrome DevTools console log showing no errors

- Video demonstrating the issue

Best Practices to follow while creating a Bug Report

Here are some Do’s and Don’ts for creating a clear and effective Bug Report:

Do’s for creating a Bug Report:

1. Be Clear and Specific

- Do: Write clear, concise, and specific descriptions of the bug.

- Example: “App crashes when clicking the ‘Submit’ button on the feedback form.”

2. Provide Detailed Steps to Reproduce

- Do: List step-by-step instructions that clearly show how to reproduce the bug.

- Example: “1. Open the app. 2. Navigate to the feedback form. 3. Fill in the form and click ‘Submit’.”

3. Include Relevant Environment Details

- Do: Mention the environment where the bug was found, including OS, browser version, device type, and app version.

- Example: “Tested on iPhone 12, iOS 14.5, App version 2.3.1.”

4. Use Screenshots and Attachments

- Do: Add screenshots, logs, or videos to provide visual evidence or additional context.

- Example: Attach a screenshot showing the error message or a video demonstrating the issue.

5. Assign Correct Severity and Priority

- Do: Assess and assign appropriate severity and priority levels to help prioritize the bug.

- Example: “Severity: Critical, Priority: High.”

6. Be Objective

- Do: Stick to the facts without making assumptions or including personal opinions.

- Example: “The login button is unresponsive” instead of “The login button is broken.”

Don’ts for creating a Bug Report:

1. Write Vague Descriptions

- Don’t: Write unclear or vague descriptions that don’t convey the specific issue.

- Example: “Something is wrong with the login page.”

2. Skip Essential Details

- Don’t: Omit critical information like steps to reproduce, environment details, or expected behavior.

- Example: “The app crashed” without explaining how or under what circumstances.

3. Use Complex Language or Jargon

- Don’t: Overcomplicate the report with technical jargon unless necessary.

- Example: Avoid using terms that might not be understood by all team members.

4. Include Irrelevant Information

- Don’t: Add unnecessary details that do not help in understanding or resolving the bug.

- Example: “This bug happened right after I had lunch.”

5. Assign Incorrect Severity/Priority

- Don’t: Mislabel the severity or priority, leading to inappropriate prioritization.

- Example: Labeling a minor UI issue as “Critical” or a crash as “Low Priority.”

6. Make Assumptions

- Don’t: Assume the cause of the bug or speculate about the issue.

- Example: “This bug is probably caused by the last update.”
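Some of these do's and don'ts can be checked mechanically before a report is filed. The sketch below flags missing fields, vague wording, and speculation in a report given as a plain dictionary. Everything in it is an assumption made for illustration: the function name `lint_bug_report`, the field names, and the short phrase lists, which are far from exhaustive and do not replace a human review.

```python
REQUIRED_FIELDS = ("title", "steps", "expected", "actual", "environment")
VAGUE_PHRASES = ("something is wrong", "does not work", "doesn't work", "is broken")
SPECULATIVE_WORDS = ("probably", "i think", "i guess", "maybe")


def lint_bug_report(report: dict) -> list:
    """Return human-readable warnings for a report given as a plain dict."""
    warnings = [f"Missing field: {name}" for name in REQUIRED_FIELDS if not report.get(name)]
    # Only the free-text fields are scanned for wording problems
    text = " ".join(str(report.get(name, "")) for name in ("title", "actual")).lower()
    warnings += [f"Vague wording: '{phrase}'" for phrase in VAGUE_PHRASES if phrase in text]
    warnings += [f"Speculation: '{word}'" for word in SPECULATIVE_WORDS if word in text]
    return warnings


# A draft that repeats two of the mistakes listed above
draft = {
    "title": "Something is wrong with the login page",
    "actual": "This bug is probably caused by the last update.",
    "environment": "Windows 10, Firefox 115",
}
for warning in lint_bug_report(draft):
    print(warning)
```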

Conclusion

Creating a bug report goes hand in hand with detailed bug tracking, so that every bug mentioned in the report gets resolved. However, QA testers cannot easily find every bug in an application without testing on real devices and seeing what end users actually experience.

That is why testers now prefer to test their applications on real devices and browsers in the cloud: these provide real user conditions, so that bugs can be found and included in the defect report.

With tools like BrowserStack Test Reporting and Analytics, you can create, manage, and analyze AI-driven test reports from a centralized dashboard. You can maintain a centralized repository for all your manual and automated test execution results for better visibility and control over the testing process.

BrowserStack Test Reporting and Analytics allows you to write AI-powered detailed Defect Reports and track defects for effective and faster debugging.

Needless to say, it is not possible to detect every possible bug without running tests on real devices. Additionally, one has to know how frequently a bug occurs and the extent of its impact on the software. Testers cannot report bugs they have not caught during tests.

The best way to detect all bugs is to run software through real devices and browsers. Ensure that software is run through both manual testing and automation testing. Automated Selenium testing should supplement manual tests so that testers do not miss any bugs in the Quality Assurance process.

In the absence of an in-house device lab, the best option is to opt for a cloud-based testing service that provides real device browsers and operating systems. BrowserStack offers 3500+ real browsers and devices for manual and automated testing. Users can sign up, choose desired device-browser-OS combinations and start testing.

The same applies to apps. BrowserStack also offers real devices for mobile app testing and automated app testing. Simply upload the app to the required device-OS combination and check to see how it functions in the real world.

Knowing how to write a detailed report makes life easier for testers themselves. If developers have all the information they need regarding a bug, they don’t have to keep reaching out to QAs for more. This streamlines the debugging process, reduces unnecessary delays, and ensures that software hits production as soon as possible.
