A well-designed UI draws users in, and visual testing ensures it renders correctly across browsers and devices. Teams can catch visual defects early by combining automated visual regression tests, stable baselines, and CI/CD integration.

Overview

Core Strategies to Optimize Visual Testing

- Automated visual regression testing: Compare new screenshots with a stable baseline and run tests in parallel across browsers/devices.

- Baseline management: Capture baselines only when stable, and update them for intentional, documented UI changes.

- CI/CD integration: Trigger visual tests on every build and block releases when critical diffs appear.

- Tolerance settings: Set thresholds to filter minor rendering noise and ignore dynamic elements.

- Cross-team review: Designers, developers, and QA review diffs together to confirm design accuracy.
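To make the baseline comparison and tolerance ideas above concrete, here is a minimal sketch of a pixel-level diff. It assumes screenshots are already loaded as grids of grayscale values; the names `diff_ratio`, `pixel_tolerance`, and `ignore` are illustrative and do not come from any particular tool.

```python
from typing import List, Sequence, Tuple

Image = List[List[int]]              # grayscale pixels, 0-255, one list per row
Region = Tuple[int, int, int, int]   # (left, top, right, bottom), right/bottom exclusive


def diff_ratio(baseline: Image, candidate: Image,
               pixel_tolerance: int = 8,
               ignore: Sequence[Region] = ()) -> float:
    """Fraction of compared pixels whose values differ by more than pixel_tolerance."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate))
    if not same_shape:
        raise ValueError("baseline and candidate must have the same dimensions")

    def is_ignored(x: int, y: int) -> bool:
        return any(left <= x < right and top <= y < bottom
                   for left, top, right, bottom in ignore)

    compared = changed = 0
    for y, (row_a, row_b) in enumerate(zip(baseline, candidate)):
        for x, (a, b) in enumerate(zip(row_a, row_b)):
            if is_ignored(x, y):
                continue  # dynamic region, e.g. a clock or an ad slot
            compared += 1
            if abs(a - b) > pixel_tolerance:
                changed += 1
    return changed / compared if compared else 0.0


# Hypothetical 4x4 screenshots used only to exercise the function.
baseline = [[200] * 4 for _ in range(4)]
candidate = [row[:] for row in baseline]
candidate[0][0] = 203   # rendering noise, within tolerance
candidate[1][1] = 90    # a real change
candidate[3][3] = 0     # dynamic content inside the ignored region

ratio = diff_ratio(baseline, candidate, ignore=[(3, 3, 4, 4)])
print(f"{ratio:.1%} of compared pixels changed")   # 1 of 15 compared pixels
```

The tolerance filters the small noise at the first pixel, the ignore region hides the dynamic pixel, and only the genuine change is counted.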

Implementation of Best Practices

- Select the right tool: Use solutions that integrate with your frameworks, handle dynamic content, and offer clear diff reports.

- Start early: Add visual checks at component or unit level to catch issues sooner.

- Review and document: For each failure, confirm whether it’s a bug or intended change, then fix or update baselines.
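The review step above can be sketched as a small decision routine. This is an illustration only: `BaselineStore`, its fields, and the return values are hypothetical names, and a real tool would store images rather than hash strings.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BaselineStore:
    hashes: Dict[str, str] = field(default_factory=dict)   # snapshot name -> image hash
    changelog: List[str] = field(default_factory=list)     # documented review outcomes

    def resolve(self, name: str, new_hash: str, intended: bool, note: str) -> str:
        """Record the outcome of reviewing one failed visual check."""
        if not intended:
            # A bug: keep the baseline so the check keeps failing until it is fixed.
            self.changelog.append(f"{name}: bug, baseline kept ({note})")
            return "fix-required"
        if not note.strip():
            raise ValueError("intentional changes need a documented reason")
        # An intended change: promote the new snapshot to be the baseline.
        self.hashes[name] = new_hash
        self.changelog.append(f"{name}: baseline updated ({note})")
        return "baseline-updated"


store = BaselineStore({"header": "aaa"})
store.resolve("header", "bbb", intended=False, note="logo overlaps nav")
store.resolve("header", "ccc", intended=True, note="new brand color")
```

Requiring a note for every baseline update keeps intentional changes documented, so later reviewers can tell why a baseline moved.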

This guide covers the key strategies and tools to optimize visual testing and deliver a consistent, high-quality UI.

What are Visual Testing Strategies?

Visual testing strategies are methods used to ensure the visual integrity of a software application across different devices, browsers, and screen sizes. They help identify visual defects and layout issues that could negatively impact user experience.

These strategies focus on the best methods to detect visual discrepancies, check how an application responds to user interactions, and verify accessibility standards so that users with disabilities are accommodated.

Effective visual testing strategies are key to enhancing software quality, boosting user satisfaction, and minimizing the risk of visual bugs in production.

Importance of Optimizing Visual Testing Techniques

When solving a problem or executing a task, it is important to use the right tool. For example, if you need to chop wood to build a fire, the tool should be picked carefully. For lighter woods and smaller pieces, an axe would be optimal; for denser woods and larger pieces, a maul would be far more efficient.

Similarly, when planning the testing aspect of application development, it is important to choose and employ the right strategies. Testing carried out without a strategy can be highly inefficient, time-consuming, and cost-prohibitive.

What to Focus on in Visual Testing?

Below are some important do’s when it comes to visual testing:

- Ensure detailed specifications for accurate automated visual tests.

- Start visual testing during unit testing to catch design errors early.

- Incorporate both static and dynamic visual testing in your strategy.

- Execute comprehensive visual tests for every screen, orientation, and device.

- Use specialized automation tools for efficient comparison across screens and devices.

- Include visual testing in your CI pipeline for early defect detection.
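As a rough sketch of the CI step in the last point, a pipeline script can turn diff results into a pass or fail signal. The function name `gate`, the snapshot names, and the 1% limit are assumptions made for illustration.

```python
from typing import Dict


def gate(diff_ratios: Dict[str, float], max_ratio: float = 0.01) -> int:
    """Return a process exit code: 0 if every snapshot is within max_ratio, else 1."""
    failures = {name: r for name, r in diff_ratios.items() if r > max_ratio}
    for name, r in sorted(failures.items()):
        print(f"FAIL {name}: {r:.2%} of pixels changed (limit {max_ratio:.2%})")
    return 1 if failures else 0


# Hypothetical results from a visual test run.
exit_code = gate({"home": 0.0, "checkout": 0.034})
# A CI step would finish with sys.exit(exit_code); a nonzero code fails the build.
```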

What to Avoid in Visual Testing?

Here are some things that should be avoided in visual testing:

- Don’t merge visual testing into functional test automation; keeping the two separate avoids inefficiency.

- Don’t rely solely on manual testing for complete visual validation; involve UX professionals.

- Don’t apply visual testing to applications that have no UI or that are meant for internal use only.

Popular Visual Test Strategies and Techniques

Visual testing becomes more efficient and reliable when it’s driven by clear strategies, such as automation, parallelization, real-device testing, mobile browser coverage, and broad test coverage. Each of these techniques targets a different dimension of UI validation, helping teams maintain visual integrity across devices, browsers, and builds.

1. Automation

Test automation is used to automatically review and validate the UI of an application and ensure that it meets predefined standards for functionality and user experience. Automation reduces time and effort and mitigates human error. It ensures that repetitive tasks that must be performed identically are completed efficiently and on time.

However, there’s a common dilemma when using automation: Is the value provided by automating a certain process worth the time, effort, and labor, which could otherwise have been used for further development or other tasks?

Depending on the task at hand, the payoff for automation is generally well worth it. Particularly for visual testing, automation is normally highly helpful and reduces the human labor required to monitor and carry out extensive tests.

Automation frameworks such as Selenium, Cypress, Playwright, TestCafe, WebdriverIO, and Capybara can interact with web browsers easily. They also support integration with Percy to carry out more thorough visual tests. These frameworks can be used with a plethora of languages like Python, C#, Java, Perl, Ruby, JavaScript, etc., with Cypress working seamlessly when used with Node.js. Different automation frameworks can be evaluated and chosen based on the requirements and needs of the developers.

Automated visual testing tools such as BrowserStack’s Percy allow the user to perform snapshot comparisons against a baseline snapshot, identify visual inconsistencies across different popular browsers, and add visual testing to an existing workflow by integrating with pre-existing test automation frameworks or directly with the application. Automated visual testing can be seamlessly carried out before, after, or during functional testing or other code tasks by integrating with Percy.
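A minimal sketch of adding snapshots to an existing Selenium script might look like the following. It assumes the `selenium` and `percy-selenium` Python packages are installed; the helper `snapshot_pages`, the page names, and the URL are placeholders, not part of either library.

```python
from typing import Callable, Dict, List


def snapshot_pages(driver, snapshot: Callable, pages: Dict[str, str]) -> List[str]:
    """Visit each URL and take one named visual snapshot per page."""
    taken = []
    for name, url in pages.items():
        driver.get(url)
        snapshot(driver, name)   # e.g. percy_snapshot(driver, "Home")
        taken.append(name)
    return taken


def main() -> None:
    # Imported here so the helper above stays usable without these packages.
    from selenium import webdriver       # pip install selenium
    from percy import percy_snapshot     # pip install percy-selenium

    driver = webdriver.Chrome()
    try:
        snapshot_pages(driver, percy_snapshot, {"Home": "https://example.com/"})
    finally:
        driver.quit()
```

Calling `main()` from a script launched through the Percy CLI (for example `npx percy exec -- python visual_test.py`, with a project token set in the environment) would upload the snapshots for comparison. Passing the snapshot function in as a parameter also lets the helper be exercised with a stand-in during local development.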

2. Parallelization

In computer architecture, there is a concept called multithreading. This is the ability to run multiple tasks in a process at the same time, supported by the operating system. Multithreading greatly speeds up most applications’ run time and helps make them more efficient. There is a strategy similar to multithreading often employed in visual testing: Parallelization.

Parallelization means running several automated test scripts concurrently against various environment and device configurations, either locally or in the developer’s CI/CD pipeline. Parallel testing is one of the best ways to speed up automated testing. For visual regression testing in particular, parallelization allows for:

- Testing on multiple device emulators or real devices.

- Testing across different screen resolutions.

- Testing across multiple popular browsers.

- Running these tests for multiple UIs at the same time.
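The points above can be sketched with Python's standard thread pool. The browser list, viewport sizes, and the placeholder `run_visual_check` are assumptions; a real check would drive a browser and compare a screenshot.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product

BROWSERS = ["chrome", "firefox", "safari"]
VIEWPORTS = [(375, 667), (1280, 800)]


def run_visual_check(browser, viewport):
    """Placeholder for one visual test; returns (configuration label, status)."""
    width, height = viewport
    return f"{browser}@{width}x{height}", "passed"


def run_matrix(max_workers=4):
    """Run one check per browser/viewport pair, several at a time."""
    configs = list(product(BROWSERS, VIEWPORTS))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the inputs.
        return dict(pool.map(lambda cfg: run_visual_check(*cfg), configs))


results = run_matrix()
```

Threads suit this kind of work because each check mostly waits on a browser or a remote device rather than on the local CPU.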

It’s simple to see why parallelization is a popular visual test strategy. Running the same tests sequentially takes much longer, since the total run time is the sum of every test’s duration. With parallelization, and enough parallel sessions to run every test at once, the total run time approaches the length of your longest test.
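The run-time claim can be checked with a small estimate. This sketch assumes each test is handed to whichever worker becomes free first and ignores setup overhead; the durations are made-up numbers.

```python
import heapq


def wall_clock(durations, workers):
    """Estimate total run time when each test goes to the next free worker."""
    if workers < 1:
        raise ValueError("workers must be at least 1")
    free_at = [0.0] * workers          # time at which each worker becomes free
    heapq.heapify(free_at)
    for d in durations:
        start = heapq.heappop(free_at)  # earliest available worker
        heapq.heappush(free_at, start + d)
    return max(free_at)


durations = [30, 45, 60, 20, 25]        # seconds, hypothetical

sequential = wall_clock(durations, 1)   # sum of all durations: 180
two_workers = wall_clock(durations, 2)  # 90
one_per_test = wall_clock(durations, 5) # the longest test: 60
```

With fewer workers than tests, the total lands somewhere between the longest test and the sequential sum, which is why the number of parallel sessions matters.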

3. Testing on Real Devices

When testing an application, the biggest challenge for any tester is ensuring that the application’s UI is functional and looks as it should across the large variety of devices, operating systems, and browsers available. The number of possible combinations of these three factors makes manual testing costly and very difficult. Add to this the new devices, OS versions, and browser versions released every year, and it becomes nearly impossible.

Now the easiest way to simplify this problem would be to sacrifice some device and OS combinations. And while it may not seem to be a big deal to cut out some of these combinations, there is a risk that the application might be nonfunctional for certain customers. This could, in turn, lead to a loss in revenue, and depending on the number of device/OS combinations sacrificed, this loss could be quite significant.

To run tests that cover all these device/OS combinations, there are two options: testing on emulators and testing on real devices.

Testing on emulators is generally less reliable, since emulators don’t always behave the way a real device would. Testing on real devices is therefore normally the best way to ensure the quality of your visual tests.

Use BrowserStack Real Device Cloud

When testing on real devices, the major caveat is that several pieces of software need to be set up and each device needs to be configured, which makes it a tedious process overall. Thankfully, there is a better option: using a cloud-based platform.

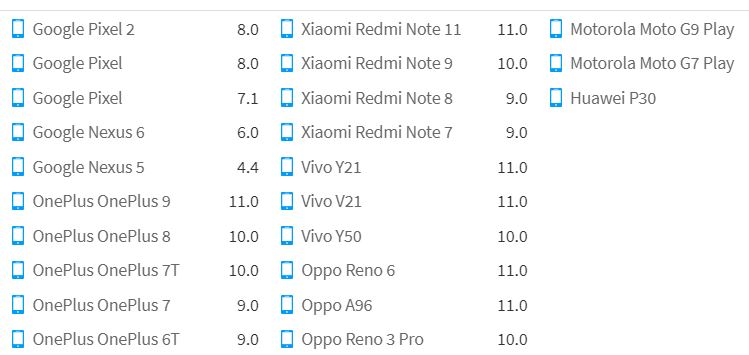

BrowserStack’s Real Device Cloud offers testing on 3500+ real iOS and Android devices (phones and tablets), a few of which are pictured above.

Using BrowserStack’s Real Device Cloud is a cost-effective and comprehensive way to make visual tests more robust by ensuring that the UI is uniform across a wide range of OS and real device combinations.

4. Testing on Mobile Browsers

While testing web applications on various desktop browsers is a given in quality assurance, testing whether the visual interface is consistent and renders well across different mobile browsers is less common.

Percy bridges this gap by allowing QA testers to carry out mobile browser testing. It supports Safari on iOS and Google Chrome on Android, with more mobile browser/OS combinations coming soon.

Now, you might be thinking mobile browser testing is infrequent for a reason. What’s the need to carry out supplemental testing on mobile browsers when the web application looks fine on desktop browsers?

In 2022, 58.62% of global web traffic was mobile web traffic. As of July 2022, the worldwide mobile browser market share stands at 65.16% for Google Chrome and 24.22% for Safari, with Samsung Internet, UC Browser, Opera, and Android making up the remaining 10.62%.

The customer can form a negative impression of a web application at a glance, and with over half of the world’s web traffic coming from mobile web browsers, these statistics clearly demonstrate that mobile browser testing is an important aspect of quality assurance for any web application.
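As a quick worked check of the figures quoted above, the share of mobile browser users reached by testing only Chrome and Safari can be added up directly. The dictionary and function names are illustrative; the percentages are the July 2022 figures from the text.

```python
# Mobile browser market share in percent, as quoted in the text (July 2022).
MOBILE_BROWSER_SHARE = {"Chrome": 65.16, "Safari": 24.22}


def covered_share(tested):
    """Sum the market share of the browsers included in the test plan."""
    return round(sum(MOBILE_BROWSER_SHARE[b] for b in tested), 2)


both = covered_share(["Chrome", "Safari"])   # 89.38
remaining = round(100 - both, 2)             # 10.62, the other mobile browsers
```

Testing these two mobile browsers therefore covers roughly nine in ten mobile browser users by market share.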

5. Coverage

Test coverage is imperative in visual testing to ensure that a sufficiently large pool of customers can access and successfully use the application. Without sufficient coverage, there can be a great loss of revenue and customers downstream.

Running scripts against several environmental conditions helps the developer obtain a clear picture of how well the application functions across different OS and devices. This process is especially important for visual testing, where the developer needs to make sure the app looks and functions as designed across all operating systems, screen sizes, and screen resolutions.
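One way to keep track of this is to enumerate the target environments and compare them with what was actually tested. The sketch below uses hypothetical operating system and screen size labels; `coverage` is an illustrative helper, not a feature of any tool.

```python
from itertools import product


def coverage(tested, operating_systems, screen_sizes):
    """Return (fraction of target combinations tested, sorted list of gaps)."""
    target = set(product(operating_systems, screen_sizes))
    if not target:
        raise ValueError("the target matrix is empty")
    missing = sorted(target - set(tested))
    return 1 - len(missing) / len(target), missing


tested = [("android", "phone"), ("ios", "phone"), ("ios", "tablet")]
fraction, gaps = coverage(tested, ["android", "ios"], ["phone", "tablet"])
print(f"{fraction:.0%} covered, missing: {gaps}")
```

Here three of the four combinations are covered, and the report names the one that still needs a visual test.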

Visual testing adds another much-needed layer of test coverage to the pre-existing functional tests by also ensuring that the UI visible to the customer doesn’t have any visual regressions present during and after application development.

Percy is a prime visual testing tool, which provides coverage across several popular web browsers and is capable of comparing snapshots and highlighting any visual bugs.

How to Use BrowserStack Percy to Automate Visual Testing?

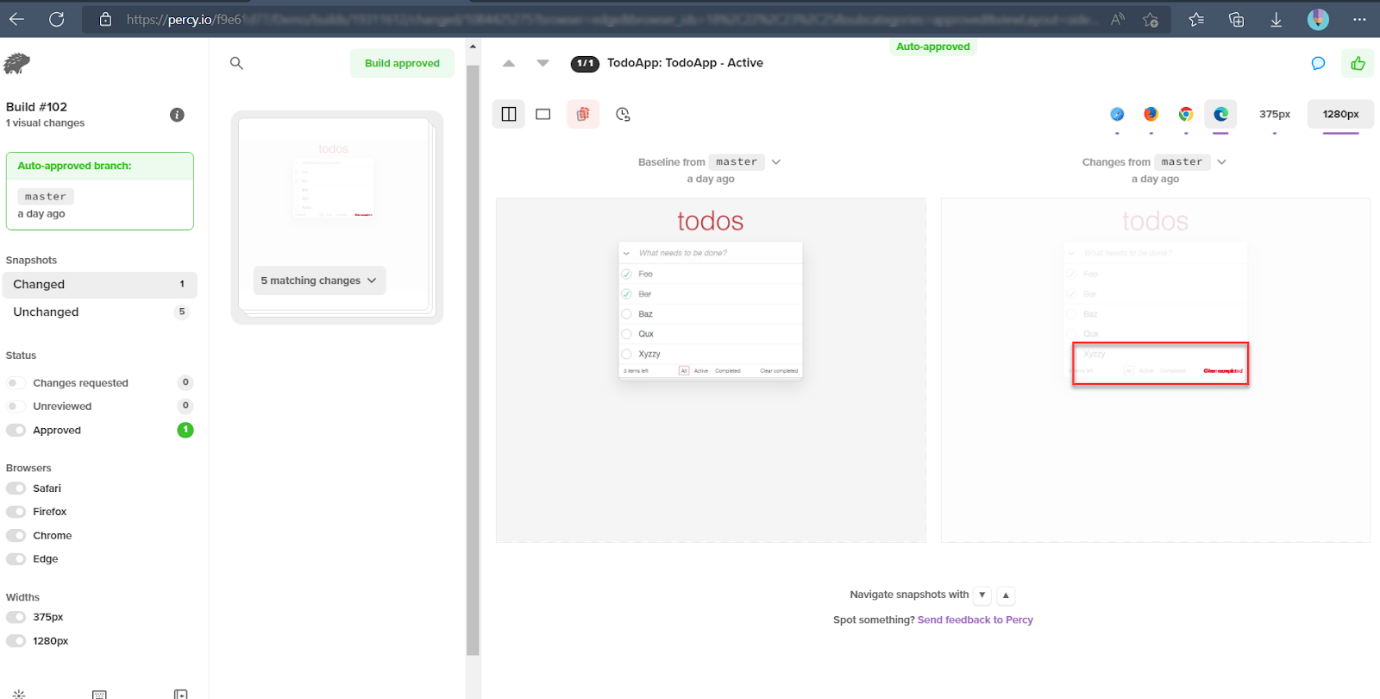

Percy is BrowserStack’s AI-powered visual testing platform that automates visual regression checks across browsers and devices. It integrates directly into CI/CD workflows, captures screenshots on every commit, compares them against baselines, and highlights meaningful layout or styling changes. This helps teams catch visual defects early with minimal review effort.

Key capabilities include:

- Automated visual regression: Side-by-side snapshot comparisons that flag layout shifts, style changes, and broken UI components.

- AI-driven noise reduction: Percy’s Visual AI Engine automatically ignores dynamic content, animations, and anti-aliasing to reduce false positives and surface only meaningful UI changes.

- Accurate Visual Reviews: Percy’s Visual Review Agent highlights key UI changes with bounding boxes and concise summaries, speeding up diff review.

- No-code visual monitoring: Scan and monitor large sets of URLs across real browsers and devices, and compare environments such as staging vs. production.

- Broad integrations: Works with major test frameworks, CI tools, Storybook, and Figma for shift-left visual testing.

App Percy is BrowserStack’s AI-powered visual testing platform for native mobile apps on iOS and Android. It runs tests on a cloud of real devices to ensure pixel-perfect UI consistency, while AI-driven intelligent handling of dynamic elements helps reduce flaky tests and false positives.

Pricing

- Free plan with up to 5,000 screenshots/month.

- Paid plans starting at $199/month, with enterprise options available.

Conclusion

The strategies listed above can greatly assist and optimize visual testing procedures.

Designing and executing visual tests successfully centers on determining the needs of the application and, of course, of the target customer.

If the aim is only to make the application available on an iPhone 7 running iOS 15.6 with the Google Chrome browser, then there is no real need to carry out testing on a large scale across various device and OS combinations.

In that case, it would be best to use automation and parallelization to increase the efficiency of visual testing, while coverage and testing across real devices would not need to be taken into consideration. To conclude, when planning the visual testing of an application, the chosen technique needs to be bespoke, and applying some of the strategies described above should greatly aid in building robust visual tests.
