Before getting into the nitty-gritty of designing and running successful software tests, one must be acquainted with the basics. In this piece, we’ll break down the major software testing techniques – ones that must be included in the vast majority of test suites.

What are Software Testing Techniques?

Software testing techniques are methods used to check whether a software program works properly and meets its goals, and to assess its overall quality.

These techniques range from manual testing, where testers exercise the software by hand, to automated testing, where tools and scripts run the checks. Each method helps find bugs, confirm that features work, or assess how well the software handles pressure. By using a mix of these methods, testers spot issues early, create a better user experience, and ensure the software works as intended.

Common Software Testing Types (with Examples)

Note: For many of these testing types to yield accurate, actionable results, they must be executed in environments that replicate production as closely as possible. In other words, the software needs to be tested on real browsers, devices, and operating systems: the ones real people use to access your site or app.

If you don’t have access to an on-premise device lab that is regularly updated with the latest technology, consider giving BrowserStack’s real device cloud a try.

Black Box Testing

In Black Box Testing, a tester uses the software to check that it functions correctly in every situation. However, the tester remains completely unaware of the software’s internal design, back-end architecture, and components, and judges it only by its inputs and outputs.

The intent of black-box testing is to find performance errors/deficiencies, missing functions, initialization bugs, and glitches that show up when the software accesses an external database.

Example: Input values are sorted into classes/groups such that the system is expected to respond to every value in a class in the same way. So, you can test one representative value from each class instead of testing every possible value. This is called Equivalence Class Partitioning (ECP) and is a black-box testing technique.
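
To make this concrete, here is a minimal Python sketch. It assumes a hypothetical `classify_age` function that accepts ages from 1 to 100; the function, its return values, and the chosen representatives are illustrative only. The test treats the function as a black box: it looks at inputs and outputs, never at the implementation.

```python
def classify_age(age: int) -> str:
    """Hypothetical system under test: accepts ages 1-100 inclusive."""
    if age < 1:
        return "too_low"
    if age > 100:
        return "too_high"
    return "valid"


# One representative input per equivalence class, with the expected outcome.
# Any other value from the same class is expected to behave the same way.
PARTITIONS = {
    "below_range": (-5, "too_low"),
    "in_range": (42, "valid"),
    "above_range": (250, "too_high"),
}


def run_partition_tests() -> dict:
    """Return {class_name: passed} for each equivalence class."""
    return {
        name: classify_age(value) == expected
        for name, (value, expected) in PARTITIONS.items()
    }


if __name__ == "__main__":
    for name, passed in run_partition_tests().items():
        print(f"{name}: {'pass' if passed else 'FAIL'}")
```

Three test inputs stand in for the whole input space here. That is the trade-off ECP makes: fewer test cases, on the assumption that each class really is handled uniformly.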

White Box Testing

In White Box Testing, testers verify systems they are deeply acquainted with, sometimes even ones they have created themselves. No wonder white box testing has alternate names like open box testing, clear box testing, and transparent box testing.

White box testing is used to analyze systems, especially when running unit, integration, and system tests.

Example: Test cases are written to ensure that every statement in a software’s codebase is tested at least once. This is called Statement Coverage and is a white box testing technique.
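
As a rough illustration of Statement Coverage, the sketch below records which lines of a small function actually run for a given test input. The `shipping_fee` function and the `executed_lines` helper are hypothetical names made up for this example; it uses Python's built-in `sys.settrace` hook, whereas real projects would normally rely on a dedicated coverage tool.

```python
import sys


def shipping_fee(total: float, express: bool) -> float:
    # Hypothetical function under test.
    fee = 5.0
    if total >= 50:
        fee = 0.0
    if express:
        fee += 10.0
    return fee


def executed_lines(func, *args) -> set:
    """Run func(*args) and return the line numbers of func that executed."""
    code = func.__code__
    hit = set()

    def tracer(frame, event, arg):
        # Record 'line' events only for the function under test.
        if frame.f_code is code and event == "line":
            hit.add(frame.f_lineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # restore whatever tracer was active before
    return hit


# This test skips both `if` bodies, so two statements never execute.
partial = executed_lines(shipping_fee, 10.0, False)
# This test enters both `if` bodies, so every statement executes.
full = executed_lines(shipping_fee, 60.0, True)

if __name__ == "__main__":
    print(f"statements hit by first test:  {len(partial)}")
    print(f"statements hit by second test: {len(full)}")
    print(f"statements missed by first test: {len(full - partial)}")
```

Note that the second test alone executes every statement, yet it never exercises the case where a condition is false. Statement coverage is a useful minimum, not proof that every outcome has been tested.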

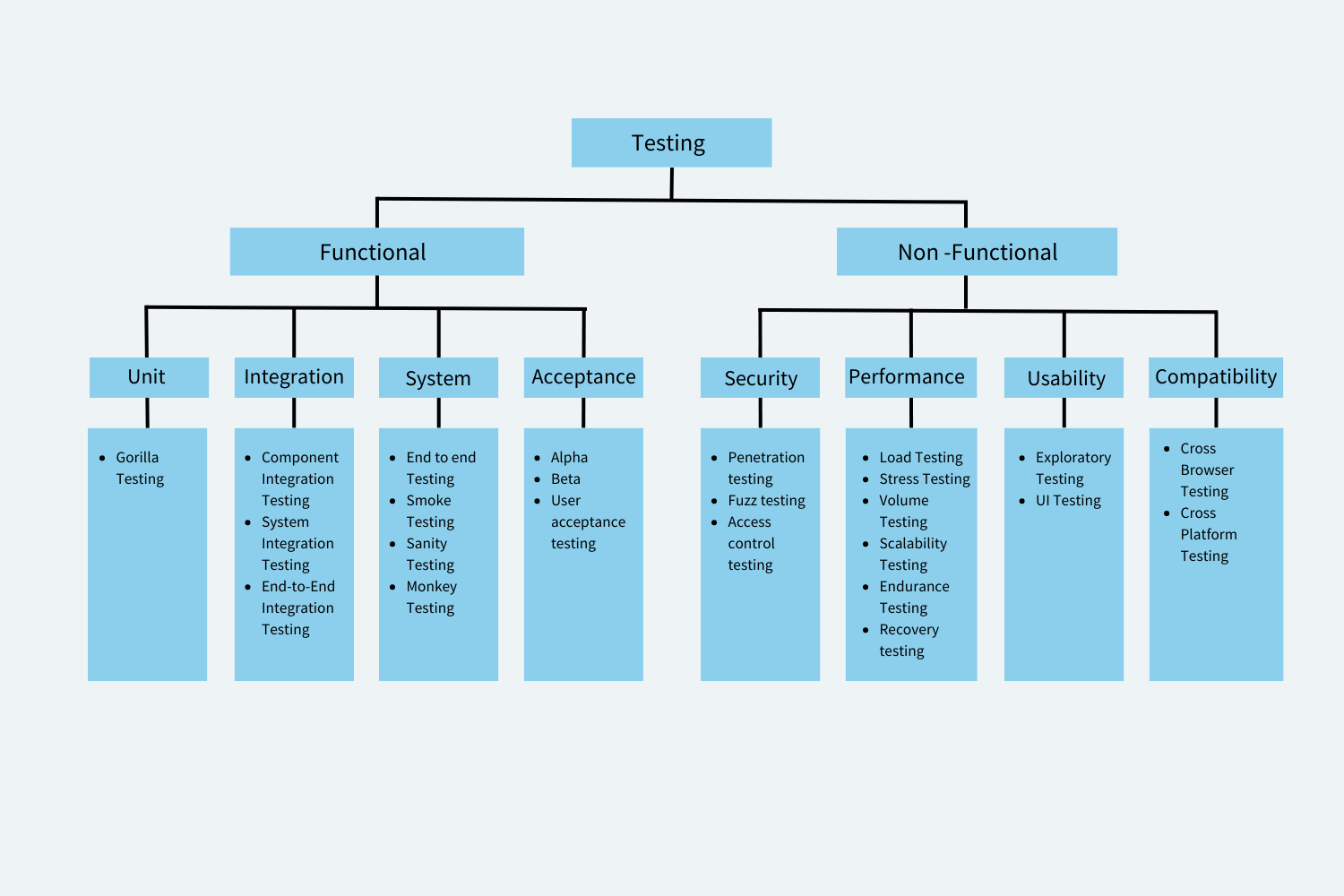

Functional Testing

Functional tests are designed and run to verify every function of a website or app. They check that each function works in line with the expectations set out in the corresponding requirements documentation.

Example: Tests are created to check the following scenario – when a user clicks “Buy Now”, does the UI take them directly to the next required page?

There are multiple sub-sets of functional testing, the most prominent of which are:

- Unit Testing: Each individual component is tested before the developer pushes it for merging. Unit tests are created and run by the devs themselves.

- Integration Testing: Units are integrated and tested to check that they work together seamlessly.

- System Testing: All system elements, hardware and software, are tested together to check that the complete system works according to its specified requirements. Regression Testing, which re-runs existing tests to confirm that recent changes have not broken working functionality, is commonly performed at this level before every release.

- Acceptance Testing: Often called User Acceptance Testing, this sub-set of testing puts the software in the hands of a control group of potential users and notes their feedback. It’s the app’s first test in a truly real-world scenario.

Non-Functional Testing

It’s in the name. Non-functional tests check the non-functional attributes of any software: performance, usability, reliability, security, responsiveness, etc. These tests establish software quality and performance in real user conditions.

Example: Tests are created to simulate high user traffic so as to check if a site or app can handle peak traffic hours/days/occasions.

The main sub-sets of non-functional testing are:

- Performance Testing: Software is tested for how efficiently and resiliently it handles increased traffic or user activity. Load testing, stress testing, spike testing, and endurance testing are different ways of verifying this resilience.

- Compatibility Testing: Is your software compatible with different browsers, browser versions, devices (mobile and desktop), operating systems and OS versions? Can a Samsung user play with your app as easily as an iPhone user? Cross Browser Compatibility tests help you check that.

- Security Testing: Conducted from the POV of a hacker/attacker, security tests look for gaps in security mechanisms that could be exploited for data theft or for making unauthorized changes.

- Usability Testing: Usability tests are run to verify if a software can be used without hassle by actual target users. So, you put it in the hands of a few prospective users, in order to get feedback before its actual deployment.

- Visual Testing: Visual tests check if all UI elements are rendering as expected, with the right shape, size, color, font, and placement. The question these tests answer is: Does the software look the way the requirements say it should?

Percy and App Percy by BrowserStack allow you to run Automated Visual Regression Tests using Visual Diff.

- Accessibility Testing: Accessibility testing evaluates whether the software can be used by people with disabilities. Its goal is to optimize apps so that these users can perform all key actions without external assistance.

- Responsive Testing: Responsive tests verify that the app/site renders well on the screen sizes and resolutions offered by different devices: mobiles, tablets, desktops, etc. A site’s responsive design is massively important since most people access the internet from their mobile devices and expect software to work without hassle on their personal endpoints.

Types of Software Testing Techniques

Below are 2 types of Software Testing Techniques:

Static Testing

This technique checks the software’s code or documentation without running it. For example, code reviews allow developers to spot errors before executing the software.
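
As a small illustration of checking code without running it, the sketch below parses a piece of source text with Python's standard `ast` module and flags bare `except:` clauses, a pattern reviewers often look for. The `SOURCE` snippet and the `find_bare_excepts` helper are made up for this example; the code being checked is never executed.

```python
import ast

# Sample code to inspect. It is only parsed, never run.
SOURCE = '''
def load(path):
    try:
        return open(path).read()
    except:
        return None
'''


def find_bare_excepts(source: str) -> list:
    """Return line numbers of bare `except:` clauses found in the source text."""
    tree = ast.parse(source)
    return [
        node.lineno
        for node in ast.walk(tree)
        # A handler with no exception type is a bare `except:`.
        if isinstance(node, ast.ExceptHandler) and node.type is None
    ]


if __name__ == "__main__":
    for lineno in find_bare_excepts(SOURCE):
        print(f"line {lineno}: bare 'except:' hides unexpected errors")
```

Linters and other static analysis tools work on the same principle at a much larger scale, complementing human code reviews.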

Dynamic Testing

Dynamic testing means running the software to identify any bugs or issues. It tests the application’s behavior and performance through various methods, such as manual and automated testing. For instance, a tester might use automated scripts to verify that a login feature works correctly under different conditions.
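
The login example could be sketched as an automated script like the one below, using Python's standard `unittest` module. The `login` function and the in-memory `USERS` store are hypothetical stand-ins for a real application; the point is only that the code is actually executed under several conditions and its behavior is checked.

```python
import unittest

# Hypothetical in-memory user store, for illustration only.
# A real system would never keep plain-text passwords.
USERS = {"alice": "s3cret!"}


def login(username: str, password: str) -> bool:
    """Hypothetical login check: known user, matching non-empty password."""
    return USERS.get(username) == password and password != ""


class LoginTests(unittest.TestCase):
    def test_login_conditions(self):
        cases = [
            ("alice", "s3cret!", True),   # valid credentials
            ("alice", "wrong", False),    # wrong password
            ("bob", "s3cret!", False),    # unknown user
            ("alice", "", False),         # empty password
        ]
        for username, password, expected in cases:
            # subTest reports each condition separately if one fails.
            with self.subTest(username=username, password=password):
                self.assertEqual(login(username, password), expected)


if __name__ == "__main__":
    unittest.main()
```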

Different Software Testing Techniques

Here are the different types of Software Testing Techniques:

- Equivalence Partitioning: In this technique, testers divide input data into valid and invalid groups to minimize the number of test cases while ensuring coverage. For example, for an age input of 1-100, the valid partition is 1-100; the invalid partitions are <1 and >100.

- Boundary Value Analysis: BVA focuses on testing the limits of input ranges, where errors are more likely to occur. For example, for the age range of 1-100, test values would include 0, 1, 100, and 101.

- Decision Table Testing: This technique uses a table to outline various input combinations and their expected outcomes, ensuring that all scenarios are accounted for. For example, in a discount system, one would test combinations of “Customer Type” (Regular/Premium) and “Purchase Amount” to confirm the correct discounts are applied.

- State Transition Testing: This approach is vital for systems where output depends on the current state, ensuring that state transitions occur as expected. For instance, in a login system, you would test transitions from “Logged Out” to “Logged In” and the handling of “Incorrect Password” scenarios.

- Use Case Testing: This technique evaluates the software against real-world scenarios to ensure it fulfills user requirements. For example, in an e-commerce platform, scenarios like “Add Item to Cart” and “Checkout” would be tested.

- Error Guessing: This method leverages the tester’s intuition to pinpoint areas in the software likely to contain defects. For example, one might focus on input fields that accept user data, anticipating potential issues with special characters.

- All-Pair Testing Technique: This technique ensures that every possible pair of input parameter values appears in at least one test case, achieving broad coverage with fewer test cases than exhaustive testing. For example, if a system has three settings (A, B, C), each with two options (On, Off), four well-chosen test cases cover every pair of settings and options, compared with eight for all combinations.

- Cause-Effect Technique: Testers map input causes to their expected output effects to validate all relationships. For example, in a loan application, inputs like “Income” and “Credit Score” influence the “Loan Approval” decision.

- Risk Coverage: This approach prioritizes testing efforts on software areas that present the highest risk of failure. For instance, critical features such as payment processing would be tested more rigorously than less impactful features.

- Statement Coverage: This ensures that every executable statement in the code is run at least once during testing to verify basic functionality. For example, in an order processing function, all statements should be executed by at least one test to confirm they perform as intended.

- Branch Coverage: This method guarantees that all decision points in the code are tested, covering both true and false outcomes. For example, for an if-else statement, you would test both the true and false conditions to validate all pathways.

- Path Coverage: Testers validate that all possible execution paths through the code are exercised during testing to ensure comprehensive coverage. For instance, in a loop, you would test scenarios in which the loop executes and where it exits to cover all logical paths.
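
To illustrate one of the techniques above, the sketch below works through the All-Pair example with three settings (A, B, C) and two options each. The four-case suite is hand-picked for this illustration, and the helper names are hypothetical; the code simply verifies that every pair of setting values shows up in at least one test case.

```python
from itertools import combinations, product

SETTINGS = ("A", "B", "C")
OPTIONS = ("On", "Off")

# Hand-picked pairwise suite: 4 test cases instead of all 8 combinations.
PAIRWISE_SUITE = [
    {"A": "On", "B": "On", "C": "On"},
    {"A": "On", "B": "Off", "C": "Off"},
    {"A": "Off", "B": "On", "C": "Off"},
    {"A": "Off", "B": "Off", "C": "On"},
]


def required_pairs() -> set:
    """Every (setting, option, setting, option) pair that must be covered."""
    return {
        (s1, o1, s2, o2)
        for s1, s2 in combinations(SETTINGS, 2)
        for o1, o2 in product(OPTIONS, repeat=2)
    }


def covered_pairs(suite) -> set:
    """Pairs of setting values that appear together in at least one test case."""
    return {
        (s1, case[s1], s2, case[s2])
        for case in suite
        for s1, s2 in combinations(SETTINGS, 2)
    }


if __name__ == "__main__":
    missing = required_pairs() - covered_pairs(PAIRWISE_SUITE)
    exhaustive = len(list(product(OPTIONS, repeat=len(SETTINGS))))
    print(f"exhaustive cases: {exhaustive}, pairwise cases: {len(PAIRWISE_SUITE)}")
    print(f"uncovered pairs: {len(missing)}")
```

The saving is modest at this size, but it grows quickly as more settings and options are added, which is why pairwise suites are usually generated by a tool rather than written by hand.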

Best Practices for Software Testing (no matter the Technique)

Whatever software testing techniques your project might require, using them in tandem with a few best practices helps your team get better results. Do the following, and your tests have a better shot at yielding accurate results that provide a solid foundation for making technical and business decisions.

- Use REAL browsers, devices, and OSes to test your software’s real-world efficacy. We’ve written plenty on why emulators and simulators don’t come close to replicating real user conditions.

Follow-up Read: Will the iOS emulator on PC (Windows & Mac) solve your testing requirements?

Replace Android Emulator for PC (Mac & Windows) with Real Devices

These articles reveal why you can never get 100% accurate results with emulators and simulators. You need to run the software on the actual user devices used to access your app. Real device testing is non-negotiable, and the absolute primary best practice you should optimize your tests for.

- Prioritize test planning. You’ll always encounter surprises in the SDLC, but as far as possible, create a formal plan with inputs from all stakeholders that serves as a clear roadmap for testing. Clear documentation is essential to prevent miscommunication. Ensure that the plan is specific, measurable, achievable, relevant, and time-bound.

- Start testing as early as possible. Use Shift Left Testing – pushing tests to earlier stages in the pipeline. This lets you identify and resolve bugs as early as possible in the development process, which improves software quality and reduces time spent resolving issues.

- Use automation as widely as possible. Automation reduces the time required to execute tests, as well as the effects of human error. Automated test runs don’t get tired, and they repeat the same steps consistently. If you can afford the upfront investment (hiring the right testers, purchasing the right tools), automation testing tends to pay for itself in software quality and business ROI.

Read More: How to Create Test Cases for Automated tests

- Establish the right QA metrics to accurately evaluate your test suites. The best QA engine in the world will mean nothing if you don’t establish benchmarks to declare success or failure.

Read More: Essential Metrics for the QA Process

- Create test cases that test one feature each. If tests are isolated and independent, they are easier to reuse and maintain.

- Keep a close eye on test coverage. Each test suite should be able to cover significant swathes of the software under test so that you don’t have to keep building and running new tests with every iteration. The idea is to achieve maximum test coverage.

Conclusion

If you’re googling “testing techniques types”, “testing techniques in manual testing” or “test design techniques with examples”, start here. You’ll get your one or two-line introductions, and then move on to relevant, linked articles that dive deeper into each individual technique.

Once you’re acquainted with the basics, why not test your testing chops on real browsers & devices for free? Try your hand at BrowserStack Test University, a free resource that offers access to real devices for a hands-on learning experience. Sign up and master the fundamentals of software testing with fine-grained, practical, and self-paced online courses.