Our Breakpoint 2026 speaker spotlight series continues with Brittany Stewart, Senior QA Specialist and Engineer at QualityWorks Consulting Group. Brittany brings a perspective you don't often see in this space: she came into testing through design, and that crossover shapes everything about how she works.

She's spoken at TestBash World, SauceCon, and Agile Testing Days 2025 (where her session ranked among the 10 highest-rated talks), and has documented what AI-augmented workflows actually look like in practice on her blog at brittanystewart.tech. At Breakpoint 2026, she's presenting "Centaur Mode: A QA Engineer's Honest AI Playbook."

You came into QA from a creative and design background, which is not the usual path. How has that shaped the way you approach testing?

Design trained me to care about the person on the other side of the screen first. So when I look at a test, I'm not just asking "does this pass?" I'm asking "does this actually protect the user's experience?" That shift matters more now that AI is in the workflow.

Right now I'm deep in test automation for a large enterprise application, building out an AI-augmented workflow that covers test scenario generation, smart test healing, and scoring the quality of AI output. I'm not trying to automate everything. I'm trying to make the repetitive work faster so human judgment stays sharp where it actually matters.

Can you give us a sneak peek into your Breakpoint session? What's the one thing you want every attendee to walk away with?

My session is called "Centaur Mode: A QA Engineer's Honest AI Playbook." I built this framework because I kept watching QA folks fall into one of two traps with AI. Either they refused to touch it and got left behind, or they handed everything over and started shipping whatever the model generated. Neither works. What I want people to understand is that collaborating with AI is a skill on its own, and it's one QA engineers can actually get good at.

Centaur Mode is the middle path. Picture a centaur. Human on top, horse on the bottom, working together, but the human is steering. That's the mental model I want people to leave with. AI can be your advantage instead of your replacement, but only if you stay in control of the judgment calls. You don't need to be a prompt engineer to pull this off. You need a repeatable process, clear handoffs, and the discipline to review every output.

What's a belief you had about AI in testing a year ago that you've since changed your mind on?

A year ago I thought the goal of AI in testing was to get it to do more of the work. More generation, more coverage, more output. I spent a lot of energy there, and honestly, that was the easy part.

What I didn't see coming was the harder part: knowing when to trust the output and when not to, and the mental capacity it takes to properly evaluate what AI gives you back. That's a different job than what QA has traditionally done, and I don't think we talk about it enough.

AI does get better the more you use it. The tests start matching your patterns, the structure looks right, but every now and then it will inject something that's not actually relevant to what you asked for. You have to catch it. Generation is fast and cheap for everyone now. Judgment and evaluation are the real job, and that takes mind power, experience, and knowledge. As humans in this loop, we have to protect that part.

"Centaur Mode" is a pretty memorable framework. Where does the human absolutely have to stay in control, and where is it actually okay to let AI take the wheel?

The human has to own intent and acceptance. What are we testing, why does it matter, and is this output actually correct and meeting business needs? Those three questions belong to you. Hand them off and you're not doing QA anymore. You're just shipping whatever the model felt like generating that day.

Where I let AI take the wheel is the in-between work. Drafting a first pass of scenarios from a ticket. Setting up the starter structure for feature files and step definitions. Self-healing a selector that drifted. Summarizing a failed run and explaining root cause. The repetitive, pattern-heavy stuff where a fast draft beats a blank page. But every handoff back to me goes through a quality check. That's the whole point of Centaur Mode.

You're based in Jamaica, you run a design studio, and you work in one of the fastest-moving corners of tech. What does "switching off" actually look like for you?

It looks like being present with my husband and my son. My son is in Grade 3 and he does not care about my test suite, but he's very into video games, crafts, and tech. So we play games together and explore building our own games. We also take trips and spend time in nature whenever we can. "Touch some grass," as my son would say. That's grounding in the best way.

I still make time for the design and marketing work I love too. Switching off for me isn't really about doing nothing. It's about building something that matters outside of work.

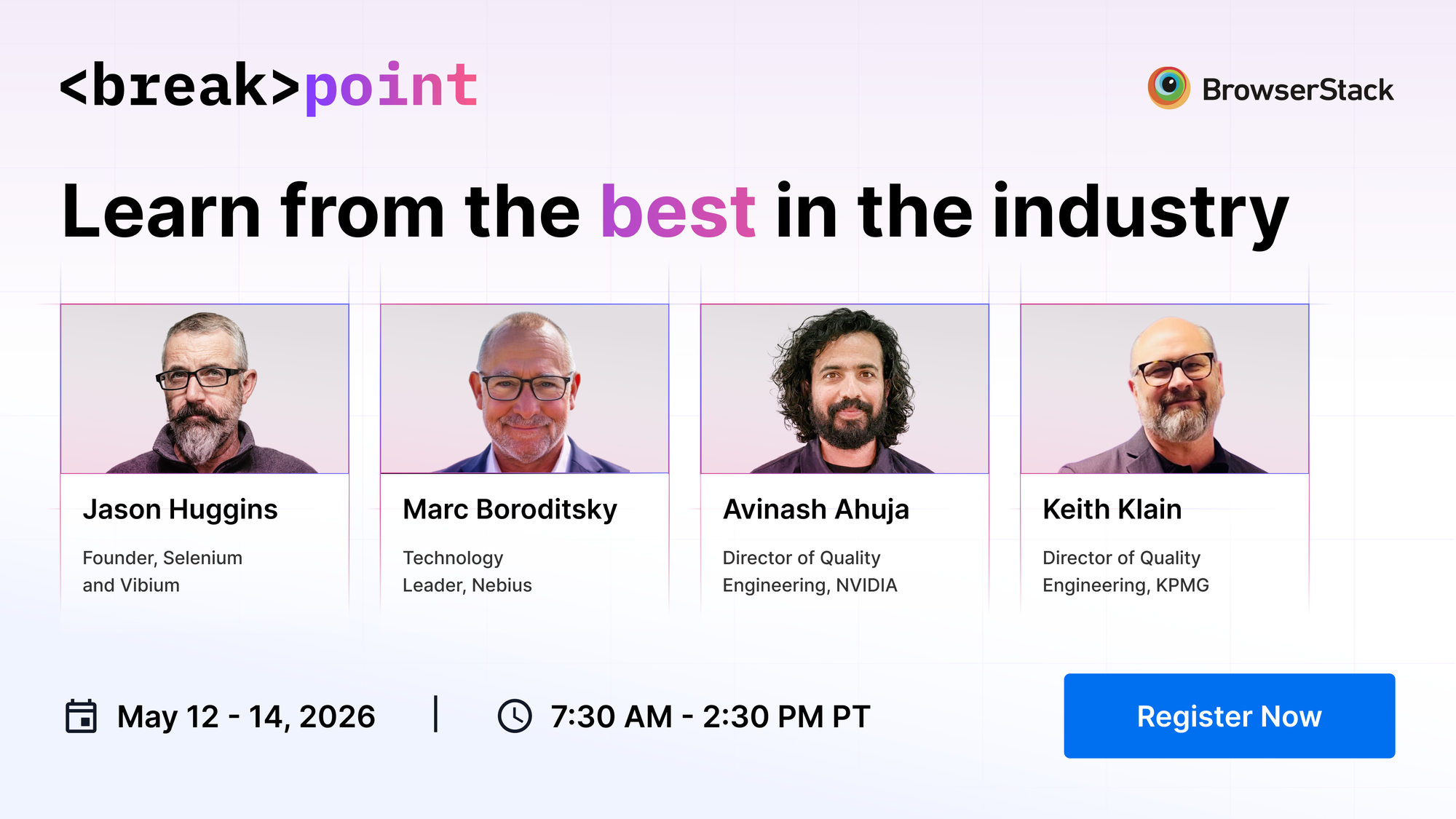

Brittany is one of several practitioners bringing grounded, real-world perspectives to Breakpoint 2026.

She'll be joined by Jason Huggins (Founder, Selenium and Vibium), Marc Boroditsky (Technology Leader, Nebius), Avinash Ahuja (Manager Solution Architect, NVIDIA), Keith Klain (Director of Quality Engineering, KPMG), Lena Nyström (CEO, Test Scouts AB), and more. It's a lineup built for practitioners who want signal over noise. Register here to catch her session.