Software testing is an important part of the software development process. It helps ensure that the application works as intended, meets user expectations, and ships with as few defects as possible.

Overview

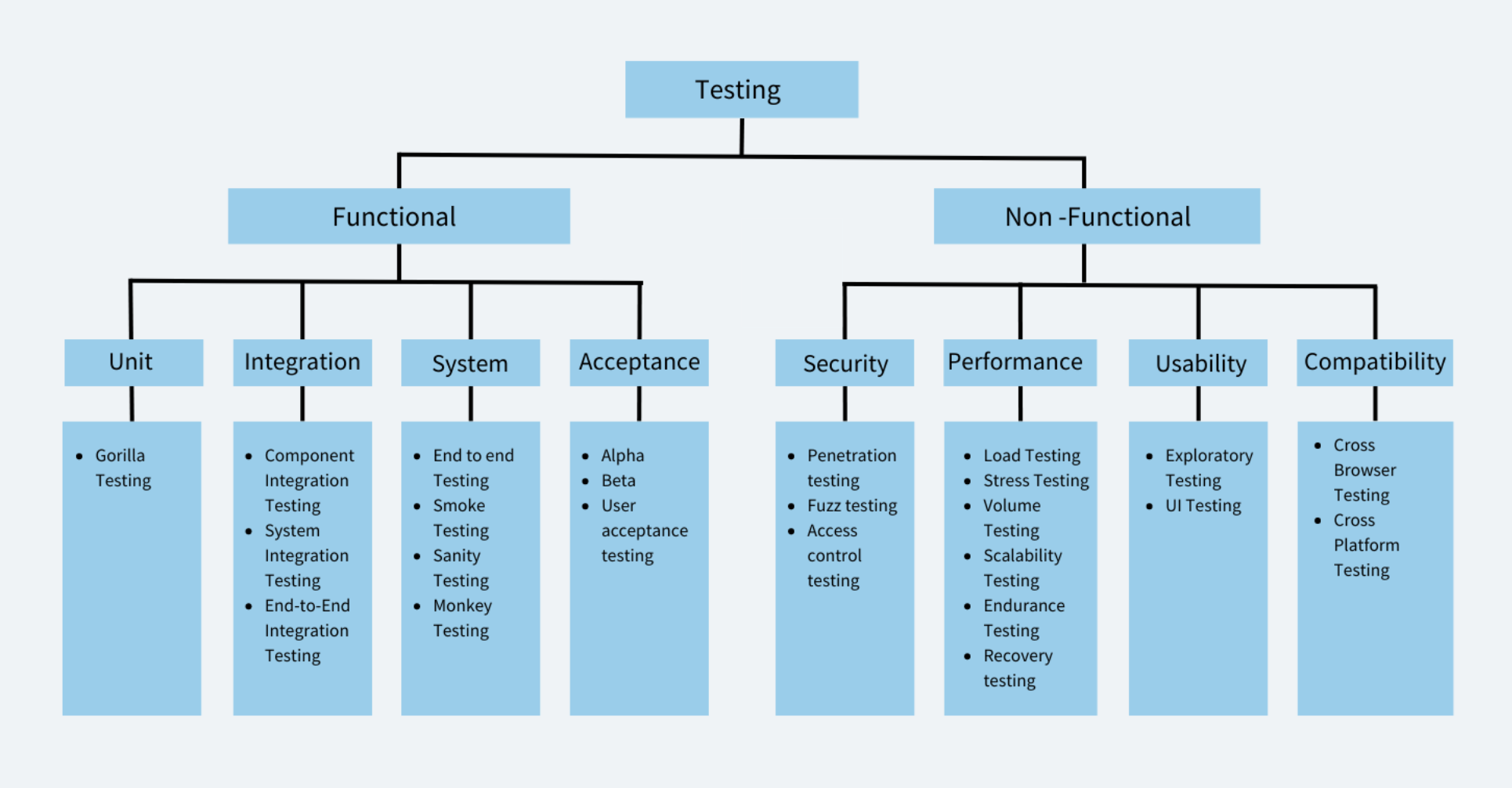

Classification of Different Types of Software Testing

- Functional Testing

- Non-Functional Testing

Different Types of Software Testing

- Unit Testing

- Integration Testing

- System Testing

- Acceptance Testing

- Security Testing

- Performance Testing

- Usability Testing

- Compatibility Testing

- Cross-browser Testing

- Regression Testing

- Recovery Testing

- API Testing

- Stress Testing

- Load Testing

- Exploratory Testing

This article covers the basics of software testing, its various types, techniques, and the role of manual and automated testing.

What is Software Testing?

Software testing is the process of evaluating a software application or system to identify defects, errors, or potential issues before it is released to end users. The primary goal of software testing is to ensure that the software meets the specified requirements, is functional and reliable, and performs as expected.

Testing can be performed manually or with automation, and it can follow different methodologies such as black-box testing, white-box testing, and gray-box testing. During the testing process, testers may combine several testing types, such as functional testing, performance testing, security testing, and usability testing.

Software testing helps improve the overall quality of the software product, reduce development costs, and prevent potential issues that could arise after the software is released to users.

Principles of Software Testing

Here are the key principles of software testing that guide effective and reliable test practices:

- Testing Shows the Presence of Defects: The goal of testing is to find bugs in the software, not to prove that there are none. Even after extensive testing, some bugs may still remain undetected.

- Exhaustive Testing is Impossible: It is not practical to test every possible input or scenario in an app. Instead, testing should be focused on the most important and high-risk areas.

- Early Testing Saves Time and Cost: Detecting and fixing defects in the early stages of development is more efficient and less expensive.

- Defect Clustering: A small number of modules usually contain most of the defects. Prioritizing testing in these areas increases the chances of finding critical bugs.

- Pesticide Paradox: Repeating the same tests will eventually stop revealing new defects. To find fresh issues, test cases must be regularly reviewed and updated.

- Testing is Context-Dependent: Different software projects require different testing approaches based on their goals, risks and users. There is no single testing approach that works for every project.

- Absence of Errors Fallacy: Bug-free software is not necessarily successful if it fails to meet user expectations. Testing should ensure the software meets both functional and business requirements.


Classification of Different Types of Software Testing

Testing is divided into two types –

- Functional Testing

- Non Functional Testing

Functional Testing

Functional testing focuses on verifying the functionality of the software system. It is done to ensure that the system works as intended and meets the functional requirements specified by the stakeholders. Functional testing is concerned with what the software system does, rather than how well it does it. Some examples of functional testing include unit testing, integration testing, and acceptance testing.


Example of Functional Testing:

Let’s say you’re testing a login page of a website. The functional requirements of this login page may include:

- The user should be able to enter their username and password.

- The system should authenticate the user’s credentials and grant access if the credentials are correct.

- The system should deny access if the user’s credentials are incorrect.

To test the functionality of this login page, you may perform the following functional tests:

- Enter a valid username and password and verify that the user is able to log in successfully.

- Enter an invalid username and password and verify that the user is not able to log in and that an appropriate error message is displayed.

- Verify that the login page displays correctly on different browsers and devices.

- Verify that the password field is secure and does not display the entered password.

- Verify that the “Forgot Password” feature works correctly and allows users to reset their passwords.
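The first two checks in the list above can be sketched as automated functional tests. This is a minimal illustration, not a real authentication system: the `login` function, the `USERS` store, and the error message text are all hypothetical names invented for the example.

```python
from dataclasses import dataclass

# Hypothetical in-memory user store. A real system would query a database
# and compare password hashes rather than plain-text passwords.
USERS = {"alice": "S3cret!pass"}


@dataclass
class LoginResult:
    success: bool
    message: str


def login(username: str, password: str) -> LoginResult:
    """Grant access only when the username exists and the password matches."""
    if username in USERS and USERS[username] == password:
        return LoginResult(True, "Welcome, " + username)
    return LoginResult(False, "Invalid username or password")


def test_valid_credentials_grant_access():
    assert login("alice", "S3cret!pass").success


def test_invalid_credentials_are_rejected_with_message():
    result = login("alice", "wrong-password")
    assert not result.success
    assert result.message == "Invalid username or password"
```

Each test maps to one functional requirement, so a failing test points directly at the requirement that is no longer met.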


Non Functional Testing

Non-functional testing focuses on evaluating the non-functional aspects of the software system. This type of testing includes testing for performance, usability, reliability, scalability, and security. Non-functional testing is concerned with how well the software system performs its functions, rather than what it does. Some examples of non-functional testing include load testing, stress testing, usability testing, and security testing.

Example of Non functional Testing:

Let’s say you’re testing the performance of a website. The non-functional requirements of this website may include:

- The website should be able to handle a certain number of concurrent users.

- The website should load pages within a certain amount of time.

- The website should be responsive and display correctly on different devices and screen sizes.

- The website should be accessible to users who are differently abled.

- The website should be secure and protect user data.

Types of Functional Testing

Here are different types of Functional Testing:

- Unit Testing

- Integration Testing

- System Testing

- Acceptance Testing

1. Unit Testing

Unit testing is a software testing type in which individual units or components are tested in isolation from the rest of the system to ensure that they work as intended. A unit is the smallest testable part of a software application that performs a specific function or behavior, such as a method, a function, a class, or even a module. Unit tests can be run individually or in groups. They are typically written by developers to check the correctness of their code and ensure that it meets the requirements and specifications.

Example of Unit Testing:

A developer has written a password input field with its validation rules (8 characters long, must contain special characters). The developer then writes unit tests for this one specific field, for example tests that input only 7 characters, input no special characters, or leave the field empty.
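The password field example can be sketched as a small set of unit tests. This is an illustration under stated assumptions: the function name `is_valid_password` is hypothetical, "8 characters long" is read as a minimum length, and "special characters" is taken to mean ASCII punctuation.

```python
import string

MIN_LENGTH = 8  # assumption: "8 characters long" is treated as a minimum


def is_valid_password(password: str) -> bool:
    """Return True if the password is long enough and has a special character."""
    has_special = any(ch in string.punctuation for ch in password)
    return len(password) >= MIN_LENGTH and has_special


def test_rejects_seven_characters():
    assert not is_valid_password("abc!123")  # only 7 characters


def test_rejects_missing_special_character():
    assert not is_valid_password("abcd1234")  # 8 characters, none special


def test_rejects_empty_field():
    assert not is_valid_password("")


def test_accepts_valid_password():
    assert is_valid_password("abcd!234")  # 8 characters, one special
```

A test runner such as pytest would discover and run the `test_` functions. Each test exercises the validation logic on its own, without a browser, server, or database.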


Advantages of Unit Testing

- Early detection of Bugs

- Simplifies Debugging Process

- Encourages Code Reusability

- Improves Code Quality

- Enables Continuous Integration

Types of Unit Testing

a. Gorilla Testing

Gorilla testing is a software testing technique where the tester performs testing of a particular module or component of the software system rigorously and extensively to identify any issues or bugs that may arise. In other words, Gorilla testing focuses on testing a single module or component in depth to ensure that it can handle high loads and perform optimally under extreme conditions.

Example of Gorilla Testing

Testing a particular unit/module extensively to ensure that it handles heavy load.

Advantages of Gorilla Testing

- Identify potential bottlenecks or weaknesses in a particular module

- Confirms that the module is capable of handling high loads

- Helps identify issues or bugs that may be missed by other testing techniques

2. Integration Testing

Integration testing is a testing type in which different modules or components of a software application are tested together as a group to ensure that they work as intended and are integrated correctly. The main aim of integration tests is to identify issues that might come up when multiple components work together. It ensures that individual code units work together cohesively as a whole.


Integration testing can be further broken down to:

- Component Integration Testing: This type of testing focuses on testing the interactions between individual components or modules.

- System Integration Testing: This type of testing focuses on testing the interactions between different subsystems or layers of the software application.

- End-to-End Integration Testing: This type of integration testing focuses on testing the interactions between the entire software application and any external systems it depends on.

Example of Integration Tests: A software application consists of a web-based front-end, a middleware layer that processes data, and a back-end database that stores data. Integration tests would verify if the data submitted in the front end is processed by the middleware and then stored by the backend database.
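The front-end, middleware, and database flow described above can be sketched as a single integration test. This is a simplified illustration: the `orders` table, the `process_order` function, and the form field names are hypothetical, and an in-memory SQLite database stands in for the real back end.

```python
import sqlite3


def create_database() -> sqlite3.Connection:
    """Back end: an in-memory SQLite database standing in for the real one."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (item TEXT NOT NULL, quantity INTEGER NOT NULL)"
    )
    return conn


def process_order(conn: sqlite3.Connection, form_data: dict) -> None:
    """Middleware: normalize the submitted data, then store it."""
    item = form_data["item"].strip().lower()
    quantity = int(form_data["quantity"])
    conn.execute(
        "INSERT INTO orders (item, quantity) VALUES (?, ?)", (item, quantity)
    )
    conn.commit()


def test_submitted_data_reaches_database():
    conn = create_database()
    # Front end: the raw strings a web form would submit.
    process_order(conn, {"item": "  Keyboard ", "quantity": "2"})
    rows = conn.execute("SELECT item, quantity FROM orders").fetchall()
    assert rows == [("keyboard", 2)]
```

Unlike a unit test, this test passes only if the middleware and the database agree on the data format, which is the kind of defect integration testing is meant to surface.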

Advantages of Integration testing:

- Early Detection of Issues

- Improved Software Quality

- Increased Confidence in the Software

- Reduced Risk of Bugs in Production

- Better Collaboration Among Team Members

- More Accurate Estimation of Project Timelines

3. System Testing

System testing is a testing type that tests the entire software application as a whole and ensures that the software meets its functional and non-functional requirements. System testing is typically performed after integration testing. During system testing, testers evaluate the software application’s behavior in various scenarios and under different conditions, including normal and abnormal usage, to ensure that it can handle different situations effectively.

Example of System Testing

A software application consists of a web-based front-end, a middleware layer that processes data, and a back-end database that stores data. The system test for this scenario would involve the following steps:

- The user accesses the front-end interface and submits an order, including item details and shipping information.

- The middleware layer receives the order and processes it, including verifying that the order is valid and the inventory is available.

- The middleware layer sends the order information to the back-end database, which stores the information and sends a confirmation message back to the middleware layer.

- The middleware layer receives the confirmation message and sends a response back to the front-end indicating that the order has been successfully processed.

Advantages of System Testing

- Identifies and resolves defects or issues that may have been missed during earlier stages of testing.

- Evaluates the software application’s overall quality, including its reliability, maintainability, and scalability.

- Increases user satisfaction

- Reduces risk

Types of System Testing

a. End to End Testing

End-to-end testing is a testing methodology that tests the entire software system from start to finish, simulating a real-world user scenario. The goal of end-to-end testing is to ensure that all the components work together seamlessly and meet the desired business requirements. The terms system testing and end-to-end testing are often used interchangeably, but they are different types of testing.

System testing is a type of testing that verifies the entire system or software application is working correctly as a whole. This type of testing includes testing all the modules, components, and integrations of the software system to ensure that they are working together correctly. The focus of system testing is to check the system’s behavior as a whole and verify that it meets the business requirements.

End-to-end testing, on the other hand, is a type of testing that verifies the entire software application from start to finish, including all the systems, components, and integrations involved in the application’s workflow. The focus of E2E testing is on the business processes and user scenarios to ensure that they are working correctly and meet the user requirements.

Example of End to End Testing

E-commerce transaction: End-to-end testing of an e-commerce website involves testing the entire user journey, from product selection to payment, shipping, and order confirmation.

Advantages of End to End testing

- Allows you to test real world scenarios

- Comprehensive testing

- Improves quality

b. Monkey Testing

Monkey testing is a testing type where the tester tests in a random manner with random inputs to analyze if the application breaks. The objective of monkey testing is to verify if an application crashes by giving random input values. There are no special test cases written for monkey testing.
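The idea of feeding random input to see whether the application breaks can be sketched in a few lines. This is only an illustration: `parse_quantity` is a hypothetical function under test, and the fixed random seed is a design choice that makes a failing run reproducible.

```python
import random
import string


def parse_quantity(text: str):
    """Hypothetical function under test: return a positive int, or None."""
    text = text.strip()
    if not text.isdigit():
        return None
    value = int(text)
    return value if value > 0 else None


def monkey_test(runs: int = 1000, seed: int = 42) -> int:
    """Feed random strings to parse_quantity and count unexpected failures."""
    rng = random.Random(seed)  # fixed seed so a failing run can be repeated
    failures = 0
    for _ in range(runs):
        length = rng.randint(0, 20)
        random_input = "".join(
            rng.choice(string.printable) for _ in range(length)
        )
        try:
            result = parse_quantity(random_input)
            # The only acceptable outcomes are None or a positive integer.
            assert result is None or result > 0
        except Exception:
            failures += 1
    return failures


if __name__ == "__main__":
    print("unexpected failures:", monkey_test())
```

No test cases are written in advance. The only expectation is that the function never crashes and never returns an invalid value, whatever input it receives.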


Example of Monkey Testing

A tester randomly turns off the power or unplugs the system to test the application’s ability to recover from sudden power failures.

Advantages of Monkey Testing

- Does not require extensive knowledge

- Ensures reliability

- Used to identify bugs that cannot be discovered through traditional methods

- Cost Effective

Difference between Monkey Testing and Gorilla Testing: Monkey testing and gorilla testing are not the same. Monkey testing feeds random input to the software system to find defects related to unexpected or invalid input, while gorilla testing focuses on repeatedly and thoroughly testing a specific module or feature of the software system.

c. Smoke Testing

Smoke testing is a testing type that is conducted to ensure that the basic and essential functionalities of an application or system are working as expected before moving on to more in-depth testing.

Example of Smoke Testing

Smoke testing for login will check whether the login screen is accessible and if the users can log in.

Advantages of Smoke Testing

- Quick Feedback

- Early detection of defects


4. Acceptance Testing

Acceptance Testing verifies whether a software application meets the specified acceptance criteria and is ready for deployment. It is usually performed by end-users or stakeholders to ensure that the software meets their requirements and is fit for purpose.

Acceptance Testing can be further divided into two types: User Acceptance Testing (UAT) and Business Acceptance Testing (BAT). User Acceptance Testing is performed by end-users to validate that the software meets their needs and is easy to use. Business Acceptance Testing is performed by stakeholders to ensure the alignment of business/functional requirements with the organization’s objectives.

Example of Acceptance Testing

Conducting tests to check whether an app meets the requirements of the user. For a banking app, acceptance testing would involve testing the app for login, account management, fund transfer, statement download, card payment, and so on.

Advantages of Acceptance Testing

- Increased stakeholder engagement

- Reduced Risk

- Reduced costs

Types of Acceptance Testing

a. Alpha Testing

Alpha testing is a type of testing that is performed in-house by the development team or a small group of users. It is the first phase of testing that is conducted before the software is released to the public or external users. Alpha testing is a crucial step in the software development process as it helps to identify bugs, defects, and usability issues before the product is released.

Example of Alpha Testing

A game development company is creating a new game. The development team performs alpha testing by testing the game’s performance, such as loading times, graphics, sound effects, and gameplay.

Advantages of Alpha Testing

- Early detection of issues

- Enhanced user experience

- Feedback from internal users

b. Beta Testing

Beta testing is a type of testing that is performed by a group of external users who are not a part of the development team. The purpose of beta testing is to gather feedback from real users and to identify any issues that were not found during the alpha testing phase.

Example of Beta Testing

A software company is releasing a new feature of its product. The company invites a group of external users to beta test the product and provide feedback on any bugs, defects, or issues that were not found during the alpha testing phase.

Advantages of Beta Testing

- Real-world feedback

- Marketing and promotion

- Enhanced user experience

c. User Acceptance Testing

User acceptance testing is a type of acceptance testing that is performed by the end-users of the software system. The focus of UAT is to validate the software system from a user’s perspective and to ensure that it meets their needs and requirements. UAT is typically performed at the end of the software development lifecycle.

Example of User Acceptance Testing

A company asks a batch of its customers to test the website and provide feedback on its functionality, usability, and overall user experience. Based on the feedback, it makes the necessary changes and improvements to the website.

Advantages of User Acceptance Testing

- Reduced development costs

- Improved customer satisfaction

d. Sanity Testing

Sanity testing is a testing type that is performed to quickly determine if a particular functionality or a small section of the software is working as expected after making minor changes. The main objective of sanity testing is to ensure the stability of the software system and to check whether the software is ready for more comprehensive testing.

Example of Sanity Testing

After fixing a bug that caused a crash in a mobile application, you can perform sanity testing by opening the app and ensuring that it no longer crashes.

Advantages of Sanity Testing

- Quick and efficient

- Saves time and effort

- Cost-effective

Types of Non Functional Testing

Here are different types of Non Functional Testing:

- Security Testing

- Performance Testing

- Usability Testing

- Compatibility Testing

1. Security Testing

Security testing is a type of software testing that assesses the security of a software application. It helps to identify vulnerabilities and weaknesses in the system and ensure that sensitive data is protected.

Examples of security testing include penetration testing, vulnerability scanning, and authentication testing.

Advantages of Security Testing

- Improved system security

- Protection of sensitive data

- Compliance with regulations

Types of Security Testing

- Penetration testing: This involves attempting to exploit potential vulnerabilities in the software system by simulating an attack from a hacker or other malicious actor.

- Fuzz testing: This involves sending large amounts of unexpected or malformed input data to the software system to identify potential vulnerabilities related to input validation and handling.

- Access control testing: This involves testing the software system’s access control mechanisms to make sure that access to sensitive data is granted only to authorized users.
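The access control check from the list above can be sketched as a small test suite. This is a simplified model rather than a real authorization system: the roles, the permission names, and the `can_access` function are hypothetical.

```python
# Hypothetical role-to-permission mapping used only for this illustration.
PERMISSIONS = {
    "admin": {"read_profile", "read_payment_data"},
    "customer": {"read_profile"},
}


def can_access(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions get no access."""
    return permission in PERMISSIONS.get(role, set())


def test_authorized_role_can_read_sensitive_data():
    assert can_access("admin", "read_payment_data")


def test_unauthorized_role_is_denied():
    assert not can_access("customer", "read_payment_data")


def test_unknown_role_is_denied():
    assert not can_access("guest", "read_profile")
```

The negative cases matter as much as the positive one, because an access control defect usually shows up as access that should have been denied.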

2. Performance Testing

Performance testing is a type of software testing that assesses the performance and response time of a software application under different workloads. It helps to identify bottlenecks in the system and improve the performance of the application.

Examples of performance testing include load testing, stress testing, and volume testing.

Advantages of Performance Testing

- Increased customer satisfaction

- Better scalability

- Improved user experience

Types of Performance Testing

a. Load Testing

Load testing is a type of performance testing that assesses the performance and response time of a software application under a specific workload. It helps to identify the maximum capacity of the system and ensure that it can handle the expected user load.

Examples of Load Testing

Simulating multiple users accessing a website at the same time or performing multiple transactions on a database.
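Simulating concurrent users can be sketched with a thread pool. This is a toy illustration rather than a substitute for a dedicated load testing tool: `handle_request` is a hypothetical stand-in that sleeps instead of calling a real server, and the user counts are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(user_id: int) -> float:
    """Hypothetical stand-in for one user request; returns its response time."""
    start = time.perf_counter()
    time.sleep(0.01)  # simulated server work; replace with a real request
    return time.perf_counter() - start


def run_load_test(concurrent_users: int) -> dict:
    """Send one request per simulated user in parallel and summarize timings."""
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        timings = list(pool.map(handle_request, range(concurrent_users)))
    return {
        "requests": len(timings),
        "avg_seconds": sum(timings) / len(timings),
        "max_seconds": max(timings),
    }


if __name__ == "__main__":
    print(run_load_test(concurrent_users=50))
```

In a real load test, the average and maximum response times would be compared against the performance targets for the expected number of users.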

Advantages of Load Testing

- Improved system reliability

- Better scalability

b. Stress Testing

Stress testing is a type of performance testing that assesses the performance and response time of a software application under extreme workloads. It helps to identify the system’s breaking point and ensure that it can handle unexpected workloads.

Examples of Stress Testing

Simulating thousands of users accessing a website simultaneously or performing millions of transactions on a database.

Advantages of Stress Testing

- Improved system reliability

- Better preparedness for real-world scenarios

- Better scalability

c. Volume Testing

Volume testing is a type of testing that assesses the performance and response time of a software application under a specific volume of data. It helps to identify the system’s capacity to handle large volumes of data.

Examples of Volume Testing

Inserting large amounts of data into a database or generating large amounts of traffic to a website.

Advantages of Volume Testing

- Improved system reliability

- Better scalability

d. Scalability Testing

Scalability testing evaluates the software’s ability to handle increasing workload and scale up or down in response to changing user demands. It involves testing the software system under a range of different load conditions to determine how it performs and whether it can handle increasing levels of traffic, data, or transactions.

Examples of Scalability Testing

Testing a website by gradually increasing the number of simulated users accessing the website and tracking how the system responds to it.

Advantages of Scalability Testing

- Optimize system performance

- Better scalability

e. Endurance Testing

The goal of endurance testing is to identify how well a software system can handle a workload over an extended period of time without any degradation in performance or stability. It involves simulating a normal or average workload or traffic scenario over a period of a few weeks to months.

Examples of Endurance Testing

Testing a website for performance with normal or average user traffic over an extended period.

Advantages of Endurance Testing

- Identifies long-term performance issues

- Reduces downtime

- Enhances user experience

3. Usability Testing

Usability testing is focused on evaluating the user interface and overall user experience of a software application or system. It involves testing the software with real users to assess its ease of use, learnability, efficiency, and overall user satisfaction.

Types of Usability Testing

a. Exploratory Testing

Exploratory Testing is a software testing type that is unscripted, meaning that the tester does not follow a pre-defined test plan or test case. Instead, the tester relies on their own expertise, intuition, and creativity to explore the software and find defects.

Example of Exploratory Testing

A tester tries different actions, such as tapping different buttons, swiping screens, and inputting different types of data. The tester looks for crashes, freezes, errors, and unexpected behaviors throughout the exploration.

Advantages of Exploratory Testing

- Exploratory testing allows testers to be more flexible.

- Exploratory testing can often be more time-efficient

- Used to test real world scenarios

b. User interface Testing (UI Testing)

UI Testing (User Interface Testing) is a type of software testing that focuses on testing the graphical user interface (GUI) of an application. The purpose of user interface testing is to ensure that the application’s GUI is functioning correctly and meets the requirements and expectations of end users.

Examples of User Interface Testing

Identifying visual bugs in the layout, design, color scheme, font size, and placement of buttons.

Advantages of User Interface Testing

- Identifying visual bugs

- Reduced development costs

- Increased Productivity

- Increased Usability

c. Accessibility Testing

Accessibility testing is a type of testing that is focused on evaluating the accessibility of a software application or system for users with disabilities. It involves testing the software with assistive technologies, such as screen readers or voice recognition software, to make sure that differently abled users are able to access and use the software application effectively.

Examples of Accessibility Testing

Testing a website with a screen reader to ensure that the website is compatible with screen readers and its content is accessible via text-to-speech.
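Part of accessibility testing can be automated. Screen readers rely on text alternatives to describe images, so one simple automated check is to look for `<img>` tags that have no `alt` attribute. The sketch below is illustrative only: the class and function names are hypothetical, and a check like this complements testing with a real screen reader rather than replacing it.

```python
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Collect the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            # An empty alt="" is allowed here, since decorative images
            # may use it on purpose; only a missing attribute is flagged.
            if "alt" not in attributes:
                self.missing.append(attributes.get("src", "<unknown>"))


def find_images_missing_alt(html: str) -> list:
    checker = MissingAltChecker()
    checker.feed(html)
    return checker.missing


page = '<img src="logo.png" alt="Company logo"><img src="chart.png">'
print(find_images_missing_alt(page))  # ['chart.png']
```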

Advantages of Accessibility Testing

- Improved User Experience

- Better Credibility

4. Compatibility Testing

Compatibility testing evaluates the compatibility of a software application or system with different hardware, software, operating systems, browsers, and other devices or components.

Types of Compatibility Testing

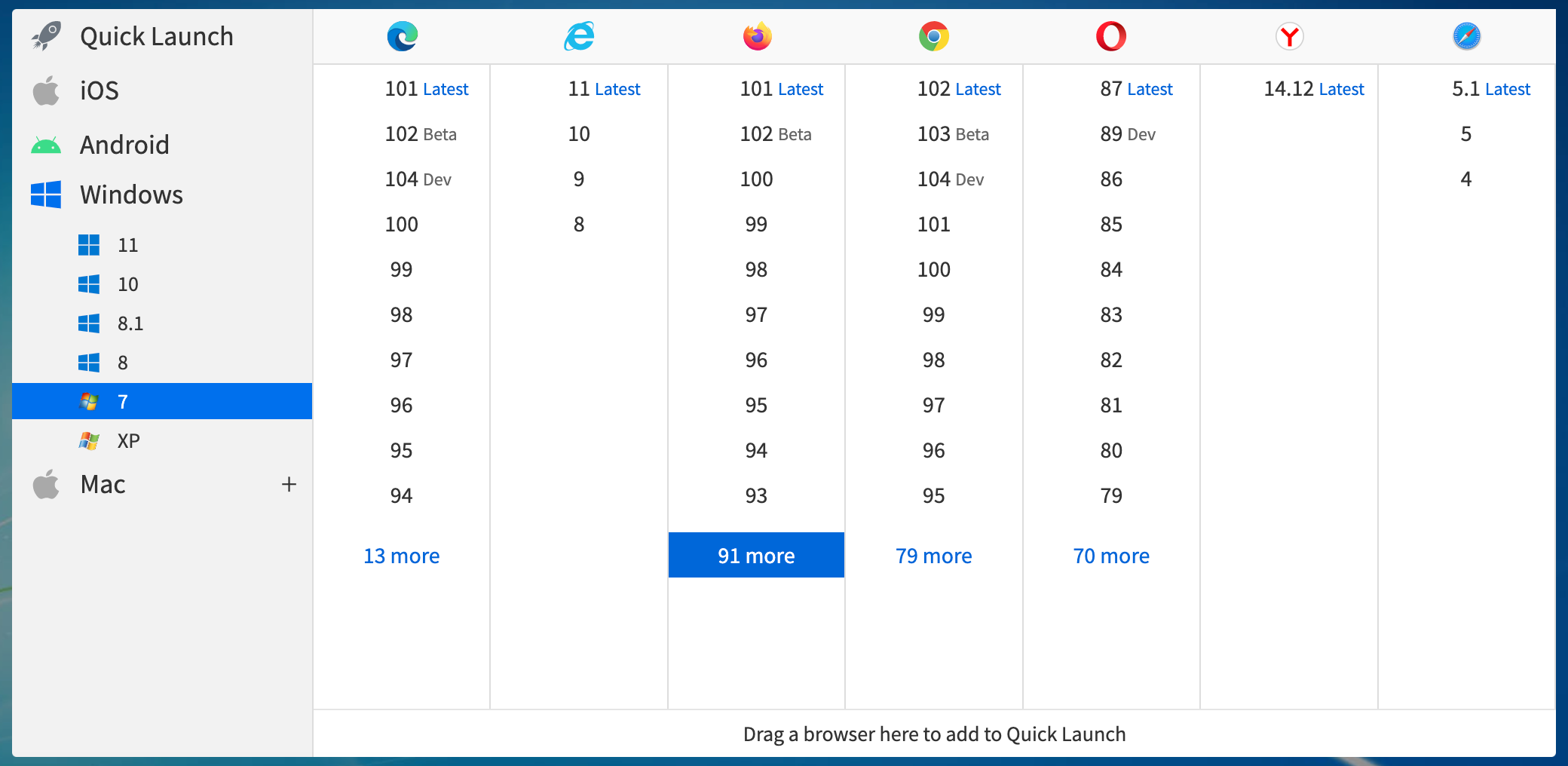

a. Cross Browser Testing

Cross browser testing is a type of software testing that ensures a web application or website works correctly across multiple browsers, operating systems, and devices. It involves testing the website’s functionality, performance, and user interface on different web browsers such as Google Chrome, Mozilla Firefox, Microsoft Edge, Safari, and Opera, among others.

Examples of Cross Browser Testing

A tester runs the application on different versions of Google Chrome to identify issues that arise in a particular version, or on different browsers to identify issues specific to one browser.


Advantages of Cross Browser Testing

- Increased customer satisfaction

- Enhanced brand reputation

- Early detection of issues

- Improved website traffic and conversion

b. Cross platform Testing

Cross platform testing is a testing type that ensures that an application or software system works correctly across different platforms, operating systems, and devices. It involves testing the application’s functionality, performance, and user interface on different platforms such as Windows, macOS, Linux, Android, iOS, and others.

Examples of Cross Platform Testing

A software company is developing a new accounting software system. The company performs cross-platform testing to ensure that the software works correctly on different operating systems such as Windows, macOS, and Linux.

Advantages of Cross Platform Testing

- Improved software quality

- Competitive Advantage

- Improved market reach

Other Types of Software Testing

Here are some other types of testing listed below:

- Regression Testing: Regression Testing is a software testing type that ensures that changes or modifications to an existing software application do not introduce new defects or negatively impact existing functionality.

- Recovery Testing: Recovery testing is a type of software testing that evaluates the system’s ability to recover from failures, errors, and other disruptions.

- API Testing: API testing is the process of testing the functionality, reliability, performance, and security of an application programming interface (API). An API consists of protocols, routines, and tools for building software applications.

- Active Testing: This type of testing involves executing test cases with a specific purpose and expected outcome.

- Agile Testing: It is a software testing approach that follows the principles and rules of Agile software development.

- Ad-hoc Testing: This type of testing is performed without any predefined test plan or test case.

- Benchmark Testing: This type of testing involves comparing the performance of the software system against established benchmarks or industry standards.

- Branch Testing: This type of testing is done to ensure that all the branches of the code are tested thoroughly.

- Code-driven Testing: This type of testing involves writing test cases in the same programming language as the code being tested. It helps in finding defects at an early stage of development.

- Context Driven Testing: Context-driven testing is an approach in which testers choose test objectives, techniques, and deliverables based on the specific context of the project, such as its users, risks, and constraints, rather than following one fixed process.

- Dynamic Testing: This type of testing involves executing the software with given inputs and comparing the actual output against the expected output.

- Data Driven Testing: In this type of testing, testers use different data sets to validate the software system’s behavior under different conditions.

- GUI Testing: This type of testing focuses on validating the graphical user interface of the software system. Testers verify that the user interface is intuitive and easy to use.

- Localization Testing: This type of testing ensures that the software system is correctly adapted to a specific locale or region. Language, currency, and similar regional settings are tested here.

- Keyword-driven Testing: This type of testing involves using keywords to define the test cases. Testers use predefined keywords to create test scripts that are executed by an automated testing tool.

- Parallel Testing: This type of testing involves executing the same test cases on multiple systems simultaneously. It helps in identifying performance issues and defects that are specific to certain configurations.

- Path Testing: This type of testing involves testing all possible paths through the code to ensure that each path has been executed, and all the expected outcomes are met.

- Retesting: Retesting means running a test again on a specific feature that failed in a previous test run, to confirm that the defect has been fixed.

- Static Testing: This type of testing involves analyzing the code and other artifacts without executing them. Testers review the code, documentation, and other artifacts to identify defects and potential issues.
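As an illustration of the data-driven testing entry in the list above, the sketch below runs one check against several data sets. The `apply_discount` function and the data rows are hypothetical; in practice the rows often come from a CSV file or spreadsheet.

```python
# Each row is one data set: (price, discount_percent, expected_total).
TEST_DATA = [
    (100.0, 0, 100.0),
    (100.0, 10, 90.0),
    (80.0, 25, 60.0),
    (50.0, 100, 0.0),
]


def apply_discount(price: float, discount_percent: float) -> float:
    """Hypothetical function under test."""
    return round(price * (1 - discount_percent / 100), 2)


def run_data_driven_tests() -> list:
    """Run the same check against every data row and return the failing rows."""
    failures = []
    for price, discount, expected in TEST_DATA:
        actual = apply_discount(price, discount)
        if actual != expected:
            failures.append((price, discount, expected, actual))
    return failures


if __name__ == "__main__":
    print("failing rows:", run_data_driven_tests())
```

Adding a new scenario means adding a row of data, not writing a new test, which is the main appeal of this approach.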


Popular Types of Testing: A Quick Comparison

Software testing comes in many forms, each with its own goal in the development process. Here is a quick comparison of the most commonly used testing types, their purpose, how they are done, and the tools used:

| Testing Type | Purpose | Manual / Automated | Tools Used |
|---|---|---|---|
| Unit Testing | To test individual components or functions in isolation. | Automated | JUnit, NUnit, TestNG |
| Integration Testing | To test the interaction between integrated units/modules. | Both | JUnit, Postman, SoapUI |
| System Testing | To verify the complete and integrated system. | Both | Selenium |
| Smoke Testing | To check basic functionality after a build. | Both | Selenium, Jenkins |
| Sanity Testing | To verify specific functionality after changes. | Manual | No specific tools; browser or app-based |
| Regression Testing | To ensure new changes haven’t affected existing features. | Both | Selenium |
| Performance Testing | To evaluate speed, scalability, and stability. | Automated | JMeter, LoadRunner |
| Load Testing | To measure system behavior under expected load. | Automated | JMeter, BlazeMeter |
| Stress Testing | To assess system performance under extreme conditions. | Automated | JMeter, LoadRunner |
| Usability Testing | To evaluate user-friendliness and UX. | Manual | Hotjar |
| Security Testing | To identify vulnerabilities and threats. | Both | OWASP ZAP, Burp Suite |
| Acceptance Testing | To validate software against business requirements. | Manual | Cucumber |
| Cross-Browser Testing | To ensure app works across different browsers/devices. | Both | BrowserStack |
| Compatibility Testing | To check software compatibility with OS, hardware, etc. | Manual | BrowserStack |
| Exploratory Testing | To discover issues through informal, unscripted testing. | Manual | Session-based tools |

Manual Testing

Manual testing is the process of testing software manually without the use of automation tools. In manual testing, testers execute test cases by following predefined steps to identify defects and verify that the application behaves as expected.

Manual testing is often used when human observation is needed, such as in user interface, usability, or exploratory testing. It is ideal for test cases that require flexibility or are not easily automated.

For example, black box testing, which focuses on verifying outputs without looking at the internal code, is commonly done manually. Manual testing is also helpful during the early stages of development, when the application is still changing frequently and automation is not yet worth the effort.

Main Benefits of Manual Testing:

- Easy to start and does not require programming skills.

- Enables real-time feedback and human judgment.

- Useful for testing visual elements, user experience, and exploratory scenarios.

- More effective for short-term projects or one-time test cases.

- More adaptable to sudden changes in test cases or requirements without needing script updates.


Automated Testing

Automated testing involves using software tools to run tests automatically, compare actual outcomes with expected results, and report defects without manual intervention.

Automated testing is best suited for repetitive and large-scale testing tasks where manual execution would be time-consuming. It helps increase test coverage, speed up the testing process, and reduce human error.

Common examples include unit testing, where individual pieces of code are tested in isolation, and regression testing, which ensures that existing functionality is not broken by new updates. Tools such as Selenium, JUnit, TestNG, and BrowserStack Automate are widely used for automation.
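As a rough illustration of what an automated unit test looks like, here is a minimal sketch using Python's built-in `unittest` module rather than the Java tools named above. The function `apply_discount` and its rules are hypothetical, invented only for this example. Re-running the same suite after every code change is what turns these unit tests into a regression check.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    """Each test compares an actual outcome with an expected result."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_returns_original_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(50.0, 120)


def run_suite() -> unittest.TestResult:
    """Load and run the tests, returning the result object for reporting."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    result = run_suite()
    print("all passed:", result.wasSuccessful())
```

The runner executes every test, compares actual and expected values, and reports failures without anyone stepping through the cases by hand.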

Main Benefits of Automated Testing:

- Saves time and effort for large or recurring test cases.

- Increases accuracy and reduces human error.

- Enhances test coverage by allowing execution across different environments and data sets.

- Enables continuous testing and integration in CI/CD pipelines.

- Supports parallel test execution, enabling tests to run simultaneously across multiple browsers, devices, or environments.
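The last two benefits, broader coverage across data sets and parallel execution, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `normalize_username` is a hypothetical function under test, and the environment names are placeholders that stand in for real browsers or devices, which this sketch does not actually drive.

```python
from concurrent.futures import ThreadPoolExecutor


def normalize_username(raw: str) -> str:
    """Hypothetical function under test: trim whitespace and lowercase."""
    return raw.strip().lower()


# Each tuple is one data set: (input, expected output).
CASES = [
    ("Alice", "alice"),
    ("  Bob  ", "bob"),
    ("CAROL\n", "carol"),
    ("", ""),
]

# Placeholder names; a real run would target actual browsers or devices.
ENVIRONMENTS = ["env-a", "env-b", "env-c"]


def run_cases(environment: str) -> tuple[str, list[str]]:
    """Run every data set for one environment and collect failure messages."""
    failures = []
    for raw, expected in CASES:
        actual = normalize_username(raw)
        if actual != expected:
            failures.append(f"{raw!r}: expected {expected!r}, got {actual!r}")
    return environment, failures


def run_in_parallel() -> dict[str, list[str]]:
    """Execute the same checks for all environments concurrently."""
    with ThreadPoolExecutor(max_workers=len(ENVIRONMENTS)) as pool:
        return dict(pool.map(run_cases, ENVIRONMENTS))


if __name__ == "__main__":
    for env, failures in run_in_parallel().items():
        print(env, "PASS" if not failures else failures)
```

Adding a new data set is a one-line change to `CASES`, and adding an environment is a one-line change to `ENVIRONMENTS`, which is why automated suites scale more easily than manual test passes.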

Manual Testing vs Automated Testing

Whichever testing type you choose, it is essential to decide which test cases will be run manually and which will be automated. The table below compares the two approaches.

| Criteria | Manual Testing | Automated Testing |
|---|---|---|
| Accuracy | More prone to human error, especially in repetitive checks. | Runs the same steps identically every time, which reduces human error; accuracy still depends on the quality of the test scripts. |
| Testing at Scale | Time-consuming when testing is needed at a large scale. | Handles large-scale testing efficiently. |
| Turnaround Time | A testing cycle takes longer to complete, so the turnaround time is higher. | A testing cycle completes much faster, so the turnaround time is lower. |
| Cost Efficiency | Costs more over time because every cycle relies on skilled testers. | Saves cost over time: once the automation infrastructure is set up, it can be reused for a long time. |
| User Experience | Well suited to evaluating the end user’s experience, since it relies on human observation and cognitive abilities. | Cannot fully assess user experience, since scripts lack human observation and cognitive abilities. |
| Areas of Specialization | Best used for Exploratory, Usability, and Ad-hoc Testing. | Best used for Regression Testing, Load Testing, Performance Testing, and repeated execution. |
| Tester Skills | Testers must be able to mimic user behavior and build test plans that cover all the scenarios. | Testers must be skilled at programming and scripting to build test cases and automate as many scenarios as possible. |

Both manual and automated testing have their own advantages and disadvantages. It is essential to choose the appropriate approach based on the project’s requirements, time frame, budget, and complexity. In many projects, a combination of the two gives the best results.

What are the Software Testing Techniques and How are They Different from Testing Types?

Software testing techniques are specific methods or approaches used to design test cases and evaluate software behavior. They help testers decide how to test a feature, what data to use, and what outcomes to expect. These techniques are broadly categorized into Black Box, White Box, and Gray Box testing.

- Black Box Testing is a software testing technique where the internal workings or code structure of the system being tested are not known to the tester.

- White Box Testing focuses on the software’s internal logic, structure, and coding. It provides testers with complete application knowledge, including access to source code and design documents, enabling them to inspect and verify the software’s inner workings, infrastructure, and integrations.

- Gray Box Testing combines black box and white box testing: the tester has some knowledge of the internal workings but focuses on functionality.
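The difference between these techniques shows up in how test cases are chosen. The following Python sketch is an illustration only: `shipping_fee` and its fee bands are hypothetical. The black box checks are derived purely from the written specification (its boundary values), while the white box checks are picked by reading the code so that every branch runs at least once.

```python
def shipping_fee(weight_kg: float) -> float:
    """Hypothetical spec: free up to 1 kg, 5.0 up to 10 kg, 20.0 above that.

    Non-positive weights are rejected with ValueError.
    """
    if weight_kg <= 0:
        raise ValueError("weight must be positive")
    if weight_kg <= 1:
        return 0.0
    if weight_kg <= 10:
        return 5.0
    return 20.0


def black_box_checks() -> None:
    """Cases derived only from the spec: the boundaries of each fee band."""
    assert shipping_fee(1) == 0.0
    assert shipping_fee(1.01) == 5.0
    assert shipping_fee(10) == 5.0
    assert shipping_fee(10.01) == 20.0


def white_box_checks() -> None:
    """Cases chosen by reading the code so that every branch executes."""
    try:
        shipping_fee(0)  # first branch: invalid input
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for non-positive weight")
    assert shipping_fee(0.5) == 0.0  # second branch: free band
    assert shipping_fee(5) == 5.0    # third branch: middle band
    assert shipping_fee(50) == 20.0  # final return: heavy band


if __name__ == "__main__":
    black_box_checks()
    white_box_checks()
    print("all checks passed")
```

A gray box approach would sit between the two, for example testing through the public function while using partial knowledge of the implementation (such as the fact that weights are compared with `<=`) to choose the boundary inputs.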

Testing types refer to what aspect of the software is being tested, such as functionality, performance, security or usability. For example, functional testing checks whether the application behaves correctly, while load testing checks how the system performs under high demand.

In simple words, testing types define the goal, and testing techniques define the method used to achieve that goal.
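To make the idea of different goals concrete, the sketch below applies two testing types to the same code. It is a simplified illustration, not a substitute for a dedicated load testing tool: `lookup_price` is a hypothetical function, and the short sleep only stands in for real I/O latency.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def lookup_price(item_id: int) -> float:
    """Hypothetical service call; the sleep stands in for I/O latency."""
    time.sleep(0.01)
    return 9.99 if item_id % 2 == 0 else 19.99


def functional_check() -> bool:
    """Goal: correctness. Does the function return the expected values?"""
    return lookup_price(2) == 9.99 and lookup_price(3) == 19.99


def load_check(requests: int = 50, workers: int = 10) -> float:
    """Goal: behavior under demand. Returns total seconds for all requests."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(lookup_price, range(requests)))
    elapsed = time.perf_counter() - start
    assert len(results) == requests  # every request must complete
    return elapsed


if __name__ == "__main__":
    print("functional check passed:", functional_check())
    print(f"50 concurrent requests took {load_check():.3f}s")
```

Both checks exercise the same function, but the first asks whether the result is correct and the second asks how long the system takes when many requests arrive at once.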

Testing types, on the other hand, are the different kinds of testing that can be carried out using these techniques.

For example, functional testing, regression testing, performance testing, security testing, and usability testing are all testing types that can be performed with black box, white box, or gray box techniques.

Testing types are used to test different aspects of the software system and to ensure that it meets the stakeholders’ requirements.

Conclusion

Software testing is an important step in the software development process that ensures the quality, reliability, and performance of the software. By understanding the different types, techniques, and approaches, such as manual and automated testing, teams can choose the most effective testing strategy based on project needs.

A well-planned testing process helps detect issues early, reduce risks, and deliver a better user experience. Investing in proper testing processes and tools such as BrowserStack and Selenium leads to more stable software and greater customer satisfaction.